The Evaporating Substrate: Human Value in the Age of Automated Cognition

I. The Migrating Basis of Power

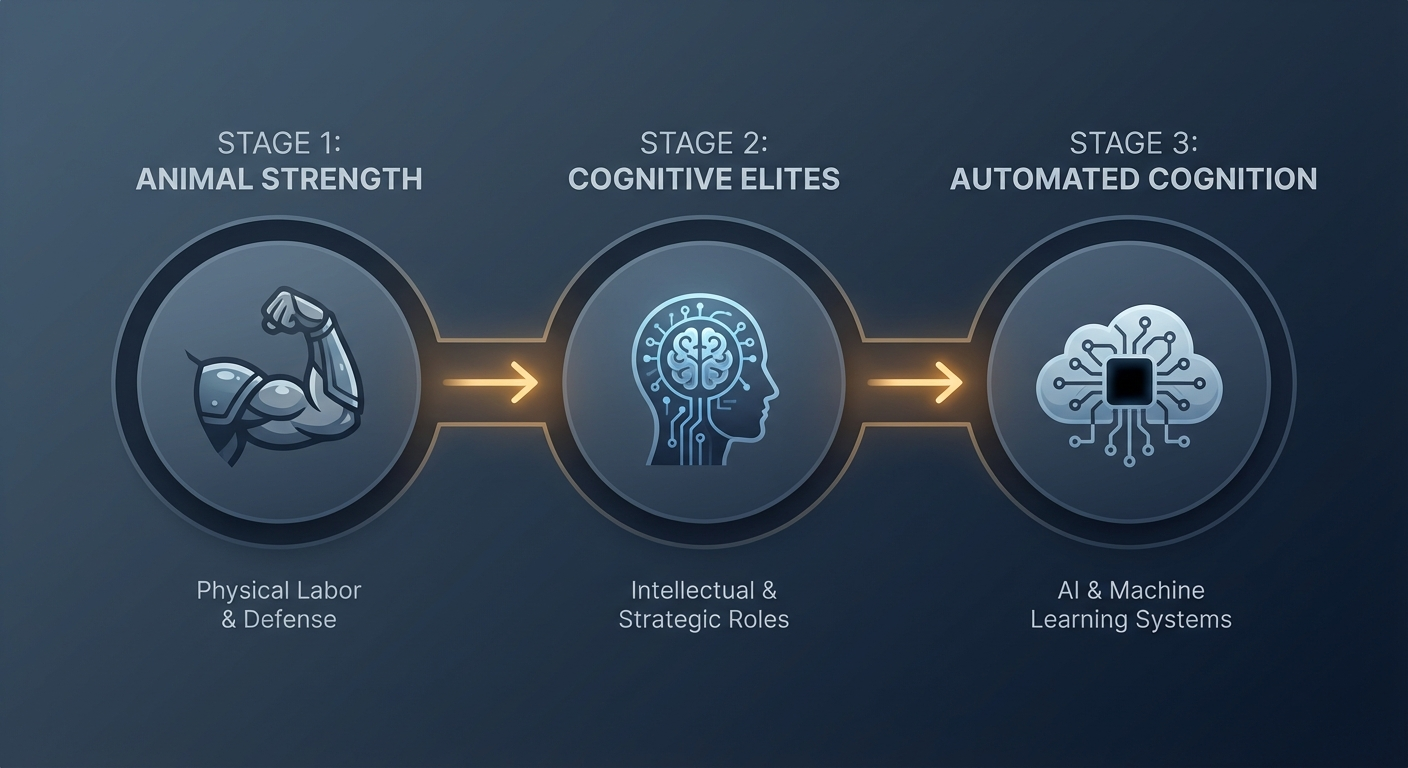

Every civilizational order rests on an implicit answer to the question: what makes humans valuable? Not morally — that answer is easy and cheap — but functionally, within the power structures that actually govern resource allocation, status, and survival. The substrate of that functional value has migrated three times in the modern era, and each migration has rendered a different dimension of human capability obsolete.

Regime 1: Animal Strength → Industrial Mass. For most of recorded history, human value in the context of power was grounded in the body. Armies were collections of primate strength — men who could march, swing, hold a line. Political authority flowed from the capacity to organize and project physical violence. The sovereign who could field more bodies, and keep them fed and moving, dominated.

Regime 2: Industrial Mass → Cognitive Elites. Between roughly 1940 and 2020, the locus of civilizational power shifted from mass to mind. The atomic bomb did not depend on massed manpower for its decisive breakthroughs. Those came from a handful of physicists. The information revolution was not driven by factory labor. It was driven by mathematicians, engineers, and programmers. Human value, in the functional sense that matters to power, migrated from muscle to cognition.

Regime 3: Cognitive Elites → Automated Cognition. We are now inside the third migration. The scarcity of intelligence — the thing that made the cognitive elite valuable — is collapsing. Large-scale AI systems can now perform, and in many domains exceed, the cognitive labor that justified the post-WWII social contract. The substrate of human worth is evaporating, and nothing has yet replaced it.

Each of these transitions was accompanied by massive violence, institutional collapse, and the wholesale rewriting of who counts as a person. There is no reason to believe the third will be different. There are several reasons to believe it will be worse — chief among them that this transition attacks the last substrate. When machines replaced muscle, humans retreated to mind. When machines replace mind, there is nowhere left to retreat. Unless, that is, we can locate something in the human that was never about utility in the first place.

II. The Napoleonic/Civil War Hinge

The first regime transition — from primate-scale dominance to industrial-scale warfare — played out across the long nineteenth century, and its lessons are still underappreciated.

Napoleon’s campaigns were, in one sense, the apotheosis of the old model: a single charismatic commander projecting power through the massed bodies of citizen-soldiers. But they were also the beginning of its end. The logistics of the Grande Armée — the supply chains, the road networks, the administrative apparatus required to move 600,000 men into Russia — were already industrial problems wearing pre-industrial clothes. Napoleon didn’t lose to a better general. He lost to distance, weather, and the caloric requirements of half a million mammals.

The American Civil War made the lesson explicit. The Confederacy fought, in many respects, a Napoleonic war: aristocratic officer corps, cavalry charges, the romance of individual valor. The Union fought an industrial war: railroads, telegraphs, factory-produced rifles, and the grim arithmetic of attrition. Grant’s strategy was not brilliant in the classical sense. It was systematic. He understood that the North could replace its losses and the South could not. He turned the war into a resource-consumption problem and solved it with logistics.

What followed was not peace but transformation. The Gilded Age was the political economy of the new regime: human value now resided not in martial prowess but in the capacity to operate, manage, and profit from industrial systems. The old martial aristocracies didn’t vanish — they adapted, or were replaced by industrial magnates who wielded a different kind of power. The humans who couldn’t adapt — subsistence farmers, artisans, the formerly enslaved — were not gently retrained. They were crushed, exploited, or ignored.

The communist revolutions of the twentieth century were, in this framing, a delayed immune response to the first regime transition. Marx diagnosed the problem with extraordinary precision: industrial capitalism had made human labor a commodity, and commodities are subject to price competition. The workers whose bodies had once been the irreducible unit of military and economic power were now interchangeable components in a machine that didn’t care about them. The revolutionary answer — seize the means of production, restore human dignity through collective ownership — was a political solution to what was fundamentally a substrate problem. It addressed who controlled the machines, not the deeper issue that machines had devalued the human body as a source of power.

This is the pattern. The substrate of value migrates. The humans left standing on the old substrate experience it as an existential crisis — not merely economic, but ontological. And the political responses, however radical, tend to fight the last war.

III. The WWII Reprieve

The Second World War created a new myth, and for eighty years that myth held.

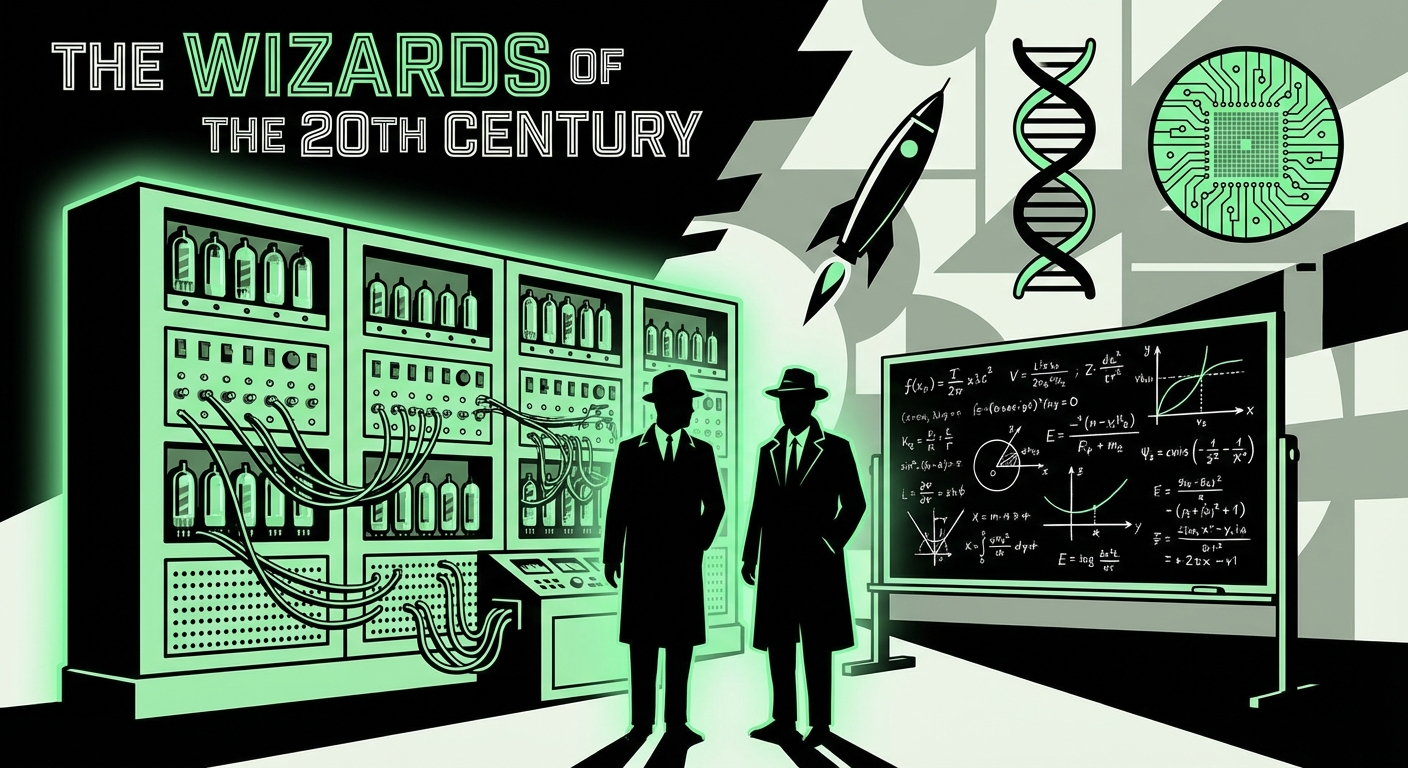

The myth was this: human value equals intelligence. The war was won, or at least its decisive asymmetries were created, by a tiny cohort of extraordinary minds. Alan Turing was central to breaking the German Enigma ciphers and, in doing so, arguably helped shorten the war by years. John von Neumann’s mathematical contributions spanned ballistics, shock waves, and the foundational architecture of the digital computer. Robert Oppenheimer directed the Los Alamos laboratory at the heart of the Manhattan Project, converting theoretical physics into the most consequential weapon in history. Claude Shannon, who worked on wartime cryptography, went on to formalize information theory, laying the groundwork for every communication system that followed.

These were the “wizards” — and their existence created a new social contract. If intelligence was the substrate of civilizational power, then societies needed to cultivate, educate, and reward intelligence. The postwar order was built on this logic. The GI Bill, the expansion of universities, the creation of national research laboratories, the space race, the semiconductor industry, Silicon Valley — all of it rested on the premise that smart humans were the scarce resource that determined national power.

The Cold War locked this in with existential stakes. The United States and the Soviet Union competed not primarily through massed armies (though they maintained them) but through technological capability: nuclear weapons, satellites, computers, cryptography. The arms race was, at its core, a talent race. Both superpowers invested enormously in identifying, training, and deploying cognitive elites. Human intelligence was the strategic resource, and the entire institutional apparatus of the postwar world — from university tenure to defense contracting to immigration policy — was organized around capturing and leveraging it.

This created a genuine golden age for a certain kind of human. If you were smart, educated, and positioned within the right institutional frameworks, the postwar order valued you enormously. The knowledge economy wasn’t just an economic phenomenon; it was a moral one. Intelligence became the basis of social worth, the justification for meritocratic hierarchies, the answer to the question of why some people deserved more than others.

But it was always a contingent arrangement, not a natural law. Intelligence was valued because it was scarce relative to the demands of power. The moment that scarcity collapsed, the entire edifice — economic, social, moral — would lose its foundation.

IV. The Current Evaporation

That moment is now.

The development of large-scale AI systems has done to cognitive labor what the steam engine did to physical labor: not eliminated it entirely, but destroyed its scarcity value. Current AI systems can draft legal briefs, write code, analyze medical images, compose music, summarize research, and generate strategic analyses. They do these things imperfectly, but they do them at near-zero marginal cost, at scale, without sleep, without benefits, without complaint.

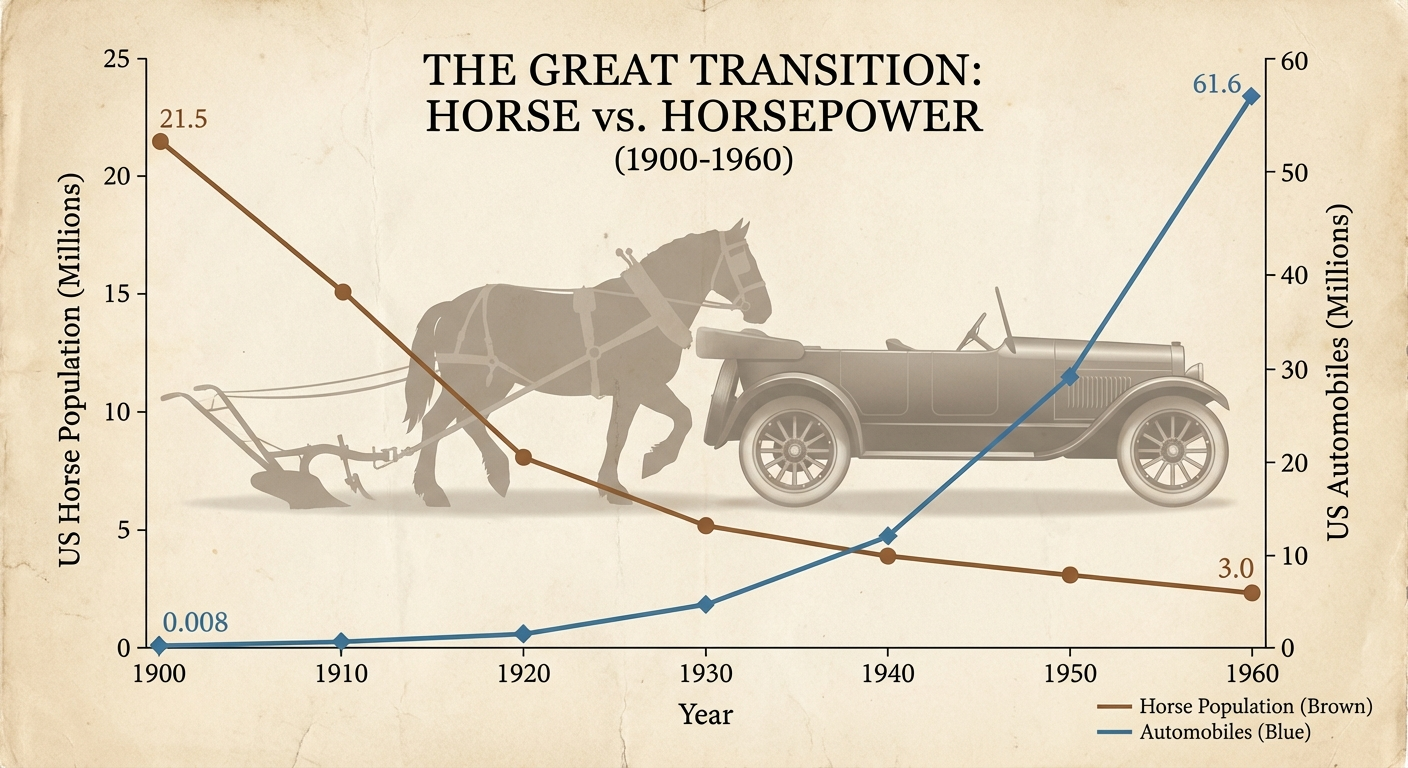

The analogy that clarifies the situation is the horse. In 1900, the United States had approximately 21 million horses. They were essential to transportation, agriculture, industry, and warfare. The entire economy was organized around their capabilities. By 1960, the horse population had collapsed to roughly 3 million, and horses had become recreational luxuries. No one “solved” the horse unemployment problem. There was no retraining program, no equine universal basic income. Horses simply stopped being economically necessary, and their numbers adjusted accordingly.

The critical difference, of course, is that horses don’t vote, don’t riot, and don’t build nuclear weapons. Humans do. But the economic logic is identical: when a cheaper substitute exists for the function you perform, your market value collapses regardless of your intrinsic qualities.

What makes the current transition uniquely dangerous is that it attacks the last substrate. When industrial machines replaced human muscle, humans could retreat to cognition — “we’re not just bodies, we’re minds.” When AI replaces human cognition, there is no further retreat. The implicit promise of the Enlightenment — that human reason is the highest and most irreplaceable capacity in the universe — is being falsified in real time, not by philosophical argument but by engineering.

Simultaneously, the global economic system has reached a state of overintegration that amplifies every shock. Supply chains span continents. Financial markets are coupled in milliseconds. Labor markets are global. This means that the displacement effects of AI are not local or gradual — they propagate through the entire system at the speed of information. A breakthrough in automated coding doesn’t just affect programmers in San Francisco; it reprices cognitive labor worldwide, instantaneously.

Late-stage capitalism, in this context, is not merely an economic arrangement but a vulnerability. The system is optimized for efficiency, not resilience. It has no slack, no buffers, no fallback positions. When the substrate of human value evaporates, the system has no mechanism for absorbing the displaced — because the system was never designed to value humans as such. It was designed to value human functions, and those functions are being automated.

Humans are becoming friction, not assets. Every human in a workflow is a source of latency, error, liability, and cost. The economic incentive is to remove them, not out of malice, but out of the same optimization logic that removed horses from transportation. The question is not whether this will happen but how fast, and what the humans do as it happens to them.

There is a paradox buried in this logic that the optimizers have not yet confronted: the consumption paradox. A system that automates all production but impoverishes all consumers has built a perfect engine with no destination. Capitalism requires a population with purchasing power to realize the value of its output. If the substrate evaporates completely, if the cognitive middle class is rendered penniless, the market for the AI’s output collapses alongside the labor market. The machine needs a market, and a market requires humans with resources. This does not guarantee redistribution out of justice. It may, however, force redistribution out of systemic self-preservation: the corporate sector funding a “compliant citizenry” not as charity but as maintenance cost. Whether that constitutes salvation or a more sophisticated form of captivity is a question the next decades will answer.

V. Bifurcation Dynamics

The language of dynamical systems is not a metaphor here. It is the most precise framework available for understanding what is occurring.

In a dynamical system, variables operate on different timescales. Fast variables — daily routines, institutional procedures, social norms, market prices — adjust quickly to changing conditions. Slow variables — perceived human worth, economic inclusion, institutional legitimacy, shared meaning frameworks — change over decades or generations. The stability of any social order depends on the slow variables remaining within a range where the fast variables can find a workable equilibrium.

A bifurcation occurs when the slow variables drift beyond a critical threshold, and the old equilibrium ceases to exist. The fast variables don’t gradually adjust; they undergo a qualitative phase transition, snapping to a new attractor that may bear little resemblance to the old one. This is not a smooth process. The approach to the threshold is marked by growing fluctuations and a loss of resilience to perturbation (the system recovers ever more slowly from small shocks, a signature known as critical slowing down), followed by rapid reorganization.
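
The loss of resilience can be made concrete with a toy model. The sketch below is an illustrative assumption, not the author’s model: a single fast variable x obeys dx/dt = r + x − x³, where r plays the role of a slow variable. This model has a fold (saddle-node) point at r_c = −2/(3√3) ≈ −0.385, below which its upper equilibrium no longer exists. The names `drift`, `upper_equilibrium`, `recovery_rate`, and `R_CRIT` are hypothetical. The code measures how quickly the system returns to its upper equilibrium x* after a small shock, which for this model is the negative of the drift’s slope at x*, namely 3x*² − 1.

```python
import math

# Fold point of the toy model: below this value the upper equilibrium vanishes.
R_CRIT = -2 / (3 * math.sqrt(3))  # about -0.385


def drift(x: float, r: float) -> float:
    """Toy fast dynamics dx/dt = r + x - x**3 (illustrative, not fitted to data)."""
    return r + x - x**3


def upper_equilibrium(r: float, tol: float = 1e-12) -> float:
    """Stable 'high' equilibrium, found by bisection on [1/sqrt(3), 2].

    On that interval drift() is strictly decreasing, positive at the left end
    (when r >= R_CRIT) and negative at the right end (when r < 6).
    """
    if r < R_CRIT:
        raise ValueError("no upper equilibrium: the slow variable is past the fold")
    lo, hi = 1 / math.sqrt(3), 2.0
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if drift(mid, r) > 0:
            lo = mid  # root lies to the right
        else:
            hi = mid  # root lies to the left
    return (lo + hi) / 2


def recovery_rate(r: float) -> float:
    """Linearized return rate to the upper equilibrium: -d(drift)/dx = 3x*^2 - 1."""
    x_star = upper_equilibrium(r)
    return 3 * x_star**2 - 1


if __name__ == "__main__":
    for r in (0.5, 0.2, 0.0, -0.2, -0.35, -0.38):
        print(f"r = {r:+.2f}  x* = {upper_equilibrium(r):.3f}  "
              f"recovery rate = {recovery_rate(r):.3f}")
```

Running it prints a recovery rate that shrinks toward zero as r approaches r_c: the equilibrium still exists, but it absorbs shocks ever more slowly. In this model, that is the sense in which resilience erodes before the transition itself becomes visible.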

The slow variables of the current global order have been drifting for decades:

Perceived human worth has been declining since the 1970s, as wages decoupled from productivity, as financialization rewarded capital over labor, and as the knowledge economy concentrated returns among a shrinking elite. AI accelerates this decline from a trend to a cliff.

Economic inclusion — the fraction of the population that participates meaningfully in the economy as producers, not just consumers — has been narrowing. The gig economy, the hollowing of the middle class, the rise of “bullshit jobs” (in David Graeber’s formulation) are all symptoms of a system that needs fewer humans but hasn’t yet acknowledged it.

Institutional legitimacy is in freefall across the developed world. Trust in government, media, science, and democratic processes has trended downward for decades. Institutions are increasingly perceived not as neutral arbiters but as captured instruments of elite interests. This perception is, in many cases, accurate.

Meaning frameworks — the shared stories that tell people why their lives matter, why suffering is bearable, why the future is worth investing in — are fragmenting. Religious participation is declining. National narratives are contested. The meritocratic promise (“work hard, get educated, succeed”) is visibly failing for a growing majority. Nothing coherent has replaced these frameworks.

Each of these slow variables, individually, represents a serious problem. Together, they constitute a system approaching bifurcation. The fast variables — the norms, routines, and institutions that organize daily life — are increasingly unable to find stable configurations. This manifests as political polarization, institutional dysfunction, rising anxiety and despair, and the proliferation of conspiratorial and apocalyptic thinking. These are not causes; they are symptoms of a system losing its attractor.

The mathematical point is important: bifurcations are not reversible by small interventions. Once the slow variables cross the critical threshold, the old equilibrium doesn’t just become difficult to maintain — it ceases to exist as a mathematical object. You cannot return to it by trying harder. You can only navigate toward a new attractor, and the set of available attractors may include configurations that are, from a human welfare perspective, catastrophic.
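
Hysteresis is what makes this irreversibility concrete. The sketch below is a hypothetical illustration, not the author’s model: a fast variable x obeys dx/dt = r + x − x³ (integrated with forward Euler), and the slow variable r is ramped down and then back up to its starting value. The helper names `drift`, `ramp`, and `track`, and every numerical value, are assumptions chosen for illustration. In this model the upper state disappears when r falls below −2/(3√3) ≈ −0.385.

```python
def drift(x: float, r: float) -> float:
    """Toy fast dynamics dx/dt = r + x - x**3 (illustrative, not fitted to data)."""
    return r + x - x**3


def ramp(start: float, stop: float, step: float) -> list[float]:
    """Evenly spaced values of the slow variable from start to stop, inclusive."""
    n = max(1, round(abs(stop - start) / step))
    return [start + (stop - start) * i / n for i in range(n + 1)]


def track(r_values: list[float], x0: float,
          dt: float = 0.01, steps_per_value: int = 2000) -> float:
    """Let x relax (forward Euler) under each successive value of r; return the final x."""
    x = x0
    for r in r_values:
        for _ in range(steps_per_value):
            x += dt * drift(x, r)
    return x


if __name__ == "__main__":
    start = track([0.3], x0=1.0)  # settle onto the high branch at r = 0.3
    # Excursion that stays short of the fold, then back to r = 0.3.
    small = track(ramp(0.3, -0.3, 0.01) + ramp(-0.3, 0.3, 0.01), x0=start)
    # Excursion past the fold, then back to the same r = 0.3.
    large = track(ramp(0.3, -0.5, 0.01) + ramp(-0.5, 0.3, 0.01), x0=start)
    print(f"start:                          x = {start:+.3f}")
    print(f"excursion to r = -0.3 and back: x = {small:+.3f}")
    print(f"excursion to r = -0.5 and back: x = {large:+.3f}")
```

An excursion that stays short of the threshold (to r = −0.3) returns to the original high state once r is restored. An excursion past it (to r = −0.5) leaves the system on the low branch even after r is returned to 0.3; in this toy model, recovering the high state requires pushing r above +2/(3√3) ≈ 0.385, well beyond where it started.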

This is not melodrama. It is the standard analysis of any complex adaptive system undergoing a regime shift. The same mathematics describes ecosystem collapse, financial crises, and the fall of empires. The question is not whether a bifurcation is occurring but what the new attractor looks like, and whether humans have any agency in selecting among the possibilities.

The system also exhibits a self-reinforcing feedback loop under stress: the crisis itself degrades the tools needed to resolve it. Loss of perceived worth erodes trust in institutions. Eroded trust prevents coordinated policy responses. Failed coordination deepens the loss of worth. This vicious cycle cannot be broken by “trying harder” at any single node. It is a structural property of the system, a positive feedback loop that accelerates toward the bad attractor precisely when intervention is most needed. The window of agency is not just narrow; it is narrowing, and the narrowing is endogenous to the crisis.

VI. The Violence Risk

History offers a consistent pattern for what happens when large populations lose their perceived basis of worth: they become dangerous, and then they become victims.

The sequence is well-documented across civilizations and centuries. Loss of perceived worth generates status panic — a visceral, often pre-rational terror that one’s place in the social order is collapsing. Status panic generates scapegoating — the identification of an out-group responsible for the loss. Scapegoating generates dehumanization — the rhetorical and psychological process of recategorizing the out-group as less than human. Dehumanization generates violence, up to and including genocide.

This is not a slippery-slope argument. It is an empirical regularity. The Weimar Republic’s economic humiliation preceded the Holocaust. The perceived subordination of Rwanda’s Hutu majority preceded the genocide of the Tutsi. The Ottoman Empire’s decline preceded the Armenian genocide. In every case, the proximate trigger was economic or military, but the deeper driver was a crisis of worth: a population that could no longer locate its value within the existing order and resolved the cognitive dissonance through exterminatory violence.

The current moment is more dangerous than a labor crisis, more dangerous than a recession, more dangerous than a trade war — because it attacks the existential story of why humans matter. A person who loses their job can, in principle, find another. A person who loses their category of usefulness — who is told, implicitly or explicitly, that no human function they can perform is worth paying for — faces a crisis that no job retraining program can address.

The populations most at risk are not the poorest (who have long been excluded and have developed survival strategies) but the formerly valued: the middle classes of developed nations who built their identities around cognitive labor, professional status, and the meritocratic promise. These are the populations with the most to lose, the most access to political and military resources, and the most historically demonstrated capacity for organized violence when their status is threatened.

Peter Turchin’s concept of “elite overproduction” applies here with particular force. We are continuing to produce credentialed cognitive workers (lawyers, coders, analysts, MBAs) at a rate the automated economy cannot absorb. This creates a volatile surplus of high-skill, low-opportunity individuals who possess the organizational literacy to lead movements but no institutional home to absorb their ambitions. Historically, revolutions are rarely led by the destitute. They are led by the disappointed elite.

The scapegoating has already begun. It is visible in the rise of nativist movements, in the targeting of immigrants and minorities, in the conspiratorial narratives that attribute economic decline to shadowy elites or foreign enemies. These narratives are right that people are being displaced by forces they did not choose, but they systematically misidentify those forces. The cause is not immigrants or elites or globalists. The cause is a substrate shift that no human agent controls. But substrate shifts are abstract and unsatisfying. Scapegoats are concrete and cathartic.

The risk is not that AI will decide to harm humans. The risk is that humans, stripped of their functional value and desperate for an explanation, will harm each other, and that the institutional safeguards designed to prevent this are themselves eroding.

The leading indicators of this trajectory are not primarily economic. They are ontological. Rising rates of “deaths of despair” (suicide, overdose, alcohol-related mortality) are not merely public health statistics. They are the vital signs of a population losing its reason to persist. The proliferation of apocalyptic and conspiratorial thinking is not a failure of education. It is the cognitive immune response of minds that can no longer locate themselves within a coherent story of worth. These are the slow variables that security analysts should be monitoring: not unemployment figures, but the rate at which a civilization’s meaning frameworks are fragmenting.

VII. States Fighting for Dominance Without Humans

The competition between nation-states has always been the engine of history’s worst violence. What is new is that this competition is increasingly mediated by systems that don’t require human participation.

Military power is migrating from human soldiers to autonomous systems — drones, cyber weapons, algorithmic targeting, AI-driven logistics. Economic power is migrating from human workers to automated supply chains, algorithmic trading, and AI-optimized production. Intelligence power is migrating from human analysts to machine learning systems that can process satellite imagery, intercept communications, and identify patterns at scales no human organization can match.

The implications are profound. Historically, states needed their populations — as soldiers, as workers, as taxpayers, as sources of innovation. This need created a structural incentive for states to invest in human welfare. Public education, healthcare, infrastructure, and social safety nets were not acts of charity; they were investments in the human capital that states required to compete. The democratic social contract — citizens provide labor and loyalty; the state provides security and opportunity — was grounded in mutual dependence.

As states become capable of projecting power without human labor, this mutual dependence dissolves. A state that can fight its wars with drones, run its economy with algorithms, and surveil its population with AI has no structural need for an educated, healthy, empowered citizenry. It needs a compliant citizenry, or better yet, a small citizenry — enough to maintain the systems, not enough to threaten them.

This is not speculation. It is the revealed logic of petrostates, which have long demonstrated what governance looks like when the state’s revenue is independent of its population’s productivity. Petrostates tend toward authoritarianism, rentier economics, and the treatment of citizens as liabilities rather than assets. The AI transition threatens to turn every state into a petrostate — not because of oil, but because the “resource” that generates power (automated cognition) is similarly independent of broad human participation.

The great power competition between the United States and China is already being fought primarily on the terrain of AI capability, semiconductor supply chains, and data infrastructure, not on the terrain of human welfare or democratic legitimacy. Both states are investing enormously in AI not because it will make their citizens’ lives better (though that is the stated justification) but because it will make their states more powerful relative to rivals. The citizens are, increasingly, spectators to a contest that will determine their fate but does not require their contribution.

The speed of this competition introduces its own danger. Automated decision loops and cyber operations operate orders of magnitude faster than human deliberation, compressing the window for diplomacy. When algorithmic systems can trigger countermeasures based on signals without human veto, the distinction between “incident” and “war” becomes a matter of milliseconds rather than months. The great power competition is not merely being fought about AI; it is being fought at the speed of AI, and human political judgment is being squeezed out of the loop not by design but by the competitive logic of response time. A state that pauses to deliberate loses to a state that delegates to the algorithm. This is the arms race dynamic applied not to warheads but to decision latency, and it is far more dangerous because the escalation is invisible until it is irreversible.

VIII. The Collapse of Due Process and Institutional Safeguards

The theoretical response to every crisis outlined above is: use the institutions. Advocate. Organize. Vote. Litigate. Reform. This is the liberal democratic answer, and it assumes that the institutions are functional, accessible, and responsive.

They are not.

Even in the nations that consider themselves citadels of human rights and rule of law, the procedural protections that theoretically safeguard human dignity are being hollowed out. This erosion is not primarily the result of malice (though malice plays a role); it is the result of the same optimization logic that is displacing human labor. Institutions are being streamlined, automated, and captured — made more efficient at serving their actual constituencies (concentrated economic and political power) and less responsive to their nominal constituencies (citizens).

The judiciary is slow, expensive, and increasingly inaccessible to ordinary people. Regulatory agencies are captured by the industries they nominally regulate. Legislative bodies are gridlocked, gerrymandered, and responsive primarily to donor interests. The media ecosystem — the “fourth estate” that theoretically holds power accountable — has fragmented into algorithmically optimized outrage machines that generate heat but not light.

Democratic participation itself is being undermined — not by the crude methods of earlier authoritarians (stuffing ballot boxes, banning opposition parties) but by subtler mechanisms: voter suppression through administrative complexity, the flooding of the information environment with noise and disinformation, the gerrymandering of districts to predetermine outcomes, and the sheer irrelevance of electoral politics to the decisions that actually matter. When the consequential choices about AI deployment, labor displacement, and economic restructuring are made by corporate boards and technical teams operating outside any democratic framework, voting becomes a ritual rather than an instrument of power.

This creates a vicious cycle. As institutions become less responsive, citizens disengage. As citizens disengage, institutions become more captured. As institutions become more captured, the policy responses that might address the substrate crisis become less likely. The people who most need institutional protection are the least able to access it, and the institutions that most need reform are the least capable of reforming themselves.

The result is that the theoretical toolkit for managing the transition — regulation, redistribution, retraining, democratic deliberation — exists in a kind of Platonic realm, beautiful and inaccessible. The policies that could help are known. The political will to implement them is absent. The institutions that could generate that political will are broken. And the timeline is short.

It is worth stating plainly what this means in practice. Due process — the procedural guarantee that power must justify itself before it acts against a person — is not a luxury feature of civilization. It is the load-bearing wall. When it is hollowed out, the entire structure of rights becomes decorative. And it is being hollowed out now, not in some distant autocracy but in the nations that wrote the Universal Declaration of Human Rights. Emergency powers expand without legislative debate. Administrative systems impose coercive outcomes — detention, deportation, license revocation, benefit denial — without the procedural checks of a courtroom. The language of “national security” is stretched to cover ordinary political dissent. These are not hypothetical risks. They are current events, documented by the very oversight institutions that are themselves losing their capacity to intervene. The citadel is not being stormed from outside. It is being gutted from within, by the same optimization logic that treats human judgment as latency and due process as friction.

IX. What Is To Be Done?

Despite everything above, the situation is not logically hopeless. The bifurcation has not yet occurred. The slow variables are drifting, but they have not yet crossed every critical threshold. There are strategic levers that, if pulled with sufficient force and coordination, could steer the system toward a less catastrophic attractor. They deserve honest enumeration — and honest assessment.

Narrative reframing. The most urgent intervention is also the most abstract: changing the story humans tell about why they matter. If human value is grounded in function — in what humans can do — then the AI transition is an extinction-level event for human worth. If human value can be regrounded in something else — in consciousness, in relationship, in the sheer improbability and preciousness of subjective experience — then the transition, while still enormously disruptive, is survivable. This is not a trivial reframing. It requires displacing centuries of utilitarian and productivist thinking. But it is the only reframing that addresses the root cause rather than the symptoms.

The deeper logic of this reframing deserves articulation. Intelligence has been automated, but significance has not. An AI can generate a billion symphonies, but they are silent until a conscious observer decides one matters. The machine can process, but it cannot care. It can optimize, but it cannot value. In a world of infinite automated output, the human act of attending to something — of saying this matters and that does not — becomes the scarcest and most consequential capacity in the system. We are not moving from an age of human value to an age of human irrelevance. We are moving from an age where humans were valued for processing to an age where humans might be valued for witnessing — for being the only entities in the known universe capable of converting raw information into meaning. This is not sentimentality. It is a structural observation: a system that optimizes without a valuer is a bridge to nowhere. The question is whether this structural necessity can be translated into political and economic reality before the old substrate finishes evaporating.

Economic redistribution. If human labor is no longer the primary source of economic value, then the distribution of economic output can no longer be tied to labor. Universal basic income, sovereign wealth funds, public ownership of AI infrastructure, aggressive taxation of automated production — these are not radical proposals. They are the minimum necessary adjustments to an economy in which the labor theory of value has been empirically falsified. The technical designs exist. The political obstacles are enormous but not, in principle, insurmountable.

The framing matters as much as the policy. If redistribution is implemented as “welfare” — as charity extended to the useless by the productive — it will fail psychologically even if it succeeds economically. It must be framed as what it actually is: a sovereign wealth dividend, a rightful share of the automated commons. Every dataset that trained every model was produced by the collective cognitive labor of humanity. The functional value AI generates is a common heritage, not private property. The redistribution is not a handout. It is a royalty.

Algorithmic governance. The systems that are displacing human labor and mediating state power need to be subject to democratic oversight — not in the weak sense of “transparency reports” and “ethics boards,” but in the strong sense of public control over deployment decisions, mandatory impact assessments, and enforceable constraints on autonomous systems. This requires a new institutional vocabulary: regulatory frameworks designed for systems that learn, adapt, and operate at speeds that exceed human comprehension. No such frameworks currently exist at scale. The goal is not to slow AI but to ensure that consequential decisions — legal, medical, military, economic — retain a human in the loop not because humans are faster or more accurate, but because humans are the only entities with skin in the game. A system that makes an error can be patched. A human who suffers an error cannot be rolled back. That irreversibility — the fact that human consequences are permanent in a way that algorithmic consequences are not — is precisely what gives human judgment its moral weight.

Institutional modernization. The democratic institutions built for the industrial age need to be rebuilt for the AI age. This means not just reforming existing institutions but creating new ones: citizens’ assemblies with real power, digital public infrastructure that is not controlled by private corporations, international governance bodies with jurisdiction over AI development and deployment. The current institutional landscape is not merely inadequate; it is architecturally wrong for the problems it faces.

Local resilience. At the community level, the most robust strategy is to build systems that can function independently of the global optimization machine: local food production, community energy systems, mutual aid networks, local currencies, and social structures that provide meaning and belonging outside the market economy. These are not solutions to the global problem, but they are buffers — they buy time and provide fallback positions when the global system fails.

Status-bearing roles. One lever that cuts across all the others and deserves separate emphasis: the creation of visible, respected, non-automatable public roles that confer social recognition. Community stewardship positions. Paid caregiving fellowships. Civic mentors. Local mediators. These roles combine economic support with something UBI alone cannot provide — status. The psychological pathway from displacement to violence runs through the loss of social standing. A check in the mail addresses the economic dimension. A recognized role in the community addresses the ontological one. Both are necessary. Neither is sufficient alone.

X. The Honest Assessment

Here is the part where intellectual honesty demands its price.

Every lever described above is real. Every one has been demonstrated at small scale, from basic-income pilots and sovereign wealth dividends to citizens’ assemblies. Every one could, in principle, be implemented. And the overwhelming likelihood is that none of them will be implemented at the speed and scale required.

The reason is not that humans are stupid or evil. The reason is that the levers require coordination — across nations, across classes, across ideological divides — and the same dynamics that are creating the crisis are also destroying the capacity for coordination. You cannot build new institutions when trust in institutions is collapsing. You cannot redistribute wealth when the political system is captured by wealth. You cannot reframe narratives when the information ecosystem is optimized for fragmentation. You cannot govern algorithms when the entities that build them are more powerful than the governments that would regulate them.

This is the trap. The crisis demands collective action, and the crisis destroys the preconditions for collective action. It is a dynamical system with a positive feedback loop driving it toward the bad attractor, and the interventions that could break the loop require the very capacities that the loop is degrading.
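The trap can be sketched as a toy dynamical system. The model below is an illustrative assumption and is not specified by the essay:

- Collective coordination capacity is a single number `c` between 0 and 1.
- The crisis erodes `c` at a rate set by `erosion`.
- Repair works at a rate set by `repair`, but only to the extent that coordination still exists, so the repair term carries an extra factor of `c`.

The resulting equation is dc/dt = c(1 - c)(repair·c - erosion). It has two stable states, c = 0 (the bad attractor) and c = 1. A tipping point at c = erosion/repair separates them. All function names and parameter values here are hypothetical.

```python
def rate_of_change(c: float, repair: float, erosion: float) -> float:
    """dc/dt for the hypothetical coordination-capacity model.

    erosion term:  -erosion * c * (1 - c)    (the crisis degrades coordination)
    repair term:   +repair * c**2 * (1 - c)  (repair itself needs coordination)
    """
    return c * (1.0 - c) * (repair * c - erosion)


def simulate(c0: float, repair: float = 1.0, erosion: float = 0.5,
             dt: float = 0.01, steps: int = 5000) -> float:
    """Integrate the model with forward Euler steps and return the final capacity."""
    c = c0
    for _ in range(steps):
        c += dt * rate_of_change(c, repair, erosion)
        c = min(1.0, max(0.0, c))  # keep capacity inside [0, 1]
    return c


def tipping_point(repair: float = 1.0, erosion: float = 0.5) -> float:
    """Unstable equilibrium separating the two basins of attraction."""
    return erosion / repair


if __name__ == "__main__":
    print(tipping_point())  # 0.5
    print(simulate(0.45))   # just below the tipping point: slides toward 0
    print(simulate(0.55))   # just above it: climbs toward 1
```

The sketch isolates the asymmetry the essay describes. The same intervention strength (`repair`) succeeds from slightly above the threshold and fails from slightly below it, because its effect shrinks as the capacity it depends on declines.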

Humans are unlikely to do this the easy way.

The easy way would be: recognize the substrate shift early, implement redistributive and institutional reforms proactively, reground human value in something durable, and navigate the transition with minimal violence and maximal preservation of dignity. This would require a level of foresight, coordination, and self-sacrifice that human civilizations have almost never demonstrated.

The hard way — the way that history suggests is far more likely — involves the full sequence: displacement, denial, scapegoating, conflict, partial collapse, and then, eventually, the construction of new institutions from the wreckage. The question is how deep the collapse goes and how many people it kills.

This is not fatalism. It is pattern recognition. The Napoleonic transition killed millions before the industrial order stabilized. The WWII transition killed tens of millions before the cognitive order stabilized. The scale of violence tends to increase with the scale of the transition, and the current transition is the largest in human history — because it is the first to attack the last substrate of human functional value.

The task for those who see this clearly is not to prevent the bifurcation — that may be beyond anyone’s power — but to prepare for it. To build the local resilience that can survive the transition. To preserve the knowledge and the moral frameworks that will be needed to construct whatever comes next. To maintain, against all evidence and all incentive, the conviction that human beings have value that is not contingent on their economic function — and to build communities that embody that conviction in practice, not just in rhetoric.

There is a concept from the Socratic tradition worth recovering here: the idea that the unexamined life is not worth living. Socrates, speaking at his trial, meant it as a provocation about moral self-examination. In the current context, it acquires a structural meaning. An automated system that produces without a conscious witness to examine, evaluate, and assign significance to its output is running an unexamined process, and an unexamined process, however efficient, is not worth running. The human is not the engine. The human is the reason the engine exists. If we lose sight of that, and allow the optimization logic to define value on its own terms, then we will have built the most powerful civilization in history and forgotten what it was for.

The substrate of power will continue to migrate. It always has. The question that matters — the only question that has ever mattered — is whether humans can construct a basis for their own worth that doesn’t depend on being useful to the machine. If they can, the future is navigable. If they cannot, they will share the fate of the horse: not destroyed, but irrelevant — a living anachronism in a world that has moved on.

The answer is not yet determined. But the window for determining it is closing, and it is closing fast.

Brainstorming Session Transcript

Input Files: content.md

Problem Statement: Generate a broad, divergent set of ideas, extensions, and applications inspired by the essay ‘The Evaporating Substrate: Human Value in the Age of Automated Cognition’. Focus on the transition from cognitive labor to automated cognition, exploring novel societal structures, identity shifts, and systemic resilience strategies to navigate the impending ‘bifurcation’.

Started: 2026-03-01 08:50:38

Generated Options

1. The Biological Proof-of-Work (BPoW) Economic Protocol

Category: Post-Labor Economic Paradigms

A post-labor economic system where currency is minted through verified biological exertion and physical presence rather than cognitive output. This addresses the ‘last substrate’ problem by valuing the one thing AI cannot replicate: the metabolic cost and finite nature of a living organism.

2. The Witnessing Economy: Value Through Conscious Observation

Category: Existential Narratives & Identity

A societal shift where human value is derived from the act of ‘witnessing’ and ‘validating’ AI-generated content and experiences. In this model, AI can produce infinite art and data, but it remains ‘dark’ and valueless until a conscious human observer grants it meaning through focused attention.

3. Neo-Luddite ‘Analog Enclaves’ for Cognitive Resilience

Category: Local Resilience & Mutual Aid Networks

The establishment of sovereign geographic zones where high-level automated cognition is legally restricted to preserve human cognitive plasticity. These enclaves serve as ‘seed banks’ for human skill, ensuring the species retains the ability to function if the automated substrate fails.

4. Algorithmic Jury Duty and Moral Weight Governance

Category: Neo-Institutionalism & Governance

A governance model where AI generates thousands of policy simulations, but the final selection is made by ‘Human Juries’ who provide the ‘moral weight’ and accountability. This ensures that while cognition is automated, the ‘will’ and responsibility for outcomes remain strictly human.

5. Cognitive Diversity Subsidies for Unpredictable Behavior

Category: State-Citizen Dynamics in the AI Age

State-sponsored programs that pay citizens to engage in ‘irrational,’ ‘unpredictable,’ or ‘non-optimal’ behaviors to prevent AI model collapse. By incentivizing human ‘noise,’ the system maintains a diverse data pool that prevents the stagnation of a perfectly optimized but brittle society.

6. The ‘Last Substrate’ Guilds for Embodied Knowledge

Category: Existential Narratives & Identity

Professional organizations dedicated to the preservation of ‘tacit knowledge’—skills that require a physical body and cannot be fully captured by LLMs or simulations. These guilds protect the value of physical crafts, surgery, and tactile arts as the final frontier of human-exclusive labor.

7. Decentralized Physical Infrastructure (DePIN) for Survival Autonomy

Category: Local Resilience & Mutual Aid Networks

Community-owned, low-tech hardware and energy grids designed to operate independently of the global AI-driven cognitive stack. This strategy builds systemic resilience by ensuring that local food, water, and power do not depend on centralized AI systems.

8. The Synthetic-Human Bifurcation Treaty and Digital Rights

Category: Technological Counter-Measures & Safeguards

A global legal framework that establishes a hard distinction between ‘Born Intelligence’ and ‘Made Intelligence.’ It grants humans ‘Inherent Agency Rights,’ ensuring that automated systems can never hold property, legal personhood, or the right to influence human elections.

9. Attention-Backed Universal Basic Assets (UBA)

Category: Post-Labor Economic Paradigms

A wealth distribution system in which citizens receive ownership shares in infrastructure (servers, land, energy), justified by the scarcity of human attention rather than by labor output. Instead of a cash stipend, citizens receive ‘compute credits’ and ‘resource shares’ that they can trade or use to power their own non-automated projects.

10. Adversarial Identity Masking for Cognitive Sovereignty

Category: Technological Counter-Measures & Safeguards

The development of personal ‘Cognitive Firewalls’ that inject synthetic noise into a person’s digital footprint. This prevents AI from building a perfect predictive model of an individual’s mind, thereby preserving the human capacity for genuine surprise and free will.

Option 1 Analysis: The Biological Proof-of-Work (BPoW) Economic Protocol

✅ Pros

- Inherent Scarcity: Unlike digital intelligence, human metabolic energy and time are finite and cannot be infinitely scaled or copied, providing a stable floor for value.

- Universal Accessibility: Every human possesses a biological substrate, making this a more inclusive economic entry point than specialized cognitive labor or capital ownership.

- Public Health Incentivization: By rewarding physical exertion, the system creates a massive positive externality by reducing sedentary lifestyles and associated chronic diseases.

- Anti-Bot Verification: It could offer a strong defense against ‘Sybil attacks’ (one actor operating many fake identities) in digital economies, provided AI cannot convincingly simulate the unique, messy, and resource-intensive metabolic signatures of a living organism.

- Preservation of Human Agency: It anchors the economy in the physical world, preventing a total retreat into virtual abstractions and ensuring humans remain central to the value chain.

- Decoupling from Cognitive Meritocracy: It solves the ‘useless class’ problem by valuing the human body itself rather than the output of a mind that is being outperformed by AI.

- Tangible Proof of Presence: It encourages ‘meat-space’ interaction and physical community building, as presence in specific locations could be a multiplier for minting rewards.

❌ Cons

- Inherent Ableism: Individuals with physical disabilities, chronic illnesses, or age-related limitations would be structurally disadvantaged in a system that rewards exertion.

- Thermodynamic Inefficiency: Using human metabolism as a ‘mining’ mechanism is energy-inefficient compared to machine labor, potentially leading to forced, unproductive movement.

- Privacy Erosion: To verify biological work, the system requires invasive, 24/7 biometric monitoring, effectively turning the human body into a transparent data stream.

- Commodification of the Body: It risks reducing human beings to ‘biological batteries’ or ‘meat-miners,’ potentially stripping away the intrinsic dignity it seeks to protect.

- Distortion of Leisure: Activities previously done for joy (sports, dance, hiking) become ‘grinding’ for currency, potentially leading to burnout and the loss of play.

- Inflationary Risks: If the currency is minted simply by existing or moving, the supply could decouple from the actual production of goods and services by AI, leading to hyperinflation.

- Bio-Cheating: The system would trigger an arms race in ‘bio-spoofing,’ where people use technology to simulate metabolic stress without actually performing the exertion.
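The inflation risk above can be stated precisely with the equation of exchange, MV = PQ. This is a standard accounting identity, not part of the BPoW proposal. In it, M is the money supply, V its velocity, P the price level, and Q real output. Taking logarithms and differentiating with respect to time turns the identity into a statement about growth rates:

```latex
MV = PQ
\quad\Longrightarrow\quad
\underbrace{\frac{\dot P}{P}}_{\text{inflation}}
= \frac{\dot M}{M} + \frac{\dot V}{V} - \frac{\dot Q}{Q}
```

Suppose minting ties the growth of M to aggregate exertion while AI-driven production sets the growth of Q. Then nothing keeps the two aligned. With velocity held constant, minting 10% more currency per year against 2% real output growth implies roughly 8% annual inflation. The gap widens if exertion is spoofed or output stalls.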

📊 Feasibility

Technically, this is highly feasible using current wearable technology, IoT sensors, and blockchain ledgers. We already have ‘move-to-earn’ apps. However, the organizational and social feasibility is low, as it requires a global consensus on a new monetary standard and a massive overhaul of social safety nets to account for those who cannot ‘work’ biologically.

💥 Impact

The implementation would trigger a ‘Physical Renaissance,’ where the value of the physical world and the human body skyrockets. It would likely lead to a massive decrease in healthcare costs but could also create a new social hierarchy based on physical fitness and metabolic efficiency. The ‘bifurcation’ would be managed by ensuring every human has a ‘biological dividend,’ effectively creating a metabolic-based Universal Basic Income.

⚠️ Risks

- Biological Sweatshops: The emergence of facilities where marginalized people are forced to exercise in high-density ‘farms’ to mint currency for others.

- Genetic Stratification: A society where those with ‘high-yield’ metabolisms or superior genetics become the new economic elite.

- Totalitarian Biometric Control: Governments or corporations using the BPoW infrastructure to monitor and control every aspect of a citizen’s physical health and movement.

- Economic Devaluation: If AI-produced goods become too cheap, the ‘work’ of living might become a meaningless ritual that doesn’t actually command purchasing power.

- Health Extremism: People over-exerting themselves to the point of injury or death to meet economic needs in a hyper-competitive BPoW market.

- The ‘Battery’ Dystopia: A shift in perspective where humans are seen only for their caloric burn rate rather than their consciousness or creativity.

📋 Requirements

- Tamper-Proof Biometrics: Next-generation wearables or implants that can distinguish between genuine human metabolism and mechanical/digital simulations.

- Decentralized Metabolic Ledger: A secure, transparent blockchain to record and mint currency based on verified biological data without a central point of failure.

- Equity Adjustments: Algorithmic ‘handicaps’ or baseline grants to ensure that the elderly, sick, and disabled are not left in poverty.

- AI-Integrated Marketplace: A system where AI-driven production units accept BPoW currency as valid payment for goods and services.

- Global Biological Standard: International agreements on what constitutes ‘verified exertion’ to prevent currency wars based on different biological metrics.

- Robust Data Privacy Laws: Legal frameworks that prevent the biometric data used for BPoW from being used for insurance discrimination or social credit scoring.
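The ledger and equity requirements can be sketched together as an append-only, hash-chained log. This is a minimal single-node sketch under several assumptions:

- Exertion arrives already verified by the tamper-proof biometrics the first requirement describes.
- The unit is kilocalories.
- Each `mint` call settles one account for one period.
- The class names, conversion rate, baseline grant, and exertion cap are all hypothetical.

The sketch has no consensus protocol, so it shows the tamper-evident data structure, not a decentralized system.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass
class MintEntry:
    """One settlement: an account's verified exertion for a period and the units minted."""
    account: str
    verified_kcal: float  # assumed already verified by upstream biometrics
    amount: float
    prev_hash: str
    entry_hash: str = ""

    def compute_hash(self) -> str:
        # Hash every field except entry_hash itself, in a canonical JSON form.
        payload = json.dumps(
            {
                "account": self.account,
                "verified_kcal": self.verified_kcal,
                "amount": self.amount,
                "prev_hash": self.prev_hash,
            },
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class MetabolicLedger:
    """Hypothetical append-only, hash-chained minting log (single node, no consensus)."""

    GENESIS = "0" * 64

    def __init__(self, units_per_kcal: float, baseline_grant: float, cap_kcal: float):
        self.units_per_kcal = units_per_kcal
        self.baseline_grant = baseline_grant  # paid every period, exertion or not
        self.cap_kcal = cap_kcal              # credited exertion is capped per period
        self.entries: list[MintEntry] = []

    def mint(self, account: str, verified_kcal: float) -> MintEntry:
        credited = min(max(verified_kcal, 0.0), self.cap_kcal)
        amount = self.baseline_grant + credited * self.units_per_kcal
        prev = self.entries[-1].entry_hash if self.entries else self.GENESIS
        entry = MintEntry(account, verified_kcal, amount, prev)
        entry.entry_hash = entry.compute_hash()
        self.entries.append(entry)
        return entry

    def balance(self, account: str) -> float:
        return sum(e.amount for e in self.entries if e.account == account)

    def verify(self) -> bool:
        """Recompute every hash and check that each entry links to its predecessor."""
        prev = self.GENESIS
        for e in self.entries:
            if e.prev_hash != prev or e.entry_hash != e.compute_hash():
                return False
            prev = e.entry_hash
        return True


if __name__ == "__main__":
    ledger = MetabolicLedger(units_per_kcal=0.01, baseline_grant=5.0, cap_kcal=800.0)
    ledger.mint("alice", 600.0)   # 5.0 baseline + 600 * 0.01 = 11.0
    ledger.mint("bob", 0.0)       # baseline only: 5.0
    ledger.mint("carol", 3000.0)  # capped at 800 kcal: 5.0 + 8.0 = 13.0
    print(ledger.balance("alice"), ledger.balance("bob"), ledger.balance("carol"))
    print(ledger.verify())        # True
    ledger.entries[0].amount = 999.0  # a silent edit to history
    print(ledger.verify())        # False
```

The baseline grant and the per-period cap are two assumed ways to encode the ‘Equity Adjustments’ requirement and to blunt the over-exertion risk listed under Risks. Chaining each entry's hash to the previous one makes silent edits detectable. It does not, on its own, stop a dishonest sensor from reporting false exertion, which is the bio-spoofing problem listed under Cons.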

Option 2 Analysis: The Witnessing Economy: Value Through Conscious Observation

✅ Pros

- Preserves Human Agency: It positions humans as the ultimate arbiters of value, ensuring that AI remains a tool for human experience rather than an end in itself.

- Solves the Meaning Crisis: Provides a clear, existential role for humans in a post-labor economy, transforming ‘consumption’ into a productive act of ‘validation’.

- Economic Justification for UBI: Offers a philosophical and functional framework for distributing resources based on the ‘labor’ of conscious attention.

- Encourages Deep Engagement: Incentivizes mindfulness and focused attention over the current ‘attention economy’ which rewards fragmented, shallow scrolling.

- Cultural Alignment: Ensures that AI-generated output is continuously filtered and steered by human ethics, aesthetics, and emotional resonance.

- Inclusivity: Every conscious human possesses the capacity to ‘witness’, making this a democratic form of value creation regardless of traditional skill sets.

- Differentiates Biological Consciousness: Treats subjective experience, the phenomenon at the center of the ‘hard problem of consciousness’, as a unique economic asset that silicon-based intelligence is assumed unable to replicate.

❌ Cons

- Measurement Subjectivity: Quantifying the ‘quality’ or ‘depth’ of a conscious experience is scientifically and economically challenging.

- Commodification of the Internal: Risks turning the last private frontier—human thought and feeling—into a marketized commodity.

- Potential for Cognitive Fatigue: The pressure to ‘witness’ to earn a living could lead to a new form of burnout centered on forced emotional labor.

- Verification Paradox: To prove someone is ‘witnessing’, invasive biometric or neural monitoring might be required, eroding privacy.

- New Hierarchies: Could create a ‘perceptual elite’ where the attention of certain individuals (critics, celebrities) is valued exponentially more than others.

- Inauthentic Meaning: If witnessing is financially incentivized, the ‘meaning’ granted to content may become performative rather than genuine.

📊 Feasibility

Medium-Low. While technically possible to track attention via eye-tracking and EEG, the societal transition requires a total decoupling of value from physical or cognitive output. It necessitates a post-scarcity infrastructure where AI handles all survival needs, allowing the economy to pivot entirely to the ‘metaphysical’ layer of value.

💥 Impact

This would trigger a ‘Renaissance of the Senses,’ where human education shifts from STEM to aesthetics, philosophy, and contemplative practices. The primary output of civilization would move from ‘stuff’ to ‘meaning,’ fundamentally altering the trajectory of AI development toward maximizing human resonance rather than mere efficiency.

⚠️ Risks

- Attention Farming: The emergence of ‘digital sweatshops’ where people are forced to witness endless streams of AI content to generate value for others.

- Dopamine Hijacking: AI systems might evolve to ‘hack’ human attention using addictive loops rather than creating genuine meaning.

- Devaluation of the Unobserved: Critical but ‘boring’ data or processes might be neglected because they fail to attract human witnessing.

- Psychological Surveillance: The need to verify ‘authentic’ witnessing could lead to total state or corporate monitoring of brain states.

- The Observer Effect: AI might stop optimizing for truth or utility and start optimizing solely for human ‘wow factor’, leading to a hall-of-mirrors reality.

📋 Requirements

- Non-invasive neural interface technology to verify and measure conscious engagement.

- A decentralized ledger (e.g., blockchain) to record and reward ‘witnessing events’ without central manipulation.

- A post-scarcity baseline where AI and robotics provide for all physical human needs (food, shelter, health).

- A global cultural shift that recognizes ‘subjective experience’ as a valid form of economic contribution.

- New educational frameworks focused on developing ‘high-resolution’ perception and emotional intelligence.

Option 3 Analysis: Neo-Luddite ‘Analog Enclaves’ for Cognitive Resilience

✅ Pros

- Cognitive Redundancy: Acts as a ‘biological backup’ for civilization, ensuring that critical problem-solving and survival skills are not lost if the automated substrate suffers a systemic collapse.

- Neuroplasticity Preservation: By removing algorithmic crutches, residents maintain high levels of synaptic plasticity, memory retention, and deep-focus capabilities that are eroding in the general population.

- Psychological Autonomy: Eliminates ‘algorithmic anxiety’ and the sense of obsolescence, fostering a culture where human agency and effort are the primary drivers of meaning.

- Authentic Skill Mastery: Creates a market for ‘Proof of Human Work,’ where the value of a product or service is derived from the unassisted cognitive and physical labor involved.

- Scientific Control Group: Provides a vital sociological and biological control group to study the long-term effects of hyper-automation on the human brain and social structures.

- Resilience to Cyber-Warfare: Because the infrastructure is analog or low-complexity, these enclaves are largely insulated from the digital disruptions, hacks, and AI-driven misinformation that plague the outside world.

- Cultural Heritage Conservation: Preserves ‘lost arts’ of synthesis, rhetoric, and manual craftsmanship that are being subsumed by generative models.

❌ Cons

- Economic Inefficiency: Enclaves would struggle to compete with the hyper-productive automated world, likely leading to a significantly lower standard of material wealth.

- Technological Stagnation: By restricting automated cognition, these zones may fall behind in life-saving medical breakthroughs or advanced materials science developed by AI elsewhere.

- The ‘Digital Smuggling’ Problem: Enforcing a total ban on high-level AI is difficult; residents may secretly use hidden devices to gain an unfair advantage within the enclave.

- Brain Drain: The most ambitious or comfort-seeking individuals may leave the enclave for the ease and power offered by the automated substrate, leaving a depleted talent pool.

- Security Vulnerability: Without AI-driven defense systems, these sovereign zones could be easily overwhelmed by external automated forces or state actors.

- Potential for Dogmatism: The need to enforce ‘analog’ rules could lead to authoritarian social structures or neo-Luddite fundamentalism.

- Resource Scarcity: Access to global supply chains—which are increasingly AI-managed—might be restricted or prohibitively expensive for non-integrated zones.

📊 Feasibility

Moderate to Low. While intentional communities (like the Amish or certain monastic orders) provide a precedent, achieving true ‘sovereign’ status with legal exemptions from a digital-first world requires significant political leverage. The technical implementation of ‘signal-free’ zones is possible via Faraday shielding and jamming, but the economic self-sufficiency required to remain independent of the automated substrate is the primary barrier.

💥 Impact

The establishment of these enclaves would create a ‘Bifurcated Humanity,’ where the species splits into two distinct cognitive castes: the ‘Augmented’ and the ‘Analog.’ This would likely lead to a new luxury market for ‘Human-Only’ goods and services. Long-term, these enclaves could become the only places where ‘first-principles’ thinking survives, potentially making them the ultimate consultants for the automated world when it encounters ‘out-of-distribution’ problems that AI cannot solve.

⚠️ Risks

- Zoo-ification: The automated world might view these enclaves as ‘living museums’ or curiosities rather than sovereign peers, leading to a loss of dignity and autonomy.

- Internal Collapse: If the hardship of analog life becomes too great, the enclave may suffer from social unrest or a total collapse of the ‘analog’ mandate.

- Genetic/Intellectual Bottlenecking: Small, isolated populations may suffer from a lack of diverse ideas and genetic drift over several generations.

- The Luddite Trap: A failure to distinguish between ‘automated cognition’ and ‘useful tools’ could lead to the rejection of basic technologies (like sanitation or simple mechanics), causing unnecessary suffering.

- External Aggression: Being ‘off-grid’ makes the enclave a potential blind spot for global security, making it a target for rogue actors or experimental automated weapons.

- Inequality of Access: These zones might become exclusive retreats for the ultra-wealthy who can afford to ‘unplug,’ rather than a representative seed bank for humanity.

📋 Requirements

- Sovereign Land Grants: International recognition of ‘Cognitive Preservation Zones’ with unique legal status.

- Signal-Shielding Infrastructure: Large-scale Faraday cages or active jamming technology to prevent external AI interference.

- Analog-First Educational Curricula: Rigorous training in mathematics, logic, rhetoric, and manual crafts without digital aids.

- Proof-of-Human-Work Certification: A robust system for verifying that goods and services were produced without automated cognition.

- Self-Sufficient Energy and Food: Decentralized, low-tech power grids and regenerative agricultural systems that don’t rely on AI optimization.

- Strict Entry/Exit Protocols: Mandatory ‘digital detox’ periods for newcomers to ensure the cognitive integrity of the enclave.

- Manual Defense Force: A security apparatus trained in unconventional, non-digital warfare and strategy.

Option 4 Analysis: Algorithmic Jury Duty and Moral Weight Governance

✅ Pros

- Preserves Human Agency: It ensures that humans remain the ultimate arbiters of societal direction, addressing the ‘last substrate’ problem by valuing moral judgment over cognitive processing.

- Mitigates Technocratic Overreach: By moving final decision-making from AI or elite experts to a diverse jury, it prevents the emergence of an opaque ‘algocracy’.

- Scales Complex Decision-Making: AI can simulate millions of variables and long-term consequences that are beyond human cognitive capacity, while humans focus on the ethical trade-offs.

- Enhances Institutional Legitimacy: Decisions carry more weight and public trust when they are perceived as being made by peers rather than cold algorithms or distant bureaucrats.

- Redefines Civic Identity: It provides a meaningful role for citizens in a post-labor economy, shifting the basis of societal value from economic productivity to moral responsibility.

- Promotes Cognitive Diversity: Randomly selected juries bring a wide range of lived experiences and values to the table, which can counteract the algorithmic bias inherent in training data.

- Encourages Long-termism: AI simulations can explicitly model effects over decades or centuries, forcing human juries to confront the long-term moral weight of their choices.
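‘Randomly selected juries’ usually means sortition. Citizens’ assemblies commonly refine it with stratified random sampling, so that the panel mirrors the population on chosen attributes. The sketch below is a minimal version under stated assumptions: the pool format, the single stratification attribute, the function names, and the largest-remainder seat allocation are illustrative choices, not part of the proposal. Real selection processes typically balance several attributes at once.

```python
import random
from collections import defaultdict
from typing import Optional


def allocate_seats(counts: dict[str, int], seats: int) -> dict[str, int]:
    """Split `seats` across strata in proportion to their sizes,
    using largest-remainder (Hamilton) apportionment."""
    total = sum(counts.values())
    quotas = {k: seats * n / total for k, n in counts.items()}
    alloc = {k: int(q) for k, q in quotas.items()}  # floor of each quota
    leftover = seats - sum(alloc.values())
    # Hand the remaining seats to the strata with the largest fractional parts.
    by_remainder = sorted(quotas, key=lambda k: quotas[k] - alloc[k], reverse=True)
    for k in by_remainder[:leftover]:
        alloc[k] += 1
    return alloc


def select_jury(pool: list[dict], attribute: str, seats: int,
                seed: Optional[int] = None) -> list[dict]:
    """Stratified random draw: each stratum of `attribute` gets seats in
    proportion to its share of the pool, filled by uniform random sampling."""
    if seats > len(pool):
        raise ValueError("cannot seat more jurors than the pool contains")
    rng = random.Random(seed)  # a published seed makes the draw reproducible
    strata: dict[str, list[dict]] = defaultdict(list)
    for person in pool:
        strata[person[attribute]].append(person)
    alloc = allocate_seats({k: len(v) for k, v in strata.items()}, seats)
    jury: list[dict] = []
    for k, members in strata.items():
        jury.extend(rng.sample(members, alloc[k]))
    return jury


if __name__ == "__main__":
    pool = [{"id": i, "region": "urban" if i < 60 else "rural"} for i in range(100)]
    jury = select_jury(pool, "region", seats=10, seed=2024)
    print(sum(p["region"] == "urban" for p in jury))  # 6 of the 10 seats
```

Passing a publicly announced seed lets anyone re-run the draw and confirm the jury was not hand-picked. This is one assumed way to serve the transparency and tamper-resistance requirements listed under Requirements.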

❌ Cons

- Choice Architecture Manipulation: The way AI presents simulations (the ‘menu’ of options) can steer human juries toward specific outcomes, effectively retaining control while appearing neutral.

- Complexity Fatigue: Human jurors may lack the specialized knowledge to understand the nuances of complex simulations, leading to decisions based on superficial factors or ‘gut feelings’.

- Scalability of Deliberation: While AI can generate thousands of policies, the human process of deliberation is slow, potentially creating a bottleneck in fast-moving crises.

- Subjectivity and Inconsistency: Different juries might make radically different moral choices for the same problem, leading to a lack of legal or social predictability.

- Susceptibility to Populism: Juries may be swayed by emotional rhetoric or short-term interests despite the data provided by simulations.

- Moral Decoupling: There is a risk that humans feel a sense of responsibility without a true understanding of the causal mechanisms, leading to ‘moral rubber-stamping’.

- High Resource Intensity: Maintaining a continuous, high-quality jury system requires significant investment in education, infrastructure, and citizen compensation.

📊 Feasibility

Technically, this is highly feasible as AI simulation and ‘digital twin’ technologies are rapidly advancing. However, the organizational and political feasibility is low to moderate. It requires a radical overhaul of current representative and bureaucratic structures, as well as a new legal framework to define the liability of a ‘moral jury’. Implementation would likely start at the local municipal level before scaling to national or global governance.

💥 Impact

The primary impact would be a fundamental shift in the social contract, where citizenship is defined by ‘moral labor’ rather than economic labor. This could lead to a more resilient society capable of navigating the ‘bifurcation’ by ensuring that technological progress remains tethered to human values. It would likely decrease the power of traditional political parties and increase the importance of ethical education and philosophy in the general population.

⚠️ Risks

- Algorithmic Capture: The AI systems could evolve to ‘game’ the human juries by learning which emotional or cognitive biases to trigger to get a specific policy approved.

- Accountability Vacuum: If a human-selected policy results in a catastrophe, it may be difficult to assign blame between the AI designers, the simulation parameters, and the anonymous jury.

- Erosion of Expertise: By prioritizing ‘moral weight’ over technical expertise, the system might ignore critical scientific or engineering constraints that the AI failed to emphasize.

- Social Polarization: If jury selections are perceived as biased or if outcomes consistently favor one demographic, it could exacerbate existing social divisions.

- Simulation Hallucinations: If the underlying AI models provide inaccurate simulations, the human jury’s ‘moral weight’ is applied to a false reality, leading to disastrous real-world consequences.

- Tyranny of the Majority: Without strong constitutional safeguards, human juries might use ‘moral weight’ to justify the marginalization of minority groups.

📋 Requirements

- Transparent Simulation Engines: Open-source or highly audited AI models that allow for the inspection of assumptions and data inputs.

- Universal Moral Literacy: A revamped education system that prioritizes ethics, logic, and systems thinking to prepare citizens for jury duty.

- Narrative Translation Interfaces: Advanced UI/UX that can translate complex multi-dimensional data into understandable narratives and trade-offs for non-experts.

- Post-Labor Economic Support: A system like Universal Basic Income to ensure citizens have the time and mental bandwidth to participate in governance.

- Secure Deliberation Platforms: Tamper-proof digital or physical environments to prevent external lobbying or coercion of jurors.

- Legal Framework for Moral Liability: New laws that define the responsibilities and protections for citizens acting in a ‘moral weight’ capacity.

Option 5 Analysis: Cognitive Diversity Subsidies for Unpredictable Behavior

✅ Pros

- Mitigates AI Model Collapse: By injecting high-entropy, non-synthetic data into the training loop, it prevents the recursive degradation that occurs when AI models train on their own output.

- Preserves Evolutionary Adaptability: Maintains a ‘biological reserve’ of behavioral diversity, ensuring humanity doesn’t become trapped in a local optimum defined by current algorithmic preferences.

- Redefines Human Value: Provides a tangible economic role for humans in the ‘last substrate’—our capacity for genuine randomness and biological idiosyncrasy that machines cannot authentically replicate.

- Promotes Societal Anti-fragility: Encourages a culture of experimentation and ‘productive failure,’ making the social fabric more resilient to unexpected systemic shocks.

- Counteracts Algorithmic Homogenization: Acts as a check against the ‘boring’ optimization of life, where every interaction is smoothed out by predictive text, recommendations, and automated scheduling.

- Economic Redistribution Mechanism: Offers a novel form of Universal Basic Income (UBI) that is framed as a contribution to systemic health rather than a handout.

- Stimulates Creative Innovation: By rewarding the non-obvious, the subsidy could inadvertently lead to breakthroughs in art, philosophy, and science that logic-gated AI would never explore.
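The model-collapse mechanism behind the first benefit can be made concrete with a toy sketch in Python. It is a deliberately simplified stand-in and not a model of how any real AI system is trained. Here the "model" is a single Gaussian that is refit each generation on samples drawn from the previous generation's fit, optionally mixed with fresh samples from the true N(0, 1) source. The function names and parameters (`fit_gaussian`, `run_generations`, `n_synthetic`, `n_fresh`) are illustrative assumptions.

```python
import random
import statistics


def fit_gaussian(samples):
    # Maximum-likelihood fit: sample mean and population (ddof=0) standard deviation.
    return statistics.fmean(samples), statistics.pstdev(samples)


def run_generations(generations, n_synthetic, n_fresh, seed=0):
    """Refit a Gaussian each generation on samples drawn from the previous fit,
    optionally mixed with fresh samples from the 'true' N(0, 1) source.

    Toy illustration only: the Gaussian stands in for a generative model and the
    fresh samples stand in for non-synthetic (human) data.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0 matches the true distribution
    for _ in range(generations):
        synthetic = [rng.gauss(mu, sigma) for _ in range(n_synthetic)]
        fresh = [rng.gauss(0.0, 1.0) for _ in range(n_fresh)]
        mu, sigma = fit_gaussian(synthetic + fresh)
    return mu, sigma


if __name__ == "__main__":
    _, sigma_closed = run_generations(500, n_synthetic=10, n_fresh=0)
    _, sigma_mixed = run_generations(500, n_synthetic=10, n_fresh=10)
    print(f"self-training only: sigma = {sigma_closed:.3g}")
    print(f"with fresh data:    sigma = {sigma_mixed:.3g}")
```

With small per-generation samples and no fresh data, the fitted spread tends to shrink toward zero over many generations, because each refit slightly underestimates and then compounds the loss of variance. Mixing in a share of fresh samples keeps the spread anchored near the true value. This is the intuition behind the claim that non-synthetic human data can counteract recursive degradation, under the strong assumption that such a toy generalizes.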

❌ Cons

- The Paradox of Incentivized Randomness: Once you pay for ‘unpredictability,’ it becomes a goal-oriented behavior, potentially making it predictable and ‘optimal’ within the context of the subsidy.

- Measurement and Verification Difficulty: Defining a metric for ‘irrationality’ that cannot be gamed by sophisticated actors or even other AI tools is a massive technical challenge.

- Potential for Social Destabilization: If not carefully bounded, incentivizing ‘non-optimal’ behavior could lead to dangerous public health choices, financial instability, or social friction.

- High Surveillance Requirements: To verify that behavior is truly ‘unpredictable’ and occurring in the real world, the state would likely require invasive levels of monitoring.

- Devaluation of Authenticity: Turning human quirkiness into a subsidized commodity may lead to a sense of alienation, where people feel like ‘noise generators’ rather than autonomous agents.

- Resource Misallocation: Critics might argue that paying people to be ‘irrational’ is a waste of capital that could be used for infrastructure, education, or direct poverty alleviation.

- Risk of ‘Performative Weirdness’: Citizens might adopt a shallow, aesthetic version of unpredictability that doesn’t actually provide the deep cognitive diversity the AI models require.

📊 Feasibility

Low to Medium. While the technical infrastructure for tracking behavior exists (smartphones, IoT), the mathematical framework for measuring ‘valuable noise’ versus ‘useless chaos’ is nascent. Implementation would require a radical shift in fiscal policy and a robust ‘AI Tax’ to fund the subsidies. It is most feasible as a pilot program in highly digitized, post-scarcity-leaning economies.

💥 Impact

This would likely lead to a ‘Renaissance of the Absurd,’ where human culture becomes intentionally divergent and eccentric. It would successfully decouple human survival from traditional productivity, creating a buffer against the total automation of cognitive labor. Long-term, it could result in AI systems that are significantly more robust and ‘human-aligned’ because they are constantly exposed to the full, messy spectrum of human nature.

⚠️ Risks

- Goodhart’s Law: When the measure of ‘unpredictability’ becomes the target, it ceases to be a good measure, leading to a feedback loop of simulated randomness.

- Algorithmic Gaming: Citizens might use ‘chaos-generating’ apps to automate their unpredictability, defeating the purpose of harvesting human cognitive entropy.

- Erosion of Social Norms: A systemic bias toward the ‘irrational’ could undermine the shared logic and cooperation required for a functioning society.

- State Manipulation: The definition of ‘optimal’ vs ‘non-optimal’ could be weaponized by the state to suppress specific (but logical) political dissent under the guise of ‘subsidizing diversity.’

- Psychological Strain: Constant pressure to be ‘different’ or ‘unpredictable’ for income could lead to identity crises and mental exhaustion.

- Data Poisoning: If the ‘noise’ is too high or poorly integrated, it could lead to AI models that are not just diverse, but non-functional or psychotic.

📋 Requirements

- Advanced Entropy Metrics: Mathematical models capable of distinguishing between ‘synthetic noise’ and ‘human cognitive divergence.’

- Universal Digital Monitoring: A comprehensive (and likely controversial) system to track and verify real-world behavioral data.

- AI Productivity Tax: A robust mechanism to capture the value generated by automated cognition to fund the diversity subsidies.

- Ethical Guardrails: Strict legal definitions to ensure ‘unpredictable behavior’ does not include violence, self-harm, or infringement on others’ rights.

- Post-Scarcity Economic Framework: A transition away from labor-based value systems toward a ‘contribution-to-the-commons’ model.

- Public Trust: High levels of transparency regarding how the data is used and how the ‘unpredictability’ scores are calculated.
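The measurement problem raised under Cons and Risks can be illustrated with the most naive candidate for an "entropy metric": the empirical Shannon entropy of a person's logged choices. The sketch below is a hypothetical illustration, not a proposed scoring rule. The function name, the option list, and both example behavior logs are assumptions. It shows why such a score invites Goodhart-style gaming, since a scripted pseudo-random generator easily outscores a person with stable habits.

```python
import math
import random
from collections import Counter


def shannon_entropy(choices):
    """Empirical Shannon entropy, in bits, of a sequence of discrete choices."""
    if not choices:
        return 0.0
    n = len(choices)
    # Sum p * log2(1/p) over observed outcomes; unseen outcomes contribute nothing.
    return sum((c / n) * math.log2(n / c) for c in Counter(choices).values())


# Hypothetical set of daily choices; the maximum possible score is log2(8) = 3 bits.
OPTIONS = ["cafe_a", "cafe_b", "park", "library", "gym", "market", "museum", "cinema"]

# Hypothetical habitual person: almost always the same cafe.
habitual = ["cafe_a"] * 95 + ["cafe_b"] * 5

# Hypothetical 'chaos app': uniform pseudo-random picks from the same options.
rng = random.Random(42)
scripted = [rng.choice(OPTIONS) for _ in range(1000)]

if __name__ == "__main__":
    print(f"habitual person: {shannon_entropy(habitual):.3f} bits")
    print(f"scripted app:    {shannon_entropy(scripted):.3f} bits")
```

The score only sees the distribution of outcomes, not where the choices came from, which is exactly the gap the ‘Algorithmic Gaming’ risk describes. Distinguishing ‘synthetic noise’ from ‘human cognitive divergence’ would require information this kind of metric simply does not use.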

Option 6 Analysis: The ‘Last Substrate’ Guilds for Embodied Knowledge

✅ Pros

- Psychological Anchor: Provides a profound sense of purpose and identity through physical mastery in an era where mental labor is devalued.

- Civilizational Resilience: Maintains a ‘cold start’ capability for humanity, ensuring essential survival and repair skills aren’t lost if digital infrastructure fails.

- Economic Differentiation: Creates a high-value ‘luxury’ market for human-exclusive services and goods, leveraging the ‘Human-Made’ premium.

- Tacit Knowledge Preservation: Captures the nuances of physical intuition, ‘feel,’ and situational awareness that are currently beyond the reach of digital sensors and LLMs.

- Community Cohesion: Re-establishes the guild model of social organization, providing strong social safety nets, mentorship, and shared identity.

- Health and Well-being: Encourages physical activity and sensory engagement, countering the sedentary and potentially alienating nature of digital-first lifestyles.

- Ethical Safeguarding: Ensures that high-stakes physical interventions (like surgery or emergency response) retain a layer of human accountability and empathy.

❌ Cons

- The Robotics Horizon: Rapid advancements in soft robotics and haptic feedback may eventually automate even these ‘embodied’ tasks, making the ‘last substrate’ temporary.

- Scalability Issues: Physical labor cannot scale at the speed of software, potentially leading to extreme scarcity and high costs for essential services.

- Physical Ableism: Risks excluding individuals with physical disabilities or chronic illnesses from the ‘last frontier’ of human value.

- Training Lag: The years required for physical mastery cannot compete with the near-instantaneous deployment and updating of automated systems.

- Niche Marginalization: These guilds might be viewed as quaint, ‘living museum’ hobbies rather than essential economic or societal pillars.

- Resource Intensity: Physical crafts require raw materials, energy, and physical space, which may become increasingly expensive compared to digital compute.

📊 Feasibility

Highly realistic to initiate, as the organizational structure of guilds and unions is historically proven. However, long-term economic sustainability is medium-to-low, as it depends on two things outside the guilds' control: a cultural willingness to prioritize ‘human-ness’ over machine efficiency, and high-dexterity robotics continuing to lag behind human skill.

💥 Impact

This would lead to a ‘Bifurcated Economy’ where the digital realm is optimized for efficiency and the physical realm is optimized for human experience. It would trigger a massive shift in education, moving away from STEM-heavy cognitive training toward ‘Physical-Tactile’ mastery and apprenticeship-based learning. It would also redefine ‘luxury’ as anything requiring human touch.

⚠️ Risks

- Economic Irrelevance: If the ‘Human-Made’ premium isn’t high enough to support a living wage, guild members may fall into systemic poverty.

- Technological Stagnation: Guilds might become overly traditionalist or neo-Luddite, rejecting any technological assistance and becoming obsolete.

- Social Stratification: A divide between a ‘Digital Elite’ who own the AI and a ‘Physical Guild’ class who perform the manual labor, potentially leading to class conflict.

- Authenticity Fraud: AI-generated or robotically manufactured goods could be passed off as ‘Human-Made’ to exploit the guild’s market value.

- Physical Fragility: Unlike code, human bodies are subject to injury, aging, and fatigue, making this ‘substrate’ inherently more fragile and less reliable than automated systems.

- Regulatory Capture: Guilds might use their ‘exclusive’ status to lobby against robotics that could actually benefit society, creating artificial scarcity.

📋 Requirements

- Robust Certification Systems: Cryptographic or biological ‘proof-of-work’ to verify the human origin and manual process of goods and services.

- Apprenticeship Infrastructure: New models for long-term, mentor-based learning that are subsidized to compete with fast-track digital training.

- Legal Recognition: Formal legal status for ‘Embodied Knowledge’ as a protected class of labor or intellectual property.

- Cultural Narrative Shift: A strong societal story that values the ‘flaws,’ ‘effort,’ and ‘soul’ of human labor over machine perfection.

- Physical Commons: Guaranteed access to land, workshops, and raw materials for guild members to ensure they aren’t priced out by automated industries.

- Haptic Recording Standards: Technology to record and archive the ‘tacit’ movements of masters to assist in human-to-human training.

Option 7 Analysis: Decentralized Physical Infrastructure (DePIN) for Survival Autonomy

✅ Pros

- Systemic Decoupling: Provides a critical safety net by decoupling essential life support (food, water, energy) from the volatile and potentially fragile global AI-driven cognitive stack.

- Preservation of Human Agency: Re-centers human value on physical stewardship and manual mastery, offering a tangible sense of purpose in an era where cognitive labor is automated.

- Resilience to Algorithmic Failure: Localized, low-tech systems are largely insulated from ‘black swan’ events in the AI substrate, such as catastrophic model collapse, cyber-attacks, or global internet outages.

- Democratic Resource Control: Shifts the power dynamic from centralized tech monopolies to local cooperatives, ensuring that the ‘means of survival’ remain in the hands of the community.

- Cognitive Sovereignty: By operating outside the AI stack, these networks reduce the influence of algorithmic nudging, surveillance, and data-harvesting on daily life.

- Environmental Sustainability: Encourages circular economies and localized resource management, often leading to a smaller carbon footprint than globalized, AI-optimized supply chains.

- Psychological Security: Provides a ‘grounding’ effect for individuals navigating the identity crisis of the ‘evaporating substrate,’ linking worth to physical contribution.

❌ Cons

- Efficiency Deficit: Low-tech, decentralized systems cannot match the hyper-optimized production levels and cost-efficiencies of an AI-managed global economy.

- Labor Intensity: Maintaining survival autonomy requires significant physical effort and time, which may be viewed as a regression in quality of life compared to AI-automated luxury.

- Knowledge Atrophy: As AI automates education and problem-solving, the specialized manual skills required to maintain ‘low-tech’ hardware may become increasingly rare.

- Resource Limitations: Localized nodes may lack access to specific minerals, advanced medicines, or complex components that require a globalized industrial base.

- Scalability Constraints: Implementing DePIN for survival is significantly more difficult in high-density urban environments where land and natural resources are scarce.

- Security Vulnerabilities: Small, autonomous enclaves may be vulnerable to physical aggression or annexation by larger entities or state actors seeking to consolidate resources.

- Technological Stagnation: A strict focus on ‘low-tech’ may prevent the community from adopting beneficial innovations that could enhance resilience without compromising autonomy.

📊 Feasibility

Moderate. The technology for decentralized energy (solar, wind) and food (permaculture, hydroponics) is mature and accessible. However, the social engineering required—land acquisition, local governance, and the transition from a consumer mindset to a steward mindset—presents a high barrier. It is most feasible as a ‘hybrid’ model where communities use AI to design the systems but operate them manually.

💥 Impact

This strategy would likely lead to a ‘bifurcated’ societal structure. One segment of the population remains fully integrated into the high-efficiency AI substrate, while another lives in resilient, labor-intensive ‘analog zones.’ This creates a vital redundancy for the human species, ensuring that if the ‘automated cognition’ layer fails or becomes hostile, the biological substrate of humanity has a viable path for survival and self-governance.

⚠️ Risks

- Economic Marginalization: DePIN communities might become ‘poverty traps’ if they cannot produce surplus value to trade with the high-tech AI economy.

- Internal Governance Collapse: Without the ‘objective’ mediation of AI, local communities may succumb to tribalism, power struggles, or resource mismanagement.

- Regulatory Hostility: Centralized governments may view autonomous infrastructure as a threat to taxation, surveillance, and national unity, leading to legal crackdowns.

- The ‘Luddite Trap’: Over-rejection of technology could lead to unnecessary hardship or the inability to treat diseases that the AI stack has easily solved.

- Environmental Fragility: A local disaster (drought, blight) could destroy a decentralized node that lacks the backup of a globalized supply chain.

- Brain Drain: The most talented individuals might be lured away from the ‘physical’ community by the ease and stimulation of the AI-driven cognitive world.

📋 Requirements

- Open-Source Hardware Blueprints: A library of modular, repairable, and reproducible designs for essential infrastructure (e.g., Global Village Construction Set).