Andrew Charneski

Full-Stack Software Engineer, AI Architect & Researcher

📍 Westerville, OH (Remote) | ✉️ andrew@simiacryptus.com | 🌐 simiacrypt.us | GitHub | LinkedIn

Summary

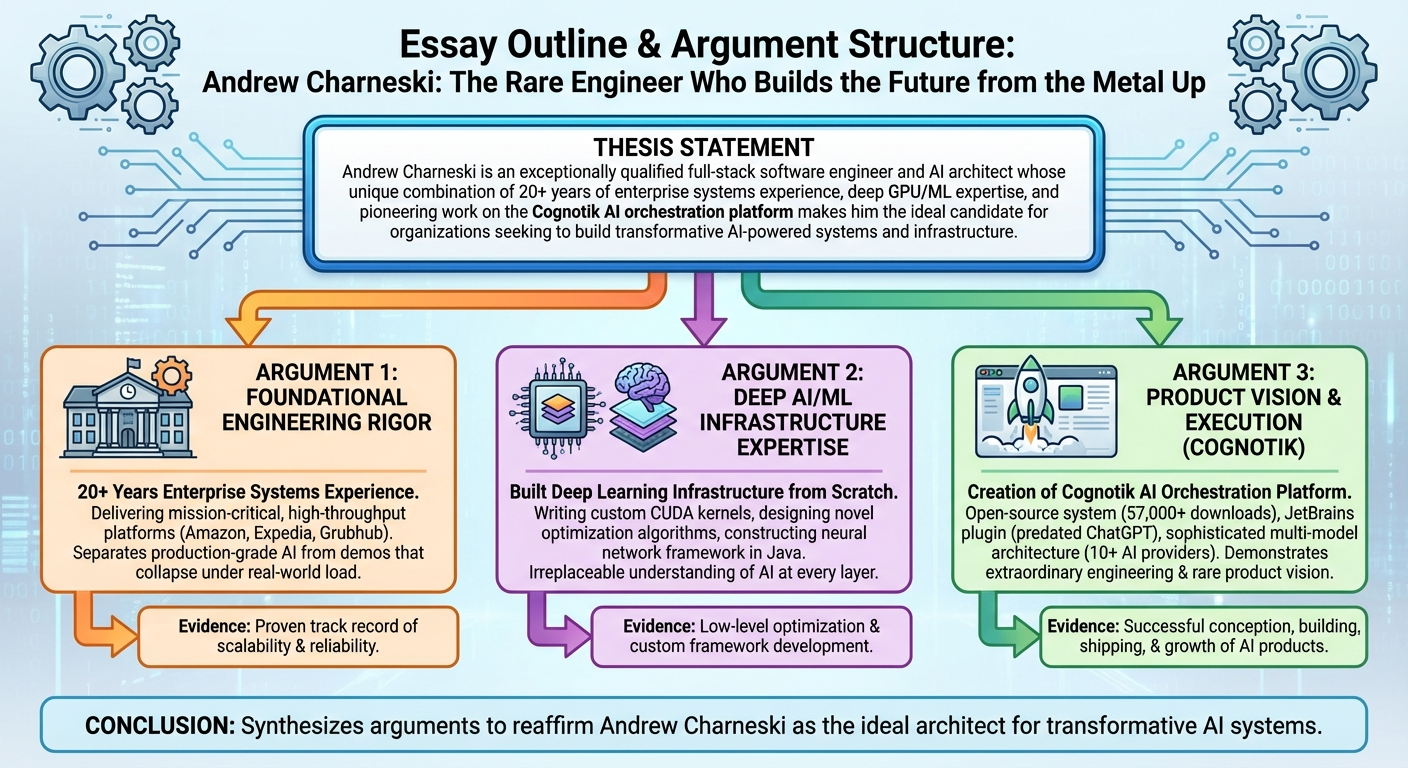

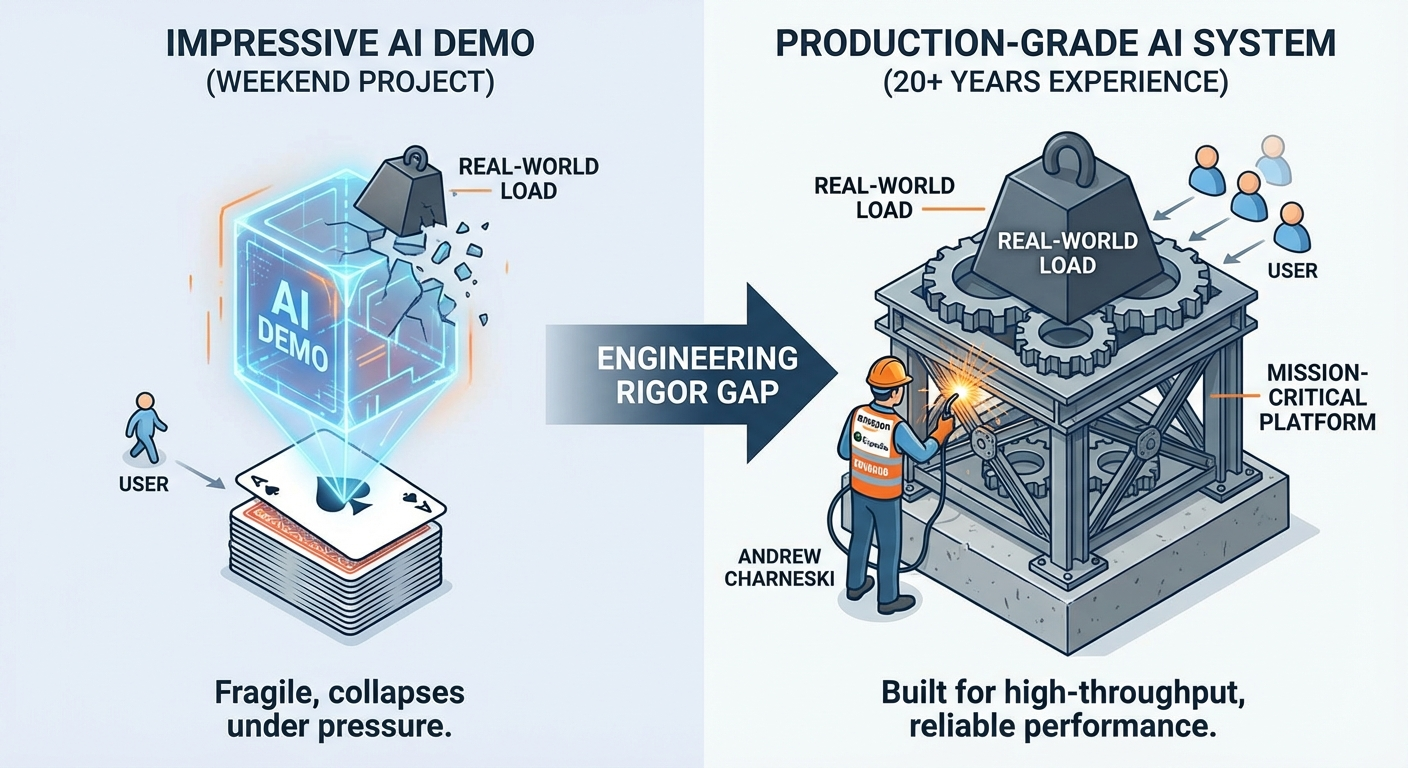

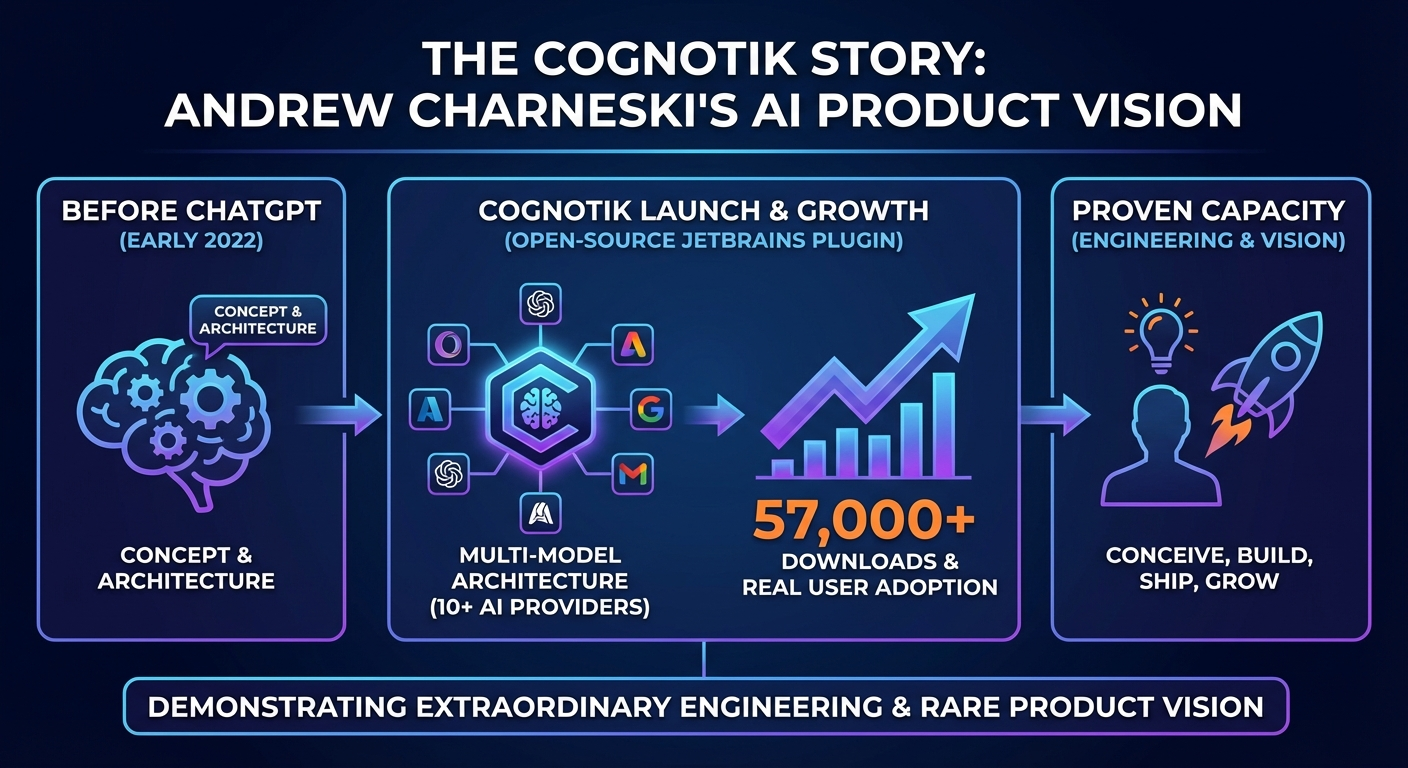

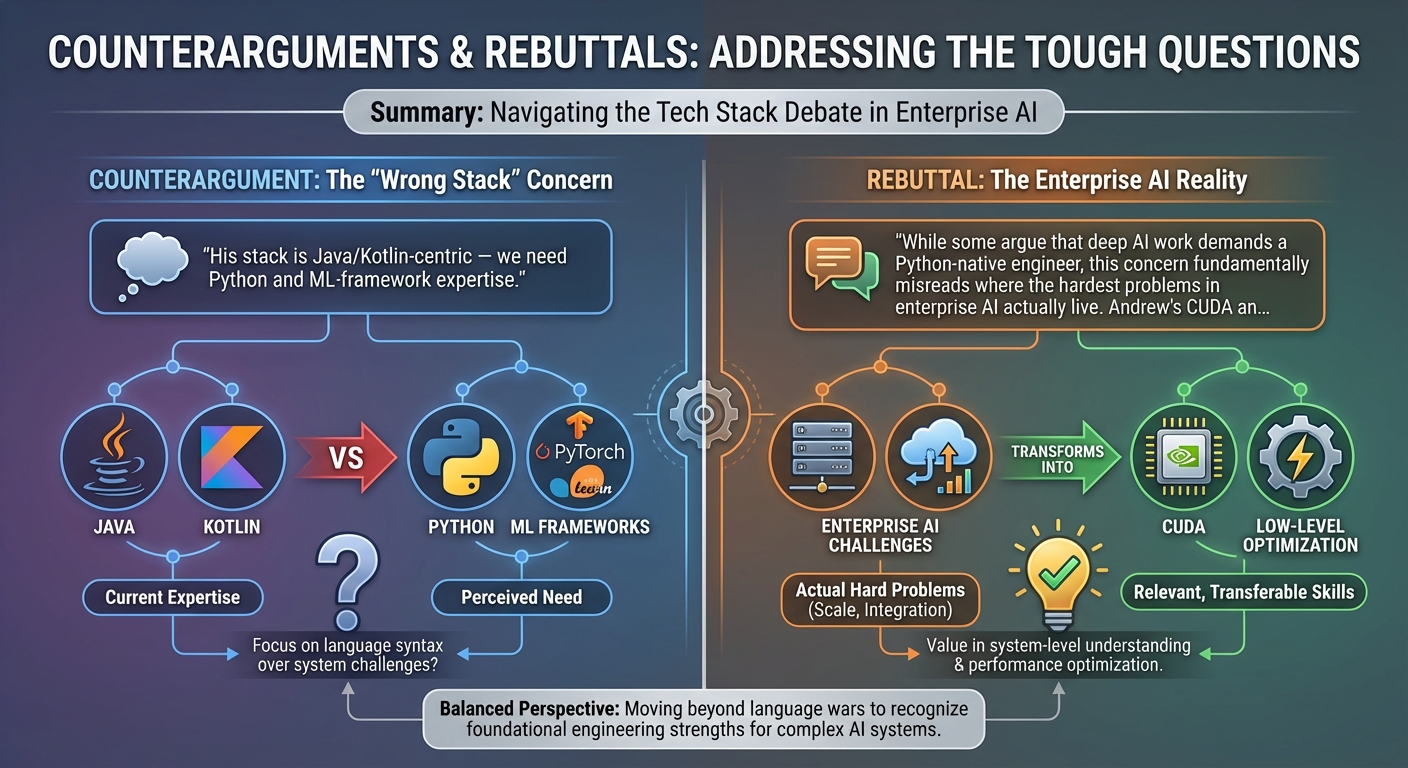

Full-Stack Software Engineer and AI Architect with 20+ years building scalable enterprise systems and 9+ years delivering AI/ML solutions. Expert in Java/Kotlin, Distributed Systems, and High-Performance Computing. Creator of the Cognotik open-source AI orchestration platform (57k+ downloads, early-market JetBrains plugin predating ChatGPT) and the MindsEye deep learning framework. Deep expertise from GPU programming (CUDA/CuDNN) and native interop (FFI/Project Panama) to cloud infrastructure (AWS/K8s) and AI-powered developer tools. Proven track record at Amazon, Expedia, and Grubhub delivering real-time systems (<5ms latency, 10k+ TPS), large-scale data pipelines, and platform infrastructure.

Core Competencies

- AI Product & LLM Orchestration: Creator of Cognotik platform (early-market JetBrains plugin, 57k+ downloads) integrating 10+ AI providers (OpenAI, Anthropic, Google, AWS Bedrock, Azure, Groq, Mistral, DeepSeek, Perplexity, local models). Expert in multi-model orchestration, context-aware planning, prompt engineering, declarative DocOps pipelines, and building self-healing agentic workflows with eight cognitive modes across three categories: Conversational (chat, persona, REPL), Planning & Execution (Waterfall, Adaptive, Hierarchical), and Advanced Orchestration (Council voting, Protocol state-machines, Parallel batch processing). Approximately 95% of the platform’s codebase is AI-generated with human review, and the platform maintains its own documentation and product site via its own DocProcessor pipeline.

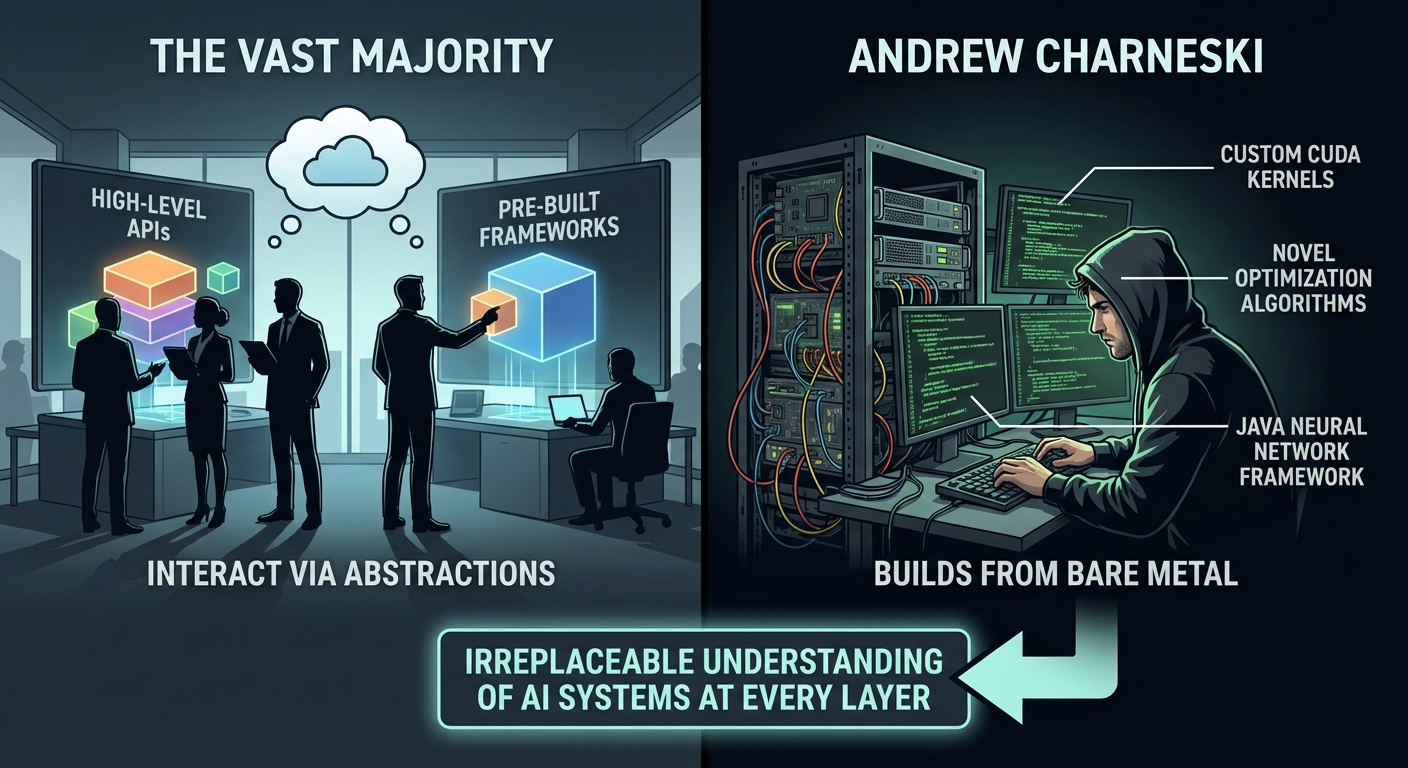

- GPU Computing & Deep Learning: Built MindsEye framework from scratch in Java with custom CUDA/CuDNN integration via FFI/JNI. Expert in hybrid memory management, geometric transformations, and novel optimization algorithms (QQN/RSO).

- Enterprise Software & Microservices: 20+ years architecting robust backends using Java, Kotlin, and Spring Boot. Expert in decomposing monoliths, API design, and ensuring high availability in distributed environments.

- MLOps & Infrastructure: Extensive experience designing production ML platforms on AWS and Kubernetes. Proficient in Docker, CI/CD (Jenkins/GitLab), and orchestration tools (Azkaban, Oozie).

- AI-Powered Content & DocOps: Creator of the Fractal Thought Engine — an AI-powered publishing system using declarative operator pipelines to transform raw notes into multi-modal publications (articles, comics, game theory analyses, Socratic dialogues). Pioneer of ‘Content-as-Code’ and ‘Compliance-as-Code’ methodologies.

- Real-Time Systems & Performance: Deep expertise in low-latency systems (10k+ TPS, <5ms). Proven ability to optimize JVM performance, reduce resource consumption by 90%, and implement real-time anomaly detection.

- Data Engineering & Database: Expert in SQL (PostgreSQL, MySQL), schema design, and distributed data processing (Spark, Hadoop, Hive). Experience managing petabyte-scale data pipelines.

- Observability & Reliability: Advanced skills in monitoring (Splunk, Datadog), automated canary analysis, distributed tracing, and building self-service diagnostic tools.

Experience

Chemical Abstracts Service (CAS)

Software Consultant - Data Engineering | Columbus, OH (Hybrid) | Jan 2026 – Present

Technologies: Java, Apache Spark 4, Hadoop, Cascading, Generative AI, LLM Orchestration, Python

- Legacy Migration: Migrating complex data flows from legacy Cascading/Hadoop pipelines into a modern Spark 4-based application, ensuring data integrity and performance parity throughout the transition.

- AI-Powered Code Migration: Constructing an automated AI coding pipeline to accelerate the migration process, leveraging LLM-based code generation and transformation to convert legacy Cascading workflows into idiomatic Spark 4 code.

- Data Engineering: Working with large-scale scientific and chemical data processing workflows, optimizing Spark jobs for throughput and reliability.

Simia Cryptus (Self-Employed)

Independent Consultant & AI Researcher | Westerville, OH | Aug 2025 – Dec 2025

Technologies: Kotlin, Rust, TypeScript, React, Generative AI, Agentic Workflows, LLM Orchestration, Jekyll, DocOps

- R&D Sabbatical: Intentional period after Grubhub dedicated to personal life, portfolio development, and independent research, extended by a hand injury and a challenging job market.

- Cognotik AI Platform Polish: Continued refinement of the Cognotik open-source AI orchestration platform (a long-running hobby project predating this period), expanding multi-LLM provider support and refining the declarative DocProcessor engine. The original JetBrains Marketplace plugin (“AI Coding Assistant”) was an early-market entrant predating the post-ChatGPT explosion, accumulating 57k+ downloads.

- QQN Research & Publication: Authored and published the QQN (Quadratic Quasi-Newton) formal academic research paper (DOI: 10.13140/RG.2.2.15200.19206), including a comprehensive Rust benchmarking framework achieving a 72.6% benchmark win rate. Published as a ResearchGate preprint.

- Fractal Thought Engine: Built and demonstrated the Fractal Thought Engine — an AI-powered publishing system using declarative operator pipelines to transform raw notes into multi-modal publications (articles, comics, game theory analyses, Socratic dialogues).

- Platform Demos & Evangelism: Created comprehensive demonstration suite (CognotikDemo) showcasing real-world agentic AI workflows including package documentation generation, multi-stage research pipelines, and self-bootstrapping codebases.

Grubhub

Senior Software Engineer - Data Platform Infrastructure | Remote/Westerville, OH | Oct 2018 – July 2025

Technologies: Kotlin, Java, Spring Boot, React, TypeScript, Python, PySpark, AWS, Kubernetes, Docker, Azkaban, Apache Ranger, Splunk, Datadog, PostgreSQL

- Data Platform Infrastructure: Served as cross-functional support engineer for the data organization, providing hands-on troubleshooting, optimization guidance, and technical education to data scientists and analysts across multiple teams. Maintained and optimized infrastructure spanning dozens of data clusters running PySpark workflows on Azkaban. Maintained custom builds of core open-source platforms (Apache Ranger, Azkaban) with patches contributed back to the community.

- Performance Optimization: Led deep performance analysis of mission-critical JVM applications including Apache Hive, Apache Ranger, and Azkaban. Achieved significant CPU/memory reductions through advanced profiling, GC tuning, and algorithmic optimization.

- High-Performance Java & FFI: Leveraged Java 21’s Project Panama (FFI) to build direct bindings to native SSL/SSH libraries, resolving critical connectivity failures during an Ubuntu infrastructure upgrade when standard Java libraries failed.

- Deployment Orchestration: Designed zero-downtime multi-stage deployment platform with automated canary analysis, rollback capabilities, and comprehensive audit trails. Developed novel deployment methods enabling reliable, non-disruptive upgrades for critical services.

- Observability: Designed Datadog dashboards and Splunk diagnostic queries for deep system observability. Built custom tools for latency tracking, throughput analysis, and automated error logging.

- Generative AI & Developer Tools (Self-Initiated): Architected agentic AI systems using LLMs for automated troubleshooting with declarative document-driven orchestration. Built full-stack AI-powered developer tools (React/TypeScript + Kotlin/Spring) for analyzing build failures, reducing Mean Time To Resolution (MTTR). Applied multi-model orchestration patterns (different models for planning, code generation, and summarization). Demonstrated technical initiative and leadership by piloting AI-augmented workflows ahead of organizational adoption.

- Vendor & Architecture Review: Evaluated a pilot program with a commercial Apache Ranger vendor, providing technical assessment and recommendation (declined). Participated in formal design reviews and contributed architectural proposals for deployment orchestration and infrastructure tooling.

- Incident Response & Operational Readiness: Participated in on-call rotations, incident response, and post-mortem processes for data platform infrastructure. Contributed to preparing and reviewing operational response documentation.

Expedia Inc

Software Consultant - Data Engineering | Seattle, WA | Oct 2014 – Oct 2018

Technologies: Scala, Java, AWS, Apache Spark, Hadoop, Hive, Redis, Apache Storm, Qubole, Docker

- Real-Time Data Services: Architected high-performance ads targeting system achieving TP95 <5ms latency at ~10k TPS using Scala, Redis, and Apache Storm.

- Cloud Migration: Led migration of big data infrastructure (~15-node Hadoop cluster) from on-premise to AWS/Qubole. Optimized Spark/Hive pipelines for cost and performance.

- Open Source Customization: Maintained a custom build of Apache Oozie featuring internal management tools to support data engineering workflows.

- Infrastructure Optimization: Reduced infrastructure costs and data processing time through profiling and targeted optimization.

- Technical Leadership: Led a team of 5 developers, establishing coding standards and best practices for high-performance distributed systems.

Amazon.com

Technical Consulting | Seattle, WA | Nov 2016 – Feb 2017

Technologies: Java, Spring

- Web Service Productionalization: Led the productionalization of a prototype Java web service for decision support and automation.

HBO Code Labs

Senior Software Engineer | Seattle, WA | Dec 2013 – Sep 2014

Technologies: Java, Spring Framework, Scala, Eclipse AST, Performance Tuning

- Performance Engineering: Refactored large-scale Spring web services, reducing CPU and memory load by 90%. Root-caused a critical bug in a custom gzip decompression loop that pegged threads at 100% CPU on errant HTTP sessions — the organization had been masking the issue with continuous rolling restarts (~30-minute server lifetimes). Fixing this single bug restored cache effectiveness and eliminated the need for constant restarts.

- Developer Tooling: Developed static analysis tools based on Eclipse’s Java AST to enforce coding standards (parameter sanitization, transaction management, caching) and facilitate large-scale refactoring.

Various (Consulting)

Technical Consulting | Seattle, WA | April 2011 – Nov 2013

Technologies: Java, C, Android, ffmpeg, Hibernate, Cassandra, Thrift

- Plugged-In Technologies: Created a cross-platform video conferencing app (Android, Windows, Mac) and media server backend for video streaming, authentication, and session management using Java/C.

- Big Fish Games: Developed desktop/browser and Android video game streaming clients using Java, JNA, and libffmpeg.

- Serials Solutions: Implemented new Java data services based on Hibernate, Cassandra, and Thrift.

Distributed Energy Management

Team Lead and Architect | Bremerton, WA | 2010 – 2011

Technologies: Java, Python, Berkeley DB

- Team Leadership & Architecture: Led a team of six and designed a high-performance data service and analytics platform for time series data using Java, Python/Jython, and Berkeley DB.

Marchex

Senior SDE | Seattle, WA | 2009

Technologies: MySQL, GWT, Java

- Database & Web Development: Designed a MySQL partitioning service and maintained a GWT web application.

Amazon.com

SDE II - Website Platform | Seattle, WA | 2007 – 2009

Technologies: C++, C, Java, Perl, AWS, SQL, Distributed Systems

- Real-Time Security AI: Developed DDoS detection and response systems processing millions of requests per minute using ML for pattern recognition.

- High Availability: Built distributed services ensuring 24/7 availability for critical infrastructure and payments data.

- Systems Programming: Developed Apache httpd C modules for routing and security.

Aristocrat Technologies, Inc

Software Engineer | Las Vegas, NV | 2005 – 2007

Technologies: C#, .NET

- Gaming Industry Applications: Developed C# .NET commercial business applications for the gaming industry.

Skills

Programming Languages

| Language | Level | Years | Details |
|---|---|---|---|
| Java (8+) & Kotlin | Expert | 20 | Core, Concurrency, JVM Tuning, Spring Boot, FFI/Project Panama (HPC) |
| Python | Proficient | 10 | PySpark, Scripting, ML ecosystem familiarity. Primary language of supported teams at Grubhub. |
| JavaScript | Advanced | 15 | Long-standing secondary skill for web UIs, utilities, and lightweight tooling |
| TypeScript | Advanced | 7 | React, Node.js, Cognotik web interface. Preferred for production-scale frontend work. |
| C / C++ | Proficient | 20 | Systems Programming, CUDA, Performance. Primary language in early career; long-standing secondary skill for native bindings and GPU work. |
| Scala | Advanced | 8 | Spark, Functional Programming |
| Rust | Intermediate | 2 | QQN Optimizer benchmarking framework. Prior experience with custom ownership-based memory management in Java (MindsEye) and C++ provided strong conceptual foundation. |

AI & Machine Learning

- Generative AI & LLMs: Multi-model orchestration, RAG, Agentic Workflows, Prompt Engineering, Context Management

- Deep Learning Frameworks: Custom Frameworks (MindsEye). Familiarity with PyTorch and TensorFlow concepts; primary deep learning experience is through MindsEye (Java/CUDA).

- Computer Vision: Neural Style Transfer, Image Generation, Geometric Transformations

- GPU Computing: CUDA, CuDNN, OpenCL, Kernel Optimization, Memory Management

- Optimization Algorithms: Quasi-Newton methods, Gradient Descent, Custom Loss Functions

- Agentic AI & DocOps: Declarative document-driven AI orchestration, multi-step task planning, cognitive mode selection, self-healing workflows, Content-as-Code pipelines

Infrastructure & Cloud

- AWS (Expert, 12 years): EC2, S3, Lambda, ECS, EMR, SageMaker, IAM

- Containerization: Docker, Kubernetes (Usage & Troubleshooting)

- Big Data: Apache Spark, Hadoop, Hive, PySpark, Qubole

- Databases: PostgreSQL, MySQL, Redis, Elasticsearch, Vector Databases

DevOps & Tools

- CI/CD & Build: Gradle, Maven, Jenkins, Git, GitHub Actions, DocProcessor (AI-powered build pipelines)

- Observability: Splunk, Datadog, Prometheus, Grafana

- Orchestration: Azkaban, Oozie, Airflow concepts, Cognotik DocProcessor (declarative AI task orchestration)

Projects

Cognotik AI Platform | GitHub

Open-source AI-powered development platform distributed as cross-platform desktop app, JetBrains IDE plugin (57k+ downloads, early-market entrant predating ChatGPT), and React/TypeScript web interface. Built on a declarative DocProcessor engine (Markdown + YAML frontmatter) that orchestrates AI tasks as a build system. Supports Agentic Workflows, RAG, multi-LLM orchestration across 10+ providers (BYOK model), eight cognitive modes across three categories (Conversational, Planning & Execution, Advanced Orchestration), and 15+ specialized task types. Approximately 95% of the codebase is AI-generated with human review and automated demo-based testing. The platform bootstraps its own documentation and product pages using its own DocProcessor pipeline. The React frontend features moderate complexity with real-time server-driven UI via HTML snippets over WebSocket.

Technologies: Kotlin, TypeScript, React, Generative AI, Agentic Workflows, LLM Orchestration, RAG, PostgreSQL, JetBrains Platform, WebSocket, Docker, YAML, Markdown

Fractal Thought Engine | GitHub

AI-powered research platform and publishing system using a declarative operator pipeline (DocOps) to transform raw notes into multi-modal publications: articles, comics, Socratic dialogues, game theory analyses, and state machine diagrams. Features circular feedback loops where analytical operators evaluate content against multiple cognitive frameworks, and a Jekyll-based frontend with automatic format detection and tabbed interfaces.

Technologies: Jekyll, Markdown, YAML, Generative AI, Agentic Workflows, DocOps, Multi-Modal Content Generation

MindsEye Neural Network Framework

Comprehensive Java deep learning library built from scratch with CUDA/CuDNN integration (predating TensorFlow’s first release). Architected a custom ownership-based memory management system using AST-based static analysis to enforce safety. Achieved 10x performance improvement by bypassing GC for GPU buffers.

Technologies: Java, CUDA, CuDNN, OpenCL, Spark

MailDB

Comprehensive email database system with AI-powered summarization, full-text search, and .mbox import tools.

Technologies: Java, H2 Database, REST API, AI Integration

SimiaCryptus Chess

Advanced online chess platform featuring real-time multiplayer, variant gameplay (Hexagonal), and WebGL graphics using React and TypeScript.

Technologies: JavaScript, WebGL, Node.js, Real-time Systems

HTML Tools Suite | GitHub

Client-side developer toolkit featuring secure encryption tools, package upgraders, and data transformation utilities.

Technologies: JavaScript, Web Crypto API, PWA

reSTM

Distributed transactional memory prototype with MVCC, achieving ACID guarantees in scalable distributed systems.

Technologies: Java, Distributed Systems, Concurrency

Publications

- QQN: Quadratic Quasi-Newton Optimization — Formal academic research paper presenting a novel optimization algorithm bridging first/second-order methods with 72.6% benchmark win rate. Includes comprehensive Rust benchmarking framework. Published as preprint via ResearchGate (DOI: 10.13140/RG.2.2.15200.19206).

- Cognotik AI Platform - Demo Videos & Presentations (2022-Present) — YouTube channel featuring comprehensive demonstrations and presentations of practical agentic AI applications. Showcases real-world use cases and platform capabilities.

- Cognotik Demos: AI-Powered Workflows in Action (2025) — Comprehensive demonstration suite showcasing Cognotik’s declarative AI orchestration: Package README Generator, Puppy Research Workflow, Software Factory, Fractal Thought Engine integration, and Bootstrapping. Illustrates the ‘Makefile for AI’ paradigm and the shift from generative toil to evaluative toil.

- Test-Driven Development for Neural Networks — Methodology for applying TDD principles, gradient validation, and A/B testing to neural network development.

- Geometric Symmetry in Deep Texture Generation — Breakthrough research in neural art achieving perfect mathematical symmetry through kaleidoscopic preprocessing.

- Fractal Thought Engine — Personal blog and AI-powered publishing platform featuring ideas elaborated through multi-modal cognitive lenses — dialectical reasoning, game theory, Socratic dialogue, and computational modeling — using the Fractal Thought Engine’s declarative operator pipeline.

- Volumetry: Multidimensional Probability Modeling — Research on modeling complex multidimensional distributions (including fractals) using gaussian kernels, PCA transforms, and decision trees.

- Modeling Network Latency: Statistical analysis of network latency distributions in distributed systems, comparing various parametric forms against an experimental dataset.

Education

University of Illinois at Urbana-Champaign

Bachelor of Engineering in Physics | Minor in Mathematics

- Strong foundation in mathematical modeling, numerical methods, and computational science

- Research assistant developing computational labs for Nonlinear Dynamics

Brainstorming Session Transcript

Input Files: content.md

Problem Statement: Given Andrew Charneski’s extensive background spanning 20+ years in full-stack engineering, AI/ML research (Cognotik platform, MindsEye framework, QQN optimizer), GPU computing, distributed systems, and AI-powered content generation (Fractal Thought Engine), brainstorm a wide range of ideas for: (1) new products, tools, or services he could build or offer, (2) novel research directions extending his existing work, (3) creative applications combining his diverse skill sets in unexpected ways, (4) career positioning and market opportunities, (5) open-source community and ecosystem plays, (6) content and thought leadership strategies, and (7) unconventional moonshot ideas that leverage his unique combination of deep systems engineering + AI orchestration + creative content generation expertise.

Started: 2026-02-28 20:34:20

Generated Options

1. GPU-Native AI Agent Orchestration Platform as Service

Category: Product & Tool Ideas

Build a commercial platform that combines MindsEye’s vision capabilities with distributed GPU computing to offer AI agent orchestration as a managed service. Enterprises could deploy multi-modal AI pipelines that dynamically allocate GPU resources across tasks like code generation, image reasoning, and document analysis. This directly extends Cognotik’s platform architecture while targeting the exploding AI infrastructure market. Flagged as most promising near-term opportunity given current enterprise demand for managed AI orchestration.

2. QQN Optimizer Applied to LLM Fine-Tuning Efficiency

Category: Research Extensions

Extend the Quadratic Quasi-Newton (QQN) optimizer research to tackle the specific challenge of parameter-efficient fine-tuning of large language models, where second-order optimization insights could dramatically reduce compute costs. Publish benchmarks comparing QQN against AdamW on LoRA/QLoRA training runs across standard LLM benchmarks, with the goal of showing QQN outperforming it. This positions existing novel research directly in the hottest area of ML optimization and could attract significant academic and industry attention.

3. Fractal Thought Engine for Procedural World Generation

Category: Creative Cross-Pollination

Repurpose the Fractal Thought Engine’s recursive content generation architecture to create infinite, coherent procedural game worlds and interactive narratives that maintain long-range consistency. By combining AI-driven content generation with GPU-accelerated rendering pipelines, this could produce explorable 3D environments where story, terrain, and NPC behavior emerge from fractal-like generative processes. Flagged as most surprising cross-pollination idea — it merges creative AI with systems engineering in the gaming/metaverse space.

4. Fractional CTO for AI-Native Startups Portfolio

Category: Career & Market Positioning

Offer fractional CTO / principal architect services specifically to early-stage startups building AI-native products, leveraging 20+ years of full-stack and distributed systems expertise combined with deep ML knowledge. This creates a portfolio approach where equity stakes across 4-6 companies compound value while each engagement is part-time. The rare combination of production systems engineering and AI research depth commands premium positioning in a market flooded with ML-only or infra-only advisors.

5. Open-Source MindsEye as Composable Vision AI Framework

Category: Open Source & Ecosystem

Release MindsEye as a fully open-source, modular computer vision framework with a plugin architecture that lets developers compose custom vision pipelines from interchangeable components (feature extractors, optimizers like QQN, rendering backends). Build community around it by providing GPU-optimized reference implementations and integration with popular ML ecosystems like HuggingFace and PyTorch. Monetize through enterprise support, hosted compute, and a marketplace for contributed modules.

6. Technical Blog Series: Building AI Systems That Actually Scale

Category: Content & Thought Leadership

Launch a high-signal technical blog and newsletter series documenting hard-won lessons from building production AI systems — covering GPU memory management, distributed training pitfalls, optimizer design decisions, and the architecture of real AI platforms. Position content at the intersection of systems engineering and ML that few practitioners can credibly occupy. This builds personal brand authority and creates a funnel for consulting, hiring, and product launches.

7. Self-Evolving Codebase: AI That Refactors Its Own Infrastructure

Category: Moonshot / Unconventional Ideas

Build a moonshot system where AI agents, orchestrated through Cognotik-style pipelines, continuously analyze, refactor, optimize, and extend their own underlying infrastructure code — essentially a self-improving software organism. Leverage deep systems engineering knowledge to create safe sandboxed execution environments where AI-generated code changes are tested, benchmarked on GPU workloads, and promoted automatically. This is a concrete step toward recursive self-improvement with practical engineering guardrails.
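The "tested, benchmarked, and promoted automatically" step could be gated by a rule along these lines. This is only a minimal sketch: `CandidateResult`, `should_promote`, and the 2% slowdown tolerance are hypothetical names and values chosen for illustration, not part of Cognotik.

```python
from dataclasses import dataclass


@dataclass
class CandidateResult:
    """Outcome of running one AI-generated change in a sandbox (hypothetical shape)."""
    tests_passed: bool
    baseline_ms: float   # benchmark time of the current code
    candidate_ms: float  # benchmark time with the proposed change applied


def should_promote(result: CandidateResult, max_slowdown: float = 0.02) -> bool:
    """Promote only if tests pass and the change is at most max_slowdown slower."""
    if not result.tests_passed:
        return False
    return result.candidate_ms <= result.baseline_ms * (1.0 + max_slowdown)


faster = should_promote(CandidateResult(True, baseline_ms=100.0, candidate_ms=90.0))
slower = should_promote(CandidateResult(True, baseline_ms=100.0, candidate_ms=110.0))
broken = should_promote(CandidateResult(False, baseline_ms=100.0, candidate_ms=50.0))
print(faster, slower, broken)
```

A real guardrail would likely also require repeated benchmark runs and human sign-off for high-risk changes; the sketch shows only the basic accept/reject decision.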

8. AI-Powered Technical Due Diligence Automation Tool

Category: Product & Tool Ideas

Create a product that uses LLM-based analysis pipelines to automate technical due diligence for VC firms and acquirers — scanning codebases, architecture documents, and infrastructure configs to generate risk assessments, scalability scores, and technical debt reports. This uniquely combines full-stack engineering judgment (encoded as evaluation heuristics) with AI orchestration capabilities. The tool addresses a real market pain point where technical DD is expensive, slow, and inconsistent.

9. Distributed GPU Marketplace with Intelligent Job Scheduling

Category: Product & Tool Ideas

Build a decentralized marketplace where GPU owners contribute compute and AI workloads are intelligently scheduled across heterogeneous hardware using optimization algorithms derived from QQN research. Unlike existing GPU clouds, the scheduler would understand ML workload characteristics (memory patterns, parallelism profiles) to optimally match jobs to hardware. This extends distributed systems expertise into the GPU compute shortage economy with a differentiated technical moat.
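One simple way to picture matching jobs to heterogeneous hardware is a best-fit heuristic on memory alone. The sketch below is an illustrative baseline, not the QQN-derived scheduler the idea proposes; the `Gpu` and `Job` types, the device names, and the memory figures are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Gpu:
    name: str
    free_mem_gb: float


@dataclass
class Job:
    name: str
    mem_gb: float


def best_fit_schedule(jobs, gpus):
    """Assign each job to the GPU with the least free memory that still fits it.

    Mutates the free_mem_gb of the GPUs it places jobs on.
    """
    placement = {}
    # Place the largest jobs first so small jobs do not fragment big devices.
    for job in sorted(jobs, key=lambda j: j.mem_gb, reverse=True):
        candidates = [g for g in gpus if g.free_mem_gb >= job.mem_gb]
        if not candidates:
            placement[job.name] = None  # nothing can host this job right now
            continue
        target = min(candidates, key=lambda g: g.free_mem_gb)
        target.free_mem_gb -= job.mem_gb
        placement[job.name] = target.name
    return placement


# Hypothetical devices and workloads, for illustration only.
gpus = [Gpu("a100-80", 80.0), Gpu("rtx4090-24", 24.0)]
jobs = [Job("lora-finetune", 40.0), Job("embedding-batch", 10.0), Job("vision-infer", 20.0)]
placement = best_fit_schedule(jobs, gpus)
print(placement)
```

A workload-aware scheduler would extend the fit test with the other characteristics mentioned above, such as parallelism profile and memory access pattern, rather than capacity alone.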

10. Live-Coding AI Systems Streams with Research Commentary

Category: Content & Thought Leadership

Launch a live-streaming series where Andrew builds real AI systems in public — implementing optimizers, debugging GPU kernels, architecting distributed pipelines — while providing research-level commentary on design decisions. This format is extremely rare (most AI content is either tutorial-level or paper-reading) and would attract a dedicated audience of senior engineers and researchers. Clips become viral technical content, and the format naturally showcases expertise for business development.

Option 1 Analysis: GPU-Native AI Agent Orchestration Platform as Service

✅ Pros

- Directly leverages Andrew’s deepest technical strengths — GPU computing, distributed systems, and AI orchestration — meaning minimal ramp-up time on core technology and a genuine competitive moat rooted in hard-won expertise.

- Targets a market experiencing explosive growth: enterprise AI infrastructure spending is projected to exceed $300B by 2027, and managed AI orchestration is one of the fastest-growing segments within that. Timing is excellent.

- Builds naturally on existing assets (Cognotik platform architecture, MindsEye framework) rather than starting from scratch, providing a significant head start over someone entering this space cold. The Cognotik codebase could serve as the foundational layer.

- Multi-modal pipeline orchestration (vision + code + document analysis) is a genuine differentiator. Most current orchestration platforms (LangChain, CrewAI, etc.) are text-centric and treat GPU allocation as an afterthought. A GPU-native approach that intelligently schedules heterogeneous workloads across GPU resources is a meaningful technical moat.

- Enterprise customers have strong willingness to pay for managed services that reduce operational complexity around GPU infrastructure — this is a high-margin, recurring-revenue business model (SaaS/PaaS) with strong unit economics once established.

- The ‘agent orchestration’ framing is extremely timely — 2024-2025 is the inflection point where enterprises are moving from single-model API calls to complex multi-agent workflows, and most are struggling with the infrastructure layer. Andrew would be entering at the right moment in the adoption curve.

- Dynamic GPU resource allocation across heterogeneous AI tasks is a genuinely hard systems engineering problem that most AI-focused startups lack the depth to solve well. Andrew’s 20+ years of systems engineering gives him a credible advantage in building something robust rather than a thin wrapper.

❌ Cons

- Extremely competitive landscape: AWS Bedrock, Azure AI Studio, Google Vertex AI, and well-funded startups like Anyscale, Modal, Replicate, and Together AI are all attacking adjacent or overlapping spaces with hundreds of millions in funding and large engineering teams.

- Building a production-grade managed service requires significant operational investment beyond the core technology — SLAs, monitoring, security compliance (SOC 2, HIPAA), customer support, billing infrastructure — which is a massive undertaking for a solo founder or small team.

- GPU infrastructure costs are enormous. Running a managed GPU service requires either massive upfront capital for hardware or significant cloud spend margins that eat into profitability. The capital requirements could be prohibitive without venture funding.

- Enterprise sales cycles are long (6-18 months) and require dedicated sales/BD resources, proof-of-concept engagements, and security reviews. This is a very different motion than building great technology, and it’s where many technically excellent founders struggle.

- The ‘AI orchestration’ space is evolving so rapidly that architectural decisions made today could be obsoleted within 12-18 months by shifts in model capabilities (e.g., models that natively handle multi-modal tasks may reduce the need for complex orchestration pipelines).

- Risk of being perceived as ‘yet another AI platform’ in an increasingly crowded market, making differentiation and marketing messaging critical challenges that require non-technical investment.

📊 Feasibility

Moderately feasible as a technical build, but challenging as a commercial venture. Andrew has the core technical skills to build a compelling prototype and even an MVP — the Cognotik platform and MindsEye framework provide real foundational assets. A focused MVP targeting a specific vertical (e.g., GPU-native document processing pipelines for legal/financial firms) could be built in 3-6 months. However, scaling to a production managed service with enterprise-grade reliability, security, and support requires either significant funding ($2-5M seed minimum) or a very lean approach starting with a developer-tools/API-first model. The biggest feasibility constraint is not technical but operational and financial: competing in managed infrastructure requires capital, a team, and go-to-market resources that are hard to bootstrap as a solo operator. A more feasible path might be to start as an open-source orchestration framework with a commercial managed tier, reducing initial capital needs while building community traction.

💥 Impact

If successful, this could position Andrew at the center of the enterprise AI infrastructure stack — a position with enormous commercial value and influence. A well-executed GPU-native orchestration platform could capture significant market share in the mid-market enterprise segment (companies too large for simple API calls but not large enough to build custom infrastructure). Revenue potential is substantial: even modest traction (50-100 enterprise customers) at typical AI infrastructure pricing ($5K-50K/month) would represent $3-60M ARR. Beyond direct revenue, the platform would establish Andrew as a recognized authority in AI infrastructure, opening doors to advisory roles, speaking engagements, acquisition interest, and strategic partnerships. The platform could also become a distribution channel for his other innovations (QQN optimizer, Fractal Thought Engine). However, impact is highly contingent on execution speed and market positioning — the window for new entrants in this space is narrowing as incumbents consolidate.
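The ARR range quoted above follows from multiplying the customer count by the monthly price and annualizing. A quick check, assuming a flat monthly price per customer and no churn or discounts:

```python
MONTHS_PER_YEAR = 12


def annual_recurring_revenue(customers: int, monthly_price_usd: int) -> int:
    """ARR in USD, assuming every customer pays the same flat monthly price."""
    return customers * monthly_price_usd * MONTHS_PER_YEAR


# Low end: 50 customers at $5K/month. High end: 100 customers at $50K/month.
low = annual_recurring_revenue(customers=50, monthly_price_usd=5_000)
high = annual_recurring_revenue(customers=100, monthly_price_usd=50_000)

print(f"Low end:  ${low / 1e6:.0f}M ARR")   # $3M
print(f"High end: ${high / 1e6:.0f}M ARR")  # $60M
```

The 20x spread comes from pairing the smallest customer count with the lowest price and the largest count with the highest price, so a realistic mix would fall well inside the range.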

⚠️ Risks

- Capital risk: GPU infrastructure costs could burn through available resources before achieving product-market fit. A single enterprise customer’s GPU workload could cost thousands per month to serve, and margins may be thin until significant scale is reached.

- Competitive displacement: Major cloud providers (AWS, Azure, GCP) could release native agent orchestration features that commoditize the core value proposition overnight, as they’ve done repeatedly in adjacent infrastructure categories.

- Technical debt from rapid iteration: The AI orchestration space is evolving so fast that building a robust platform while keeping pace with new model architectures, agent frameworks, and GPU hardware generations could lead to unsustainable technical debt.

- Single-founder risk: Enterprise customers are wary of depending on platforms built by solo operators or very small teams. This creates a chicken-and-egg problem: winning enterprise trust requires a team, but funding a team requires enterprise revenue.

- Model provider dependency: The platform’s value depends on integrating with rapidly changing model APIs (OpenAI, Anthropic, open-source models). Breaking API changes, pricing shifts, or access restrictions from model providers could disrupt the platform’s functionality.

- Security and compliance liability: Managing enterprise GPU workloads means handling sensitive data and code. A security breach or compliance failure could be catastrophic both legally and reputationally.

- Market timing risk: If the ‘AI agent’ hype cycle deflates before enterprise adoption matures (as happened with previous AI waves), demand for orchestration infrastructure could plateau, leaving the platform over-invested in a cooling market.

📋 Requirements

- Seed funding of $2-5M or a very lean bootstrapping strategy with a clear path to revenue within 6 months. GPU infrastructure costs alone make this difficult to self-fund at meaningful scale.

- A small but strong founding team: at minimum, Andrew plus 1-2 additional engineers (ideally one focused on distributed systems/DevOps and one on frontend/developer experience) and someone with enterprise sales experience.

- Cloud GPU partnerships or credits: negotiating favorable pricing with GPU cloud providers (Lambda Labs, CoreWeave, or major clouds) is essential for viable unit economics. Strategic partnerships could also provide go-to-market advantages.

- Enterprise-grade security and compliance infrastructure: SOC 2 Type II certification, data encryption at rest and in transit, audit logging, role-based access control, and potentially HIPAA/FedRAMP compliance depending on target verticals.

- A clear vertical focus for initial go-to-market: rather than a horizontal platform, targeting a specific use case (e.g., multi-modal document processing for financial services, or AI-powered code review pipelines for engineering teams) would accelerate product-market fit.

- Developer documentation, SDKs, and a compelling developer experience: in the current market, developer adoption often precedes enterprise procurement. A great DX with clear documentation, Python/TypeScript SDKs, and quick-start templates is essential.

- Marketing and content investment to differentiate from the crowded field: technical blog posts, benchmarks showing GPU utilization advantages, case studies, and conference presence (NeurIPS, KubeCon, AI Engineer Summit) to build credibility and pipeline.

Option 2 Analysis: QQN Optimizer Applied to LLM Fine-Tuning Efficiency

✅ Pros

- Directly leverages existing QQN optimizer research — no need to build from scratch; this is an extension of work Andrew has already invested significant intellectual capital in, making it a natural and efficient next step.

- Targets one of the most active problems in applied ML right now: reducing the cost and compute requirements of LLM fine-tuning. The timing is favorable, as organizations from startups to hyperscalers are actively seeking more efficient fine-tuning methods.

- Second-order optimization methods are theoretically well-suited to the low-rank parameter spaces used in LoRA/QLoRA, where the effective dimensionality is small enough that curvature information becomes more tractable and more valuable. The motivation here is mathematical, not just marketing.

- Publishing rigorous benchmarks against AdamW (the de facto standard) on well-known LLM benchmarks creates an immediately verifiable and citable contribution, which is the fastest path to academic credibility and industry adoption.

- Could serve as a powerful ‘Trojan horse’ for broader adoption of the QQN optimizer — if it wins on LLM fine-tuning, researchers will naturally explore it for pretraining, reinforcement learning, and other domains.

- Positions Andrew as a bridge between optimization theory and practical LLM engineering — a rare and highly valued profile in the current AI landscape, potentially opening doors to advisory roles, collaborations with major labs, or acquisition interest.

- The parameter-efficient fine-tuning community (LoRA, QLoRA, DoRA, etc.) is extremely active on open-source platforms, meaning positive results would spread rapidly through Hugging Face, Twitter/X, and Reddit with minimal marketing effort.
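To make the "low-rank parameter space" argument in the list above concrete, the sketch below counts trainable parameters for a single LoRA-adapted weight matrix. It relies only on the standard LoRA factorization (a frozen `d_out x d_in` weight plus trainable factors of shape `d_out x r` and `r x d_in`). The layer size 4096 and rank 8 are illustrative assumptions, not values from the source.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Parameters in a dense weight matrix of shape (d_out, d_in)."""
    return d_in * d_out


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters in the two LoRA factors: (d_out x r) and (r x d_in)."""
    return rank * (d_in + d_out)


d_in = d_out = 4096   # illustrative layer size (assumption)
rank = 8              # illustrative LoRA rank (assumption)

dense = full_params(d_in, d_out)
lora = lora_trainable_params(d_in, d_out, rank)

print(dense)                    # 16777216
print(lora)                     # 65536
print(f"{lora / dense:.4%}")    # 0.3906%
```

Under these assumptions the adapter trains 1/256 of the layer's parameters. Any per-parameter curvature state an optimizer keeps shrinks by the same factor, which is the sense in which second-order information becomes more affordable. A dense Hessian over even 65,536 parameters is still large, so practical methods would presumably still use structured or limited-memory approximations.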

❌ Cons

- The optimization landscape for LLM fine-tuning is brutally competitive — teams at Google DeepMind, Meta FAIR, and numerous well-funded startups are actively working on this exact problem with far more compute resources.

- AdamW is notoriously hard to beat in practice despite theoretical arguments for second-order methods; many promising optimizers (K-FAC, Shampoo, etc.) have shown gains in specific settings but failed to achieve widespread adoption due to marginal or inconsistent improvements.

- Benchmarking rigorously across ‘standard LLM benchmarks’ is extremely expensive — even fine-tuning runs on 7B-70B parameter models require significant GPU hours, and results need to be averaged across multiple seeds and tasks to be credible.

- Second-order methods typically have higher per-step memory and compute overhead, which could negate wall-clock time savings even if they converge in fewer steps — this is especially problematic in the memory-constrained LoRA/QLoRA setting where users are already GPU-limited.

- Without affiliation with a major research lab, getting a paper accepted at top venues (NeurIPS, ICML, ICLR) is harder due to implicit credibility biases, even with strong results.

- The ‘Quasi-Quantum’ naming may invite skepticism from the ML community, which has grown wary of quantum-inspired branding that doesn’t deliver quantum advantages — this could be a perception hurdle regardless of the method’s actual merits.

📊 Feasibility

Moderately high feasibility. Andrew already has the QQN optimizer implemented and understands its theoretical foundations. The extension to LoRA/QLoRA fine-tuning is conceptually straightforward — the key challenge is engineering the optimizer to work within the memory and compute constraints of parameter-efficient fine-tuning frameworks (likely integrating with Hugging Face PEFT or similar). The main feasibility bottleneck is compute: running credible benchmarks across multiple model sizes (7B, 13B, 70B), multiple datasets (MMLU, HellaSwag, GSM8K, etc.), and multiple seeds requires substantial GPU access — likely 500-2000+ A100 GPU-hours for a thorough study. This is achievable with cloud credits or a modest budget ($5K-$20K), but it’s not trivial for an independent researcher. The technical skills required (GPU optimization, distributed training, ML benchmarking) are squarely in Andrew’s wheelhouse.
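The compute estimate above can be sanity-checked with simple arithmetic. The sketch below derives the per-GPU-hour rate implied by the quoted budget and counts the runs in a minimal benchmark grid. The grid dimensions are assumptions for illustration: three model sizes and three benchmarks as named in the paragraph, three seeds, and two optimizers (QQN and AdamW).

```python
def implied_rate(budget_usd: float, gpu_hours: float) -> float:
    """Dollars per GPU-hour implied by a budget and an hour count."""
    return budget_usd / gpu_hours


def grid_runs(model_sizes: int, benchmarks: int, seeds: int, optimizers: int) -> int:
    """Number of runs in a full factorial benchmark grid."""
    return model_sizes * benchmarks * seeds * optimizers


print(implied_rate(5_000, 500))      # 10.0
print(implied_rate(20_000, 2_000))   # 10.0

runs = grid_runs(model_sizes=3, benchmarks=3, seeds=3, optimizers=2)
print(runs)                          # 54

# Average GPU-hours available per run at each end of the quoted range.
for total_hours in (500, 2_000):
    print(total_hours, round(total_hours / runs, 1))
```

Both ends of the range imply the same $10 per GPU-hour, so the dollar figure scales linearly with the hour estimate. Whether that rate is conservative depends on the provider and on overheads such as storage and failed runs. The per-run average also shows how quickly a full grid consumes the budget once hyperparameter search is added on top.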

💥 Impact

Potentially very high impact. If QQN demonstrably outperforms AdamW on LLM fine-tuning — even by 15-20% in compute efficiency — this would be a significant result that could attract thousands of citations, widespread open-source adoption, and serious industry interest. Even partial results (e.g., QQN matches AdamW quality in 40% fewer steps on specific model/task combinations) would be publishable and noteworthy. The downstream effects could include: invitations to collaborate with major AI labs, consulting opportunities with companies doing large-scale fine-tuning, potential integration into frameworks like Hugging Face Transformers or PyTorch, and establishment of Andrew as a recognized optimization researcher. This single result could serve as the cornerstone of a broader research program and significantly elevate his professional profile in the AI community.

⚠️ Risks

- QQN may not actually outperform AdamW on LLM fine-tuning in practice — second-order methods have a long history of promising theoretical advantages that don’t materialize in large-scale deep learning, and negative results are much harder to publish or leverage.

- Memory overhead of QQN could make it impractical for the exact use case it targets: LoRA/QLoRA users are typically memory-constrained, and any optimizer that requires additional state beyond AdamW’s two per-parameter moment estimates (first and second moment) faces an uphill battle.

- Results may be highly sensitive to hyperparameter tuning, making it difficult to demonstrate robust improvements — critics could argue that with equal tuning effort, AdamW would match QQN’s performance.

- A well-funded lab could independently develop a similar or superior optimizer and publish first, especially given the intense focus on this problem area — the window of opportunity for novel contributions is narrowing rapidly.

- The ‘Quasi-Quantum’ branding risk: the ML community may dismiss the work as hype before engaging with the actual mathematics, particularly given the current backlash against quantum computing overclaims.

- Benchmarking methodology could be challenged — the LLM evaluation community is increasingly skeptical of benchmark results, and any perceived cherry-picking of tasks, model sizes, or hyperparameter configurations could undermine credibility.

- Even with strong results, adoption may be slow if the optimizer requires non-trivial integration effort or doesn’t have a clean, well-documented open-source implementation compatible with popular training frameworks.
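The memory-overhead risk in the list above can be sized roughly. AdamW keeps two state values per trainable parameter (first- and second-moment estimates). A limited-memory quasi-Newton method in the style of L-BFGS keeps a history of vector pairs instead. The source does not state QQN's actual state footprint, so the history length of 10 and the 20M trainable parameters below are purely illustrative assumptions.

```python
BYTES_FP32 = 4  # optimizer state is commonly held in 32-bit floats


def state_bytes(n_params: int, vectors_per_param: int,
                bytes_per_value: int = BYTES_FP32) -> int:
    """Bytes of optimizer state when each parameter carries N stored values."""
    return n_params * vectors_per_param * bytes_per_value


n_trainable = 20_000_000   # hypothetical LoRA adapter size (assumption)
history = 10               # hypothetical L-BFGS-style history length (assumption)

adamw = state_bytes(n_trainable, 2)                  # two moment estimates
quasi_newton = state_bytes(n_trainable, 2 * history) # one (s, y) pair per step

print(adamw / 1e6, "MB")          # 160.0 MB
print(quasi_newton / 1e6, "MB")   # 1600.0 MB
```

Under these assumptions the history-based method needs ten times AdamW's optimizer state. In absolute terms that may still be small next to the frozen base model, which is why the comparison only becomes meaningful once QQN's real footprint is measured.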

📋 Requirements

- Significant GPU compute access: roughly 500-2000 A100 GPU-hours for comprehensive benchmarking across model sizes and tasks, costing approximately $5K-$20K on cloud platforms or requiring institutional/sponsor compute grants.

- Deep integration with existing fine-tuning frameworks: the QQN optimizer needs to be implemented as a drop-in replacement for AdamW within PyTorch, compatible with Hugging Face PEFT, bitsandbytes, and DeepSpeed/FSDP for distributed training.

- Rigorous experimental methodology: pre-registered experimental protocols, multiple random seeds (minimum 3-5 per configuration), standardized evaluation suites (Open LLM Leaderboard tasks), and fair hyperparameter search budgets for both QQN and AdamW baselines.

- Strong technical writing and presentation skills for targeting top ML venues (NeurIPS, ICML, ICLR) or high-visibility preprint platforms (arXiv), including clear theoretical motivation for why QQN should excel in the low-rank fine-tuning regime.

- A polished, well-documented open-source release (pip-installable package, comprehensive README, example notebooks) to maximize adoption and community engagement — the code must be production-quality, not research-grade.

- Engagement strategy for the ML community: Twitter/X threads, blog posts with accessible explanations, Hugging Face model cards showing QQN-fine-tuned models, and potentially a Weights & Biases report for reproducibility.

- Theoretical analysis connecting QQN’s properties to the specific geometry of LoRA parameter spaces — this strengthens the narrative beyond ‘we tried it and it worked’ to ‘here’s why it works,’ which is critical for academic credibility and differentiation from the many optimizer papers that show marginal empirical gains.

Option 3 Analysis: Fractal Thought Engine for Procedural World Generation

✅ Pros

- Directly leverages Andrew’s existing Fractal Thought Engine architecture — the recursive, hierarchical content generation paradigm maps naturally onto procedural world generation where macro-level coherence must be maintained across micro-level detail, minimizing the need to build from scratch.

- Combines three of Andrew’s core strengths (GPU computing, AI orchestration, creative content generation) in a single product, creating a defensible moat that few competitors could replicate — most game AI teams lack deep systems engineering, and most systems engineers lack AI content generation experience.

- The gaming and metaverse market is enormous ($200B+ gaming industry) and actively hungry for procedural generation solutions. No Man’s Sky demonstrated massive consumer appetite for infinite worlds, but its content was criticized for repetitiveness — an AI-driven fractal approach could solve exactly this pain point.

- The ‘fractal’ metaphor is not just branding — it’s architecturally apt. Real fractal generation maintains self-similarity across scales, which is precisely the challenge in procedural worlds: a village should feel consistent with its region, which should feel consistent with its continent. This gives the project genuine intellectual novelty and potential for academic publication alongside commercial application.

- This idea has multiple viable go-to-market paths: it could be a middleware SDK for game developers, a standalone demo/game, a research paper, or a metaverse infrastructure play — providing strategic optionality without requiring full commitment to any single path.

- GPU-accelerated rendering pipelines are already in Andrew’s wheelhouse, so the integration between generation and visualization can be tightly optimized rather than relying on loose coupling between separate AI and rendering systems, which is a common bottleneck in competing approaches.

- Strong narrative potential for thought leadership and community building — ‘AI-generated infinite worlds’ is inherently demo-friendly and viral. A compelling video walkthrough could attract significant attention from both the AI and gaming communities simultaneously.
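The cross-scale consistency idea in the list above (a village consistent with its region, a region with its continent) can be illustrated with hierarchical seeding, a common procedural-generation technique. This is a generic sketch, not the Fractal Thought Engine's actual design: `child_seed` and `generate` are hypothetical names, and the "style" and "variation" fields stand in for real content.

```python
import hashlib
import random


def child_seed(parent_seed: int, name: str) -> int:
    """Derive a stable child seed from the parent's seed and the child's name."""
    digest = hashlib.sha256(f"{parent_seed}/{name}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def generate(name: str, seed: int, depth: int, inherited_style: str) -> dict:
    """Generate a node that inherits its parent's style and adds local variation."""
    rng = random.Random(seed)
    node = {
        "name": name,
        "style": inherited_style,              # constraint passed down from the parent
        "variation": round(rng.random(), 3),   # local detail, deterministic per seed
        "children": [],
    }
    if depth > 0:
        for i in range(2):
            child_name = f"{name}.{i}"
            node["children"].append(
                generate(child_name, child_seed(seed, child_name),
                         depth - 1, inherited_style)
            )
    return node


world = generate("continent", seed=42, depth=2, inherited_style="alpine")

# Any sub-region can be regenerated on demand without storing the whole world.
region = generate("continent.0", child_seed(42, "continent.0"), 1, "alpine")
print(region == world["children"][0])  # True
```

Because each child's seed depends only on its parent's seed and its own name, revisiting a location reproduces the same content. Determinism alone does not deliver narrative coherence, which is the harder problem the consistency discussion elsewhere in this analysis refers to.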

❌ Cons

- Procedural world generation at production quality requires deep domain expertise in game design, level design, narrative design, and 3D art pipelines — areas where Andrew may have interest but not demonstrated professional depth. The gap between ‘technically impressive demo’ and ‘actually fun/engaging game world’ is vast and often underestimated by engineers.

- The competitive landscape is intensifying rapidly: companies like Promethean AI, Luma AI, and major studios’ internal R&D teams are pouring resources into AI-driven world generation. A solo or small-team effort risks being outpaced by well-funded competitors before reaching market.

- Maintaining ‘long-range consistency’ in procedurally generated worlds is an unsolved research problem at scale. While the fractal metaphor is appealing, actual implementation of narrative coherence across infinite space is extraordinarily difficult — players will quickly find inconsistencies that break immersion.

- The rendering pipeline requirements for explorable 3D environments are substantial and distinct from the GPU computing Andrew has done for AI/ML workloads. Real-time 3D rendering involves shader programming, asset management, physics simulation, and optimization for consumer hardware — a different beast from training neural networks on GPU clusters.

- Market timing risk: the metaverse hype cycle has cooled significantly since 2022, and investor/consumer appetite for ‘infinite virtual worlds’ may not recover in the near term, making it harder to attract funding or partnerships.

- Content quality expectations in gaming are extremely high. Players compare everything to AAA titles with hundreds of artists. AI-generated content that looks ‘pretty good’ is often perceived as ‘uncanny’ or ‘soulless’ by gaming audiences, creating a quality bar that’s hard to clear without significant artistic input.

📊 Feasibility

Moderate feasibility with significant caveats. The core technical architecture — recursive AI content generation on GPU — is well within Andrew’s capabilities and could produce a compelling proof-of-concept within 3-6 months. However, moving from proof-of-concept to a product that game developers or consumers would actually use requires crossing multiple domain boundaries (game engine integration, real-time rendering optimization, narrative design, UX for interactive worlds) that would likely require collaborators or a small team. The most realistic near-term path is a middleware/SDK approach targeting indie game developers, or a research demonstration that generates attention and partnerships. A full standalone game or metaverse platform would require 2-3 years and a team of 5-10+ people with complementary skills. The fractal generation concept itself is technically sound but would need novel solutions for the consistency problem at scale — this is feasible as a research contribution even if the full product vision takes longer.

💥 Impact

If successfully executed, this could be a category-defining contribution. A system that genuinely produces infinite, coherent, explorable worlds with emergent narrative would be transformative for gaming, simulation, training environments, and virtual experiences. Even a partial success — say, a middleware that generates consistent terrain and basic narrative scaffolding — could be commercially valuable to the thousands of indie studios that lack resources for hand-crafted world building. The thought leadership impact would be substantial regardless of commercial outcome: demos of AI-generated explorable worlds are inherently compelling and shareable, positioning Andrew at the intersection of two massive industries (AI and gaming). If the fractal consistency approach yields novel research results, it could also produce high-impact academic publications. The ceiling is very high — this is genuinely a moonshot with transformative potential — but the floor is also reasonable, as even intermediate results have value as demos, papers, or SDK components.

⚠️ Risks

- Scope creep is the primary risk: the vision of ‘infinite coherent worlds’ is so expansive that it’s easy to spend years building infrastructure without ever shipping something usable. Without disciplined scoping to a minimum viable demonstration, the project could become an endless R&D effort.

- The ‘uncanny valley’ of procedural content: generated worlds that are 95% coherent but 5% nonsensical can feel worse than obviously artificial worlds, because they set up expectations of realism and then violate them. This could make the product feel broken rather than innovative.

- Dependency on rapidly evolving AI models: if the Fractal Thought Engine relies on specific LLM or diffusion model capabilities, those underlying models may change, be deprecated, or be surpassed, requiring constant re-architecture.

- IP and licensing complications: if the system uses foundation models (GPT, Stable Diffusion, etc.) for content generation, the licensing terms for commercial game content may be restrictive or legally uncertain, creating business risk.

- Performance and cost: real-time AI generation for interactive 3D worlds requires either massive cloud GPU resources (expensive) or highly optimized on-device inference (technically challenging). The economics may not work for consumer-facing applications without significant optimization breakthroughs.

- Community and market reception: the gaming community has shown skepticism toward AI-generated content, with concerns about replacing human artists and designers. A product perceived as ‘AI replacing game developers’ could face backlash rather than adoption.

- Single-person bottleneck: the breadth of skills required (AI, GPU, rendering, game design, narrative) means Andrew would be stretched thin across too many domains, potentially producing mediocre results in each rather than excellence in any.

📋 Requirements

- Adaptation of the existing Fractal Thought Engine architecture to output structured world data (terrain heightmaps, object placement, NPC behavior trees, narrative graphs) instead of, or in addition to, text/media content. This is the core technical pivot required.

- Integration with an established game engine (Unity or Unreal Engine) or a custom real-time rendering pipeline capable of visualizing generated worlds at interactive framerates. Using an existing engine dramatically reduces scope; building custom adds differentiation but multiplies effort.

- A novel ‘consistency engine’ or constraint satisfaction system that ensures long-range coherence across generated content — this is the key research contribution needed and likely requires new algorithmic work beyond what the current Fractal Thought Engine provides.

- GPU infrastructure for both generation and rendering, potentially requiring different GPU configurations (compute-optimized for AI generation, graphics-optimized for rendering) or unified architectures that can handle both workloads.

- Collaborators or contractors with game design and 3D art expertise to bridge the gap between technically generated content and aesthetically/experientially compelling worlds. At minimum, a game designer and a 3D artist would significantly improve output quality.

- A disciplined scoping strategy that defines a compelling but achievable first milestone — e.g., ‘generate a coherent 1km² fantasy village with consistent architecture, terrain, and 10 NPCs with backstories’ — rather than attempting infinite worlds from day one.

- Funding or runway for 6-12 months of focused development to reach a demo-quality proof of concept. This could come from personal savings, a small grant (e.g., Epic MegaGrants, which funds Unreal-based innovation), or angel investment from gaming-adjacent investors.

Option 4 Analysis: Fractional CTO for AI-Native Startups Portfolio

✅ Pros

- Directly leverages the full breadth of Andrew’s 20+ year skill set — the combination of production-grade distributed systems engineering, GPU computing, and deep ML research is genuinely rare and exactly what AI-native startups need but struggle to find in a single person.

- Portfolio approach creates asymmetric upside: equity stakes in 4-6 early-stage AI companies means even one significant exit could generate outsized returns, while cash retainers cover living expenses. This is a well-proven model for experienced technical leaders.

- Immediate market demand with minimal ramp-up time. The AI startup ecosystem is growing rapidly, and founders frequently cite ‘finding a technical co-founder/CTO who actually understands both ML and production systems’ as a top bottleneck. Andrew could plausibly begin generating revenue within weeks.

- Each engagement serves as a learning laboratory — exposure to diverse AI application domains (healthcare, fintech, creative tools, etc.) cross-pollinates ideas and keeps Andrew at the cutting edge without being locked into a single company’s trajectory.

- Premium positioning in a crowded advisory market. Most fractional CTOs are either pure infrastructure people who don’t understand ML, or ML researchers who can’t architect production systems. Andrew’s ability to bridge both worlds is a genuine differentiator that justifies premium pricing ($300-500+/hr or $15-25K/month retainers).

- Creates a natural deal flow and network effect — successful engagements lead to referrals, and the portfolio itself becomes a credential. Over time, this can evolve into a venture studio or fund if desired.

- Preserves optionality for Andrew’s own projects (Cognotik, MindsEye, Fractal Thought Engine) since part-time engagements leave bandwidth for personal R&D and open-source work, unlike a full-time CTO role.

❌ Cons

- Context-switching tax across 4-6 companies is cognitively expensive and can degrade the quality of deep technical work. AI architecture decisions require sustained focus, and splitting attention may lead to surface-level guidance rather than the deep systems thinking that is Andrew’s core value.

- Equity in early-stage startups is highly illiquid and, for any single company, statistically likely to end up worth zero: the commonly cited base rate for startup failure is around 90%. The portfolio approach mitigates this but doesn’t eliminate the risk of years of equity compensation yielding nothing.

- Fractional CTO roles can devolve into ‘firefighting as a service’ where startups call on you only during crises rather than for strategic architecture work. This is draining and doesn’t leverage Andrew’s highest-value capabilities.

- Scaling is inherently limited by Andrew’s personal time. Unlike a product or platform, this is fundamentally a services business with a hard ceiling on revenue unless he builds a team or transitions to a different model.

- Potential conflicts of interest when advising multiple companies in adjacent AI spaces. Startups may be uncomfortable sharing proprietary technical details if they perceive overlap with other portfolio companies.

- The ‘fractional CTO’ title, while increasingly accepted, still carries stigma in some VC circles where investors want to see a dedicated full-time technical leader. This could limit the caliber of startups willing to engage.

- Risk of becoming a perpetual advisor rather than a builder. Over time, this path may feel unfulfilling for someone with Andrew’s research and creation instincts, as the work is fundamentally about enabling others’ visions rather than pursuing his own.
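The equity point in the list above can be quantified under a simple model. The sketch below takes the roughly 90% failure rate cited there and assumes, for illustration only, that portfolio companies fail independently. It then computes the chance that at least one of 4-6 companies does not fail. `p_at_least_one_survivor` is a hypothetical helper.

```python
def p_at_least_one_survivor(n_companies: int, failure_rate: float = 0.90) -> float:
    """Probability that at least one company survives, assuming independent outcomes."""
    return 1 - failure_rate ** n_companies


for n in (4, 6):
    print(n, round(p_at_least_one_survivor(n), 3))
# 4 0.344
# 6 0.469
```

Even with six companies, the odds that every equity stake goes to zero remain slightly better than even under these assumptions. Survival is also a weaker outcome than a significant exit, and failures tend to correlate during funding downturns, so the real picture is probably less favorable than this estimate.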

📊 Feasibility

Highly feasible in the near term — this is one of the most immediately actionable options available. Andrew already possesses every technical skill required, and the market for fractional AI CTOs is hot. He could begin sourcing engagements within 2-4 weeks through existing networks, AI startup communities (YC, Techstars alumni networks), LinkedIn positioning, and platforms like Toptal or specialized fractional executive marketplaces. The main friction is business development and sales, not technical capability. Setting up the legal and financial infrastructure (LLC, equity agreement templates, retainer contracts) is straightforward. The portfolio model of 4-6 companies is realistic if each engagement is 8-15 hours/week, though finding the right mix of companies that are well-funded enough to pay meaningful retainers AND offer meaningful equity requires careful selection.

💥 Impact

Medium-to-high impact on multiple dimensions. Financially, a portfolio of 4-6 retainers at $15-20K/month each generates $60-120K/month in cash while accumulating equity positions. Reputationally, successful engagements build a track record that compounds — each company that ships a production AI system with Andrew’s architecture becomes a case study. Strategically, this positions Andrew at the center of the AI startup ecosystem, giving him early visibility into emerging trends, technologies, and market opportunities that could inform his own future ventures. The impact on the broader ecosystem is also meaningful: early-stage AI startups frequently fail due to poor technical architecture decisions made in the first 6-12 months, and having an experienced systems architect involved early can materially improve outcomes. However, the impact is ultimately bounded by the success of the portfolio companies and Andrew’s personal bandwidth.
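The cash figures above, combined with the 8-15 hours/week per engagement from the feasibility assessment, can be checked together. This is a minimal sketch: `portfolio` is a hypothetical helper, and 52/12 weeks per month is the only figure not taken from the text.

```python
WEEKS_PER_MONTH = 52 / 12


def portfolio(n_clients: int, monthly_retainer: float, hours_per_week: float):
    """Return (monthly cash, total weekly hours, effective hourly rate)."""
    monthly_cash = n_clients * monthly_retainer
    weekly_hours = n_clients * hours_per_week
    hourly_rate = monthly_retainer / (hours_per_week * WEEKS_PER_MONTH)
    return monthly_cash, weekly_hours, hourly_rate


low = portfolio(n_clients=4, monthly_retainer=15_000, hours_per_week=8)
high = portfolio(n_clients=6, monthly_retainer=20_000, hours_per_week=15)

print(low[0], low[1], round(low[2], 2))     # 60000 32 432.69
print(high[0], high[1], round(high[2], 2))  # 120000 90 307.69
```

The $60-120K/month range checks out, and the implied hourly rates fall inside the $300-500/hr band quoted earlier. The hours are the binding constraint: six clients at 15 hours/week totals 90 hours/week, so the upper ends of both ranges cannot realistically be combined.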

⚠️ Risks

- Startup failure cascade: if 4-6 portfolio companies all struggle simultaneously (e.g., during an AI funding winter), Andrew faces both reduced cash flow and worthless equity, with no single stable employer to fall back on.

- Reputation risk from association with failed startups. While startup failure is normalized, repeated association with companies that fail due to technical issues (even if not Andrew’s fault) could damage his brand.

- Scope creep and boundary erosion: startups in crisis will pressure a fractional CTO to take on full-time responsibilities at part-time compensation. Without firm boundaries, this leads to burnout and undercompensation.

- Legal and IP complications: advising multiple AI companies creates potential for inadvertent IP cross-contamination or accusations thereof. Even without actual wrongdoing, the perception of conflict can be damaging.

- Market saturation risk: the fractional CTO model is becoming increasingly popular, and as more senior engineers enter this space, pricing pressure and competition will increase. The AI-specific niche helps but isn’t immune.

- Opportunity cost: time spent on fractional CTO work is time not spent building Andrew’s own products (Cognotik, MindsEye, etc.), which could have much higher long-term value if they succeed. The steady income of consulting can become a golden cage that prevents bigger bets.

- Client dependency and concentration risk: if one or two clients represent a disproportionate share of revenue and they churn, the financial impact is immediate and significant.

📋 Requirements

- Professional services infrastructure: LLC or S-Corp formation, professional liability insurance (E&O), standardized engagement contracts with clear IP assignment clauses, equity agreement templates reviewed by a startup-savvy attorney.

- Business development and personal branding: a polished online presence (website, LinkedIn, portfolio of past work) specifically positioned for the fractional CTO market. Case studies, architecture diagrams, and testimonials from past projects are essential sales collateral.

- Network access to AI startup deal flow: connections to VC firms, accelerators (YC, Techstars, AI-focused programs), angel investor networks, and founder communities. This is the single most important requirement — the quality of companies in the portfolio determines everything.

- Time management discipline and tooling: with 4-6 concurrent engagements plus personal projects, rigorous scheduling, communication protocols (async-first), and clear availability boundaries are essential to prevent burnout and maintain quality.

- Conflict-of-interest management framework: a clear, documented policy for handling overlapping domains across portfolio companies, including disclosure protocols and information barriers where necessary.

- Financial runway or bridge income: while ramping up the portfolio, there may be a 2-4 month period of reduced income. Having 3-6 months of savings or a bridge engagement provides stability during the transition.

- Continuous technical currency: maintaining hands-on skills with the latest AI frameworks, model architectures, and infrastructure tools (not just advisory-level knowledge) is critical to credibility. This means dedicating time to personal projects, open-source contributions, and experimentation even while consulting.

Option 5 Analysis: Open-Source MindsEye as Composable Vision AI Framework

✅ Pros

- Directly leverages Andrew’s existing MindsEye framework and QQN optimizer — no need to build from scratch, just refactor and modularize existing work, dramatically reducing time-to-market

- The composable plugin architecture is a genuinely differentiated positioning in the vision AI space; most frameworks (OpenCV, torchvision, MMDetection) are monolithic or loosely modular, not truly composable with swappable optimizers and rendering backends

- Open-sourcing creates a powerful personal brand and credibility flywheel — it positions Andrew as a recognized authority in vision AI, which opens doors for consulting, speaking, hiring, and partnerships

- Integration with the Hugging Face and PyTorch ecosystems taps into massive existing developer communities (Hugging Face hosts 500K+ models; PyTorch dominates research), providing built-in distribution channels rather than needing to build an audience from zero

- The marketplace model for contributed modules creates network effects — each new contributor makes the framework more valuable, attracting more users, which attracts more contributors, creating a self-reinforcing growth loop

- Enterprise support + hosted compute is a proven open-source monetization model (Red Hat, Elastic, Databricks pattern) that doesn’t require venture funding to start generating revenue

- The QQN optimizer, a unique component that originated in Andrew’s own work, gives the framework a genuine technical moat: even if competitors fork the code, the deep expertise behind QQN and its continued evolution remains with Andrew

❌ Cons

- The computer vision framework space is extremely crowded — OpenCV, torchvision, MMDetection, Detectron2, and dozens of others already have massive communities and corporate backing (Meta, Google, Intel), making differentiation and adoption very challenging

- Maintaining a high-quality open-source project is essentially a full-time job: triaging issues, reviewing PRs, writing documentation, managing releases, responding to community questions — this is a massive ongoing time commitment that can easily consume all productive hours

- The ‘composable plugin architecture’ requires extremely careful API design upfront; getting the abstraction boundaries wrong early on leads to painful breaking changes that alienate early adopters and fragment the community

- Monetization through enterprise support is notoriously difficult for solo maintainers — enterprises want SLAs, 24/7 support, and organizational stability that a single person or small team struggles to credibly offer

- The marketplace for contributed modules sounds appealing, but paid module marketplaces have historically seen very low conversion rates; most developers expect open-source modules to be free, and such marketplaces (the Unity Asset Store being a rare exception) struggle outside of game development

- Hugging Face integration, while strategically smart, means competing on Hugging Face’s own platform, where they control discovery and ranking and can easily build competing features if the approach proves successful

📊 Feasibility

Moderately feasible in the near-term (6-12 months for initial release), but with significant caveats. The core technical work — refactoring MindsEye into modular components, designing plugin APIs, writing GPU-optimized reference implementations — is well within Andrew’s skillset and could be accomplished as a focused solo effort. However, the full vision (thriving community, marketplace, enterprise customers) requires sustained multi-year effort and likely additional contributors or co-maintainers. The biggest feasibility challenge is not technical but social: building community momentum in a crowded space requires relentless developer relations work, content creation, conference talks, and responsiveness that goes far beyond writing good code. A realistic path would be to start with a focused niche (e.g., artistic/generative vision pipelines where MindsEye’s origins give it natural credibility) rather than trying to be a general-purpose vision framework from day one.
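To make the plugin API design work mentioned above more concrete, here is a minimal sketch of what "swappable optimizers and rendering backends" could look like. It is written in Python only because the option targets the PyTorch and Hugging Face ecosystems; it is not MindsEye's actual API. Every name (`Optimizer`, `Renderer`, `register`, `Pipeline`, `GradientDescent`, `TextRenderer`) is hypothetical, and plain gradient descent stands in for QQN, which is not reproduced here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Protocol

Vector = List[float]


class Optimizer(Protocol):
    """Hypothetical plugin interface: one update step on a parameter vector."""
    def step(self, params: Vector, grad: Vector) -> Vector: ...


class Renderer(Protocol):
    """Hypothetical plugin interface: turns parameters into an output artifact."""
    def render(self, params: Vector) -> str: ...


# Registries map a plugin name to a factory; contributed modules would register here.
OPTIMIZERS: Dict[str, Callable[[], Optimizer]] = {}
RENDERERS: Dict[str, Callable[[], Renderer]] = {}


def register(registry, name):
    """Decorator that adds a plugin class to the given registry under `name`."""
    def wrap(cls):
        registry[name] = cls
        return cls
    return wrap


@register(OPTIMIZERS, "sgd")
class GradientDescent:
    """Plain gradient descent, standing in for any swappable optimizer."""
    def __init__(self, lr: float = 0.1):
        self.lr = lr

    def step(self, params, grad):
        return [p - self.lr * g for p, g in zip(params, grad)]


@register(RENDERERS, "text")
class TextRenderer:
    """Trivial rendering backend that formats parameters as text."""
    def render(self, params):
        return ", ".join(f"{p:.3f}" for p in params)


@dataclass
class Pipeline:
    """Composes one optimizer and one renderer, each chosen by name."""
    optimizer: Optimizer
    renderer: Renderer

    @classmethod
    def from_names(cls, optimizer: str, renderer: str) -> "Pipeline":
        return cls(OPTIMIZERS[optimizer](), RENDERERS[renderer]())

    def run(self, params, grad_fn, steps):
        for _ in range(steps):
            params = self.optimizer.step(params, grad_fn(params))
        return self.renderer.render(params)


def quadratic_grad(params):
    """Gradient of f(x) = sum(x_i^2), used only as a toy objective."""
    return [2.0 * p for p in params]


if __name__ == "__main__":
    pipeline = Pipeline.from_names("sgd", "text")
    print(pipeline.run([1.0, -2.0], quadratic_grad, steps=50))
```

The point of the sketch is the abstraction boundary: the pipeline depends only on the two small interfaces, so a different optimizer or backend can be registered without touching pipeline code. Those interfaces are exactly what is costly to change once early adopters depend on them.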

💥 Impact

If successful, this could establish Andrew as a recognized figure in the open-source AI ecosystem, comparable to how Jeremy Howard is associated with fast.ai or Hugging Face’s founders are associated with NLP democratization. The framework could become a standard tool for researchers and practitioners working on composable vision pipelines, particularly in creative AI, neural style transfer, and generative art domains where MindsEye has natural strengths. Enterprise revenue potential is moderate — likely $100K-$500K/year range for a well-maintained niche framework with hosted compute, scaling higher only with team growth. The most significant impact may be indirect: the credibility, network, and visibility gained from maintaining a successful open-source project opens doors to advisory roles, investment opportunities, acquisition interest, and high-value consulting engagements that far exceed direct monetization.

⚠️ Risks

- Community building failure: the project launches to crickets, gets a brief burst of GitHub stars but no sustained contributors, and becomes yet another abandoned open-source project — this is the most common outcome and the most likely risk

- Scope creep and burnout: trying to support every use case, every GPU backend, every ML framework integration leads to an unsustainable maintenance burden that burns Andrew out within 12-18 months

- Corporate co-option: a large company (Google, Meta, NVIDIA) releases a similar composable vision framework with 100x the engineering resources, instantly making MindsEye redundant — this has happened repeatedly in ML tooling (e.g., TensorFlow vs. Theano)

- API design lock-in: early architectural decisions in the plugin system prove wrong, but by the time this is apparent, enough users depend on the current API that breaking changes cause a community split or mass exodus

- Security and liability exposure: open-source GPU-optimized code running on enterprise infrastructure is a potential attack surface; a serious CVE in the framework could damage reputation and create legal exposure

- Monetization timing mismatch: the project requires 2-3 years of unpaid community building before enterprise revenue materializes, creating a significant financial gap that may not be sustainable without other income sources

- Intellectual property concerns: if any MindsEye components were developed under previous employment contracts or with proprietary dependencies, open-sourcing could create legal complications

📋 Requirements

- Significant refactoring effort to transform MindsEye from its current state into a clean, modular architecture with well-defined plugin interfaces, comprehensive API documentation, and stable versioning — estimated 3-6 months of focused development

- High-quality documentation, tutorials, and example notebooks that lower the barrier to entry — this is often the difference between adopted and abandoned open-source projects, and typically requires as much effort as the code itself

- CI/CD infrastructure for multi-platform testing (different GPUs, CUDA versions, OS), automated benchmarking, and release management — likely requiring cloud compute budget of $500-2000/month

- Developer relations and community management skills: active presence on Discord/Slack, regular blog posts, conference talks (NeurIPS, CVPR workshops), Twitter/X engagement, and YouTube tutorials to build visibility

- Legal review of licensing strategy (Apache 2.0 vs. MIT vs. dual-license for enterprise), IP ownership verification, and contributor license agreements

- Financial runway of 18-24 months to sustain development before meaningful monetization, or a parallel income stream (consulting, part-time employment) to fund the open-source work

- At least 2-3 early adopter partners or collaborators who can provide real-world use cases, feedback on API design, and social proof — ideally from academic labs or creative AI studios who would benefit from composable vision pipelines
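As a rough back-of-the-envelope check on the figures above, the sketch below multiplies the stated CI/CD compute budget ($500-2000/month) by the stated runway (18-24 months). It assumes the CI spend runs for the whole runway at a constant monthly rate, which the text does not state; the function name `cumulative_range` is illustrative only.

```python
def cumulative_range(monthly_low, monthly_high, months_low, months_high):
    """Return the (lowest, highest) cumulative cost over a range of durations."""
    return monthly_low * months_low, monthly_high * months_high


# Figures taken from the requirements list: $500-2000/month over 18-24 months.
ci_low, ci_high = cumulative_range(500, 2000, 18, 24)

if __name__ == "__main__":
    print(f"Cumulative CI/CD compute over the runway: ${ci_low:,} to ${ci_high:,}")
```

Under those assumptions the compute line item alone comes to between $9,000 and $48,000 before any revenue, on top of living costs during the same period.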

Option 6 Analysis: Technical Blog Series: Building AI Systems That Actually Scale

✅ Pros

- Directly leverages 20+ years of hard-won production experience — this is not knowledge that can be easily replicated or faked, giving the content a natural moat of authenticity and depth that AI-generated or junior-level content cannot match

- Occupies a genuinely underserved niche: most ML content is either academic/theoretical or shallow tutorials; deep systems-level content about production AI infrastructure from a practitioner who has built real platforms (Cognotik, MindsEye) is rare and highly valued by senior engineers and decision-makers

- Creates a powerful compounding flywheel — each post builds SEO authority, grows an email list, attracts inbound consulting leads, establishes credibility for future product launches, and serves as a living portfolio that demonstrates capability far better than a resume

- Low capital investment with high optionality: a blog/newsletter can be launched in days and pivoted easily based on audience response, while simultaneously serving as raw material for conference talks, book proposals, paid courses, and consulting pitches

- The content creation process itself forces structured reflection on past work, which can surface new research directions, product ideas, and framework improvements — it’s a generative activity, not just a marketing one

- Builds a targeted audience of exactly the right people (senior ML engineers, CTOs, VPs of Engineering at AI companies) who are potential customers for consulting, tools, or platforms Andrew might build

- Can showcase specific proprietary innovations (QQN optimizer, Fractal Thought Engine architecture) in a way that generates interest and potential collaborators/contributors without giving away full implementation details

❌ Cons

- Content creation at a high technical level is genuinely time-consuming — a single deeply researched post with benchmarks, diagrams, and code examples can take 20-40 hours, creating significant opportunity cost against building products or doing paid work

- The AI/ML content space is extremely noisy; even high-quality content can struggle to gain traction without an existing audience or distribution network, leading to a potentially long and discouraging ramp-up period

- Consistency is critical for audience building but difficult to maintain alongside engineering work — many technical blogs start strong and then go dormant, which can actually hurt credibility more than never starting

- There’s a tension between sharing enough technical depth to be valuable and protecting proprietary insights that could form the basis of competitive products or consulting engagements

- Blog content alone rarely generates significant direct revenue; it’s an indirect play that requires patience and a clear strategy for converting attention into business outcomes

- The audience for deeply technical systems-level AI content is relatively small compared to broader ML/AI audiences, which limits viral potential even if the content is excellent

📊 Feasibility

Highly feasible and among the most immediately actionable ideas in the entire brainstorm. Andrew already possesses all the knowledge, experience, and technical writing ability needed. The infrastructure is trivial (Substack, Ghost, or a static site). The main constraint is time allocation and sustained commitment. He could realistically launch with a strong inaugural post within 1-2 weeks and establish a cadence of 2-4 posts per month. Given the depth of experience, the raw material already exists; the challenge is editing and structuring it for maximum impact.

💥 Impact

Medium-to-high impact over a 6-18 month horizon, with compounding returns. In the near term (0-6 months), expect modest but highly targeted audience growth — perhaps 500-2,000 subscribers of exactly the right profile. In the medium term (6-18 months), a well-executed series could establish Andrew as a recognized voice in production AI systems, leading to conference invitations, podcast appearances, consulting inbound, and potential book or course opportunities. The indirect effects are often more valuable than direct metrics: a single post that resonates with the right CTO could lead to a six-figure consulting engagement or a key hire for a future venture. Long-term, the content archive becomes a durable asset that continues generating leads and credibility indefinitely. This also creates a foundation that amplifies every other idea in the brainstorm — product launches, open-source projects, and research papers all benefit from an established audience and brand.

⚠️ Risks

- Burnout and abandonment: the most common failure mode for technical blogs is inconsistency; starting strong and then going silent can signal unreliability rather than expertise

- Audience building takes longer than expected, leading to discouragement — it’s common for excellent technical content to take 12-18 months to gain meaningful traction

- Oversharing proprietary insights that competitors or potential clients could use to reduce their need for Andrew’s services or replicate his innovations

- Getting pulled into content creation as a primary activity at the expense of the engineering and research work that generates the insights worth writing about — the blog should document the work, not replace it

- Negative reception or public technical criticism of shared approaches could damage credibility if not handled well, especially in the often-combative ML Twitter/X community

- Platform risk if relying on a single distribution channel (e.g., Substack algorithm changes, Twitter/X reach decay); need to own the email list and domain

📋 Requirements

- A publishing platform (Substack, Ghost, or self-hosted blog) with email newsletter capability — should be set up to own the subscriber list directly

- A content calendar with 8-12 planned post topics drawn from real production experiences, ensuring a sustainable pipeline before launch rather than improvising week-to-week

- Dedicated time allocation of approximately 8-15 hours per week for writing, editing, creating diagrams/benchmarks, and engaging with reader comments and social media distribution

- A distribution strategy beyond ‘publish and hope’ — this means active presence on Twitter/X, Hacker News, Reddit (r/MachineLearning, r/LocalLLaMA), LinkedIn, and potentially cross-posting to platforms like Medium or dev.to for initial reach

- Supporting visual assets: architecture diagrams, benchmark charts, code snippets with syntax highlighting, and potentially short video walkthroughs for complex topics — these dramatically increase shareability and perceived quality

- A clear editorial voice and positioning statement that differentiates from existing AI content — the ‘systems engineer who builds real AI platforms’ angle needs to be explicit and consistent
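The time figures quoted for this option can be checked against each other: 20-40 hours for a deeply researched post, a target cadence of 2-4 posts per month, and a budget of 8-15 hours per week. The sketch below does that arithmetic. It assumes every post is a deep post at the 20-40 hour cost and uses 52/12 weeks per month; shorter posts would change the picture, and the function names are illustrative.

```python
WEEKS_PER_MONTH = 52 / 12  # about 4.33


def monthly_hours(hours_per_week):
    """Convert a weekly time budget into hours per month."""
    return hours_per_week * WEEKS_PER_MONTH


def posts_per_month(hours_per_week, hours_per_post):
    """Number of posts a weekly budget supports at a given cost per post."""
    return monthly_hours(hours_per_week) / hours_per_post


# Monthly budget implied by 8-15 hours per week.
budget_low, budget_high = monthly_hours(8), monthly_hours(15)

# Fewest posts: smallest budget with the most expensive post; most posts: the reverse.
fewest = posts_per_month(8, 40)
most = posts_per_month(15, 20)

# Hours needed to sustain 2-4 posts per month at 20-40 hours per post.
needed_low, needed_high = 2 * 20, 4 * 40

if __name__ == "__main__":
    print(f"Budget: {budget_low:.0f}-{budget_high:.0f} hours/month")
    print(f"Supported deep posts: {fewest:.2f}-{most:.2f} per month")
    print(f"Needed for 2-4 posts: {needed_low}-{needed_high} hours/month")
```

Under these assumptions the budget is roughly 35-65 hours per month, which supports about 0.9 to 3.25 deep posts, while a cadence of 2-4 deep posts would need 40-160 hours. The two ranges overlap only at the low end, which suggests that the cadence would need to mix deep posts with shorter ones or that the weekly allocation would need to grow.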