A New Paradigm for LLM-Powered Development: Transparent, Extensible, and User-Controlled

Introduction

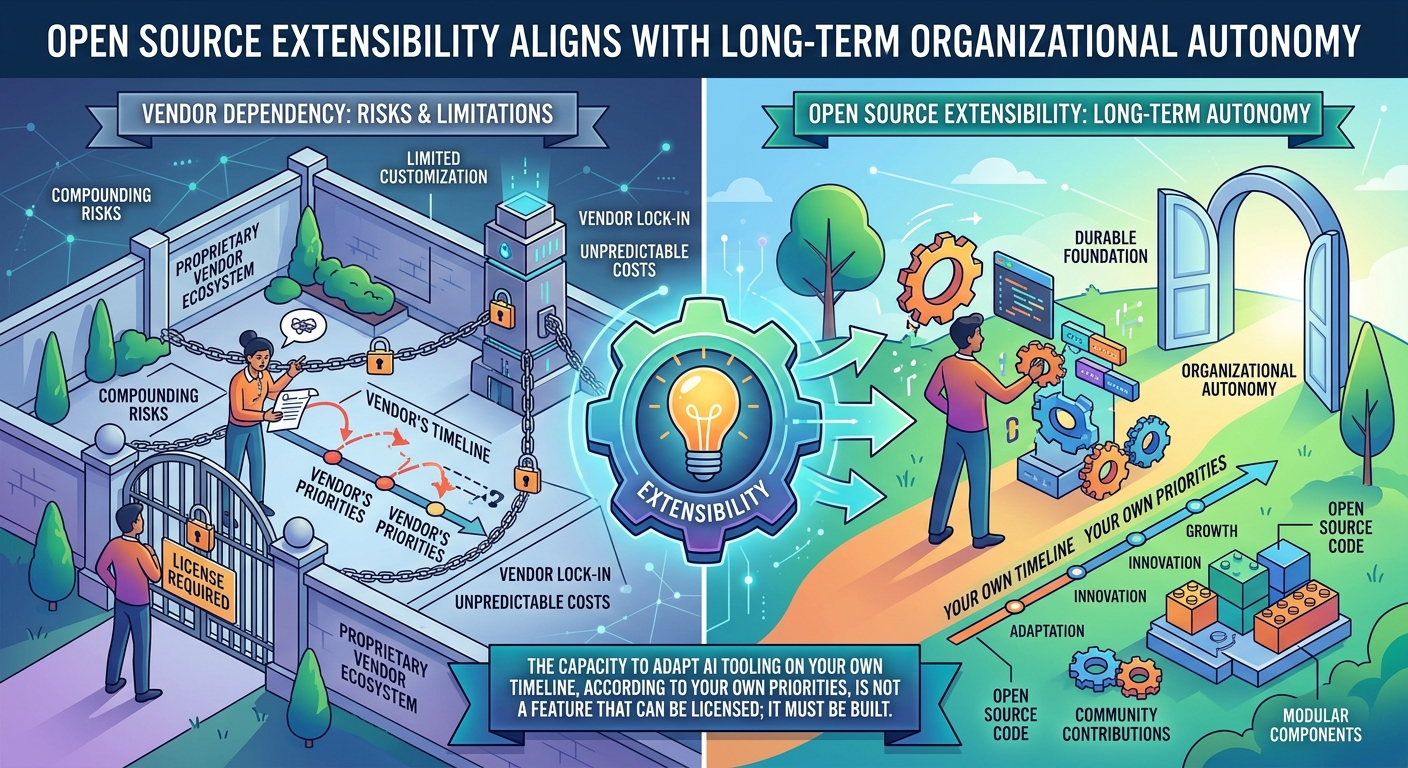

The landscape of artificial intelligence tooling has been dominated by chat-based interfaces and proprietary platforms that lock users into specific vendors, obscure their data, and extract value from their usage. A fundamentally different approach is needed—one that prioritizes user control, transparency, and the ability to adapt to evolving needs without vendor constraints. The question facing technology leaders today is not merely which platform offers the best features, but which platform’s trustworthiness can be verified rather than merely promised.

This document outlines a new product paradigm: a FOSS-based, file-centric application that leverages large language models not as conversational partners, but as powerful engines for structured logic, documentation generation, and code transformation. Built on a JVM backend with a JavaScript/TypeScript frontend, this platform is designed to be transparent, extensible, and reproducible—suitable for integration into modern CI/CD pipelines and collaborative development workflows.

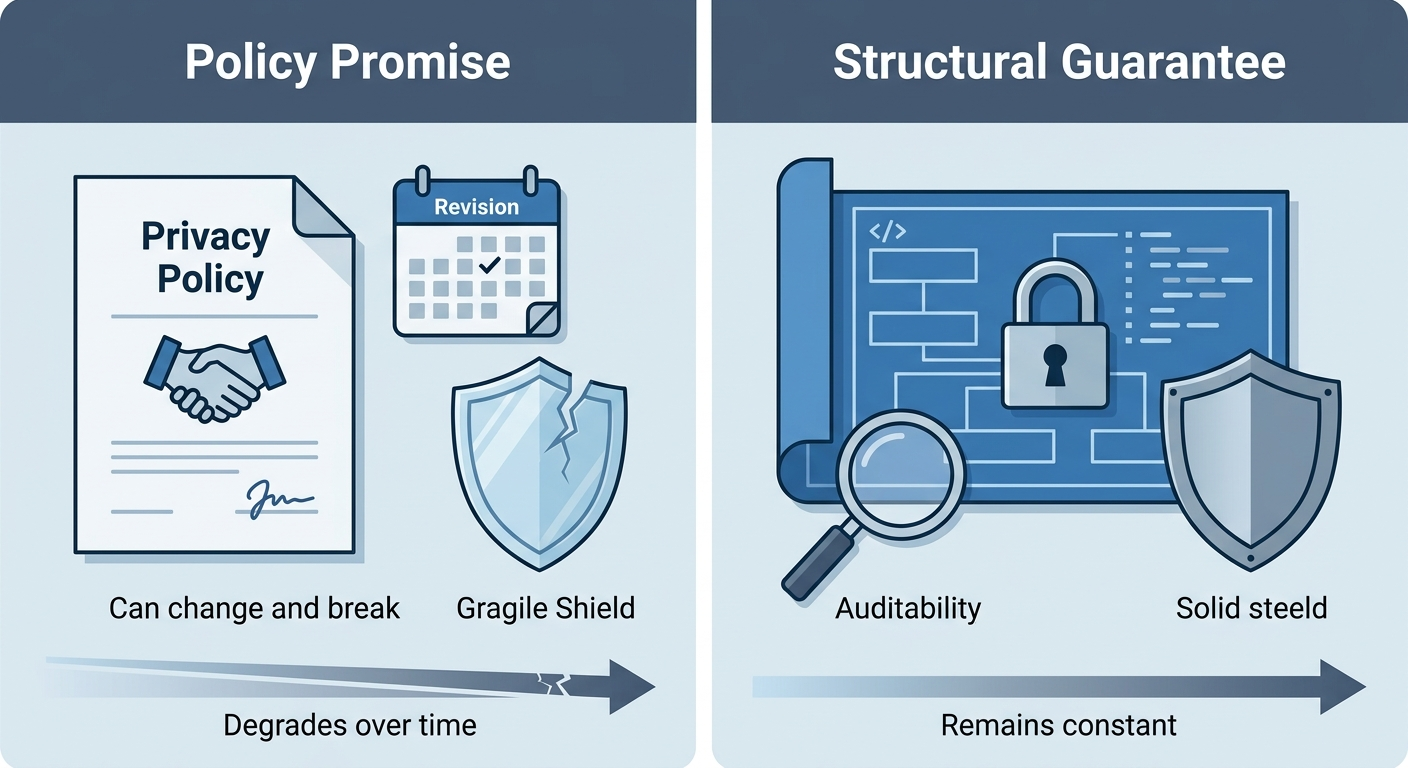

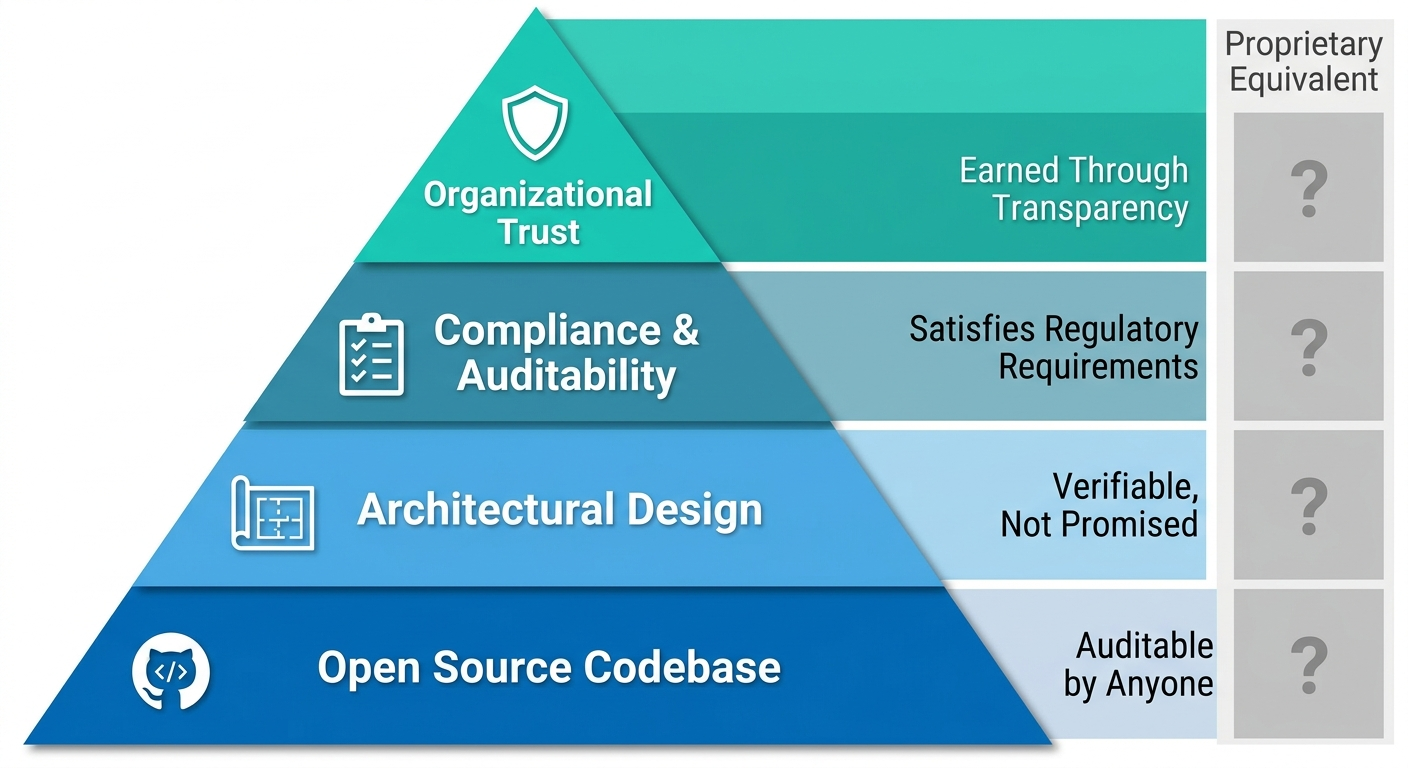

Understanding why this approach is superior requires confronting a genuine tension: proprietary platforms often deliver better short-term user experience, faster feature development, and professional security management. The case for a FOSS, file-centric, user-controlled platform is not that it is universally better—it is that it is structurally better aligned with the long-term interests of organizations that require governance, auditability, and independence from vendor incentive drift. This distinction matters, and the platform’s design reflects it at every level. The distinction between structural guarantees and policy promises is not academic. A privacy policy is a legal instrument subject to unilateral revision; an architecture is a verifiable fact. When an organization’s security officer asks “how do we know the vendor cannot see our prompts?”, the answer should not be “because they promised”—it should be “because the architecture makes it impossible, and here is the code that proves it.” This is the foundational insight that animates every design decision in this platform.

Core Value Proposition: User Control and Transparency

Bring Your Own Key (BYOK) and Provider Agnosticism

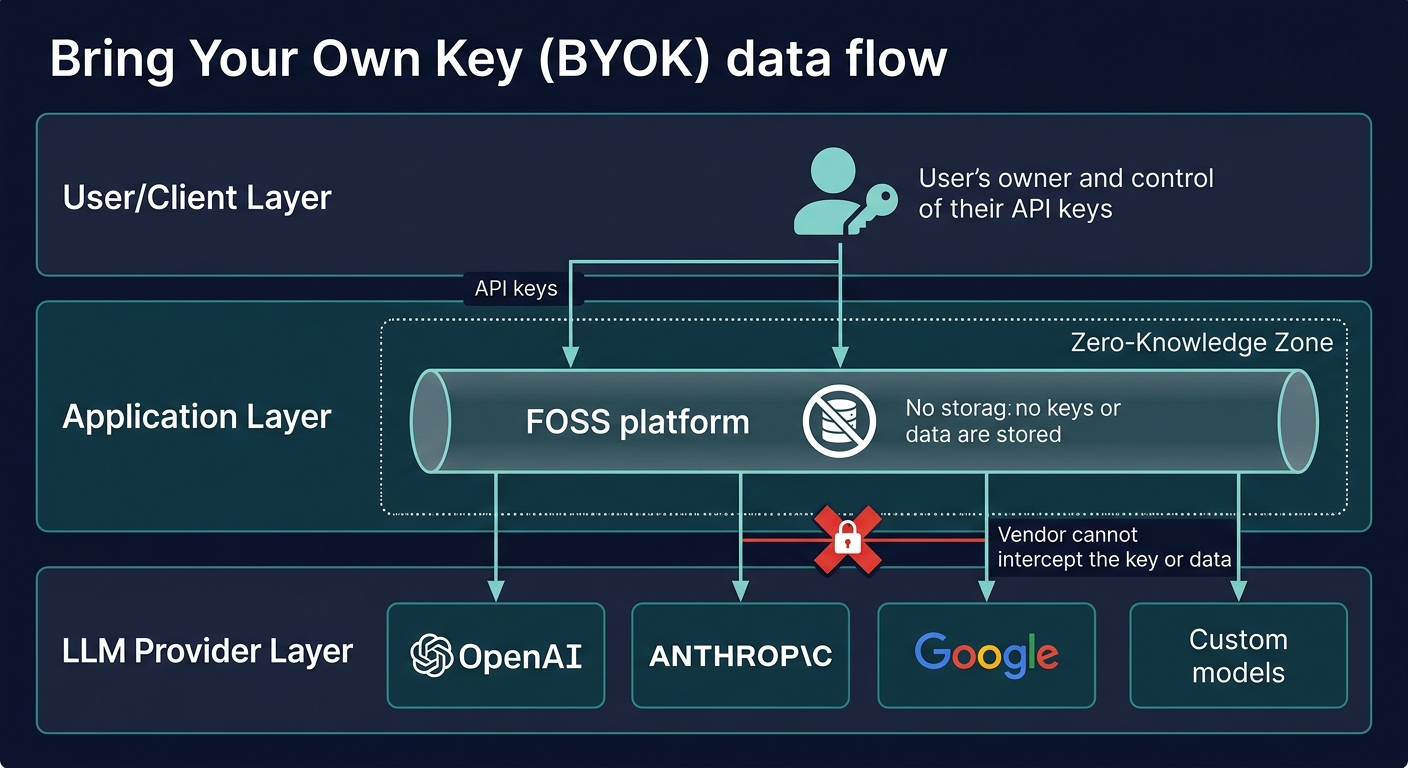

At the heart of this product lies a commitment to user sovereignty. The application operates on a Bring Your Own Key (BYOK) model, fundamentally inverting the traditional SaaS relationship:

-

Complete Key Ownership: Users retain absolute control over their LLM API keys. The application never stores, logs, or accesses plaintext keys. This architectural choice ensures that users maintain complete security and regulatory compliance, regardless of their industry or jurisdiction. Critically, this guarantee is enforced through zero-knowledge key handling in the frontend—keys are never held in memory longer than the duration of a single API call, and are never written to disk or transmitted to the application vendor.

-

Provider Agnosticism: Rather than locking users into a single LLM provider, the system is designed with abstracted core logic that enables seamless integration with multiple providers—OpenAI, Anthropic, Google, specialized open-source models, and future entrants to the market. This flexibility ensures that users can switch providers, negotiate better rates, or adopt emerging models without rebuilding their workflows. Between 2022 and 2025, the top-ranked model on major reasoning benchmarks changed hands repeatedly across competing providers. An organization whose AI workflows are architecturally bound to a single proprietary platform cannot respond to this volatility without incurring the full cost of platform migration—a cost that, in regulated environments, includes re-validation, re-certification, and potential regulatory notification. Provider agnosticism is not a convenience; it is a strategic necessity.

-

Straightforward Provider Integration: The architecture includes well-defined interfaces and integration points, making the addition of new LLM providers a straightforward engineering task. As the AI landscape evolves at a rapid pace, this design ensures the platform remains relevant and adaptable.

Cost and Privacy Assurance

Two critical guarantees underpin user trust:

-

No Cost Cut: The application takes no percentage or fee on the usage costs incurred by users with their chosen LLM provider. Users pay only for the compute they consume; the application vendor extracts no value from their spending. This alignment of incentives ensures that the platform’s success is tied to user productivity, not usage volume.

-

No Data Peeking: By architectural design, the application vendor cannot inspect the specific prompts, inputs, or outputs of a user’s LLM interactions. The application is a conduit, not a surveillance mechanism. This privacy guarantee is not a policy promise but a structural reality, enforced by the system’s design and verifiable through the open-source codebase.

Why Structural Guarantees Matter More Than Policy Promises

Proprietary platforms frequently offer privacy policies and compliance certifications as proxies for trust. These are meaningful, but they are contractual and legal constructs—they can change, they depend on vendor solvency, and they are difficult to verify independently. The BYOK architecture offers something categorically different: a structural guarantee that is visible in the code, auditable by any engineer, and immune to policy changes. When an organization’s security officer asks “how do we know the vendor cannot see our prompts?”, the answer is not “because they promised”—it is “because the architecture makes it impossible, and here is the code that proves it.”

This distinction becomes especially important as organizations grow and their LLM usage becomes more sensitive. Vendor incentives are not static: once an organization is deeply embedded in a proprietary platform, the vendor’s incentive to maintain strict privacy guarantees competes with incentives to improve their models, optimize their infrastructure, and grow their business. Structural guarantees do not drift with business priorities. The analogy to cryptographic proof versus contractual assurance is precise and instructive: no compliance officer accepts a vendor’s written promise as a substitute for encryption; the same logic must govern key custody and data access in AI deployments.

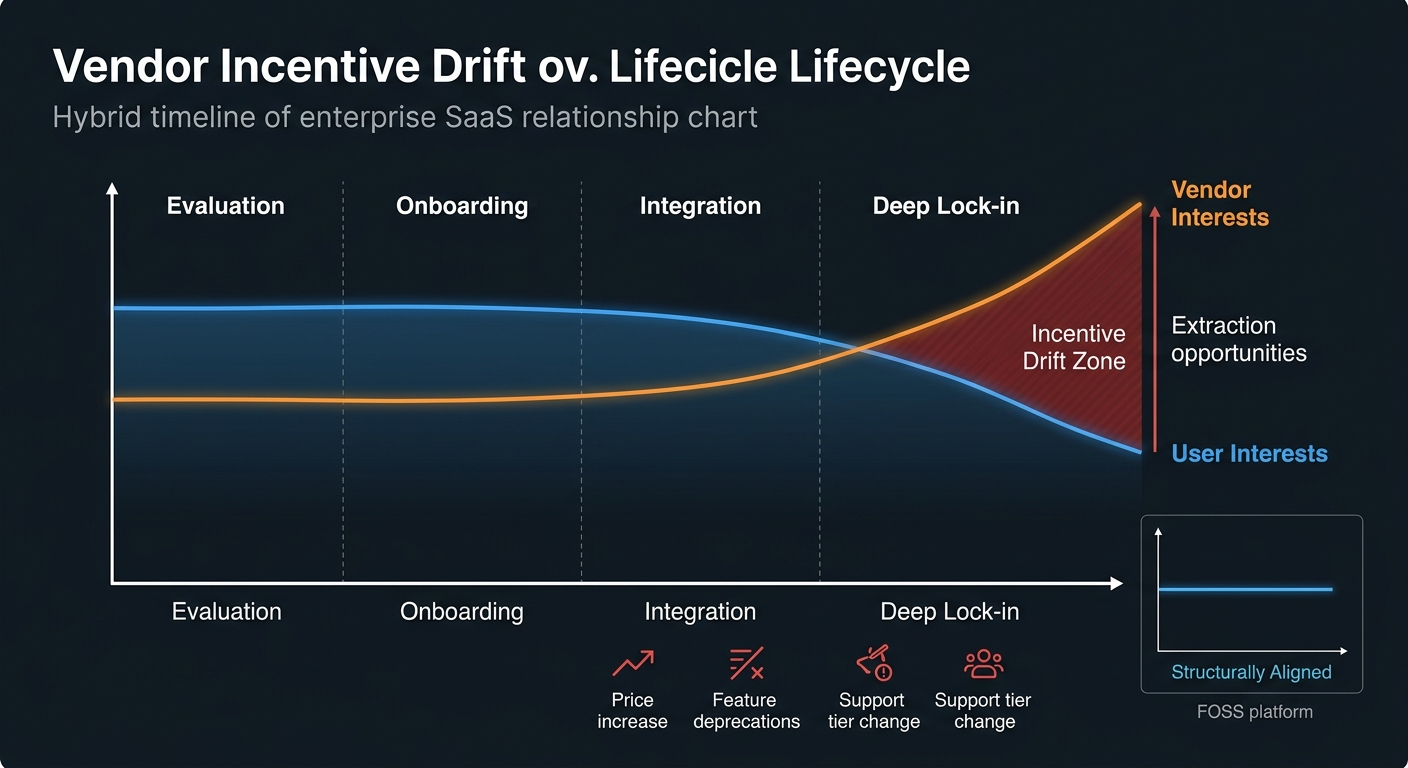

The Vendor Incentive Drift Problem

Enterprise SaaS history offers an instructive precedent for understanding why structural guarantees matter. Organizations that consolidated critical workflows within a single vendor’s ecosystem—whether in CRM, ERP, or cloud infrastructure—routinely discovered that exit costs escalated in direct proportion to integration depth, effectively transforming a vendor relationship into a structural constraint. Pricing changes, feature deprecations, support tier stratification, and ecosystem bundling are predictable outcomes of vendor economics—not exceptions.

This pattern is not malicious; it is structural. Proprietary vendors optimize for their own growth and profitability, which aligns with user interests during the sales and onboarding phase but can diverge significantly after lock-in occurs. A technology leader who selects a platform on the basis of today’s privacy policy is, in effect, delegating a long-term governance decision to a counterparty whose incentives will inevitably drift. The BYOK architecture ensures that this decision remains the organization’s to make—and, crucially, to revise.

Game-theoretic analysis of the competitive dynamics between proprietary and FOSS platforms reveals that this incentive drift is not a tail risk but a central tendency. In the short run, proprietary platforms may offer superior user experience and faster feature velocity. But the payoff structure is time-inconsistent: the same lock-in mechanisms that generate short-term convenience for users generate long-term extraction opportunities for vendors. The FOSS platform’s structural commitments—BYOK, open codebase, file-based state—function as credible pre-commitment devices that resolve this time-inconsistency problem by making vendor extraction architecturally impossible.

FOSS Core: Trust Through Transparency

Free and Open-Source Foundation

The core codebase is distributed under a permissive open-source license, enabling free use, modification, and distribution. This choice reflects a fundamental belief: that transparency builds trust, and that the best software emerges from communities of developers who can inspect, critique, and improve the code they depend on.

It is worth being precise about what FOSS does and does not provide. FOSS does not automatically guarantee security, reliability, or quality—those depend on execution. What FOSS provides is verifiability: the ability for any stakeholder to inspect the system’s behavior, identify discrepancies between claims and implementation, and act on that information. For organizations in regulated industries, this verifiability is not merely a philosophical preference—it is a compliance requirement. Auditors, security teams, and regulators increasingly require the ability to inspect the systems that process sensitive data, and a closed-source platform cannot satisfy that requirement regardless of its certifications. It is equally important to acknowledge what FOSS does not solve. FOSS does not automatically create motivation to exercise the control it provides. An organization can have full source code access, file-based workflows, and complete technical sovereignty—and still choose not to exercise it because the cognitive load exceeds the perceived risk, or because the team lacks the expertise to make meaningful use of the control. The value proposition of this platform is not that it forces organizations to exercise control, but that it makes control structurally available when organizations need it—and critically, that it provides a structural escape route when vendor incentives diverge from organizational interests. The cost of misalignment with a FOSS platform is technical and visible (you must maintain your own fork); the cost of misalignment with a proprietary platform is contractual and opaque (you must renegotiate from a position of weakness).

Training Data and Code Quality

A critical observation informs this approach: large language models have already been trained on vast amounts of public code, including open-source libraries and frameworks. This existing training provides an implicit baseline of robustness and quality recognition. When the application’s FOSS core is exposed to LLMs—whether for code generation, analysis, or transformation—the models already understand the patterns, conventions, and quality standards embedded in the codebase. This creates a virtuous cycle: open code is better understood by the AI tools that operate on it.

Community-Driven Development

The open-source foundation fosters a community-driven development model, where users, developers, and organizations can contribute improvements, report issues, and shape the product’s evolution. This approach is fundamentally more resilient and adaptive than closed, proprietary systems. Community-driven development also provides a structural hedge against a risk that is often underweighted in technology decisions: vendor incentive misalignment. Proprietary vendors optimize for their own growth and profitability, which aligns with user interests during the sales and onboarding phase but can diverge significantly after lock-in occurs. Pricing changes, feature deprecations, support tier stratification, and ecosystem bundling are predictable outcomes of vendor economics—not exceptions. FOSS does not eliminate this risk, but it changes its character: the cost of misalignment is technical and visible (you must maintain your own fork) rather than contractual and opaque (you must renegotiate from a position of weakness). For organizations with long institutional timescales and genuine governance requirements, this structural difference is material. A community-driven plugin ecosystem creates multiplicative value: rather than the core team building every possible integration, domain experts in healthcare, finance, legal, DevOps, and data science can build and maintain the tools their communities need. This distributed model of specialization is more resilient and more innovative than any centralized roadmap could be. The network effects of a FOSS ecosystem are generative—value flows to the ecosystem as a whole—whereas the network effects of proprietary platforms are extractive—value flows to the vendor. This divergence in long-run trajectories is a structural feature, not an accident.

Extensible Architecture: Building a Platform, Not a Monolith

Multiple Extensibility Points

The application is architected as a platform, not a monolithic tool. It provides clear, documented hooks and integration points throughout the system, enabling third-party developers to:

- Inject custom logic into workflow execution

- Create specialized UI components for domain-specific use cases

- Integrate with external systems and APIs

- Extend the plugin system with new capabilities

These extensibility points are not afterthoughts but core architectural features, designed from the ground up to enable safe, isolated customization.

Comprehensive Plugin System

A well-defined plugin system enables the packaging, distribution, and monetization of specialized features and domain-specific extensions. The plugin ecosystem is not merely a technical convenience—it is the primary mechanism by which the platform grows its value without growing its complexity. This system serves multiple purposes:

- Specialization Without Core Bloat: Advanced or niche functionality can be developed and distributed as plugins, keeping the core codebase lean and maintainable.

- Monetization Path: Organizations and developers can create and sell specialized plugins, providing a revenue model that does not compromise the FOSS nature of the core.

- Ecosystem Growth: A robust plugin marketplace enables a thriving ecosystem of third-party developers, each contributing specialized solutions to specific domains.

The plugin architecture is designed with security as a first-class concern. Plugins operate under a capability-based permission model: each plugin declares the resources it requires (file system access, network access, LLM API calls), and users explicitly grant or deny those permissions. Plugins are distributed with cryptographic signatures, and the runtime enforces sandboxing to prevent plugins from accessing resources beyond their declared scope. This design ensures that the extensibility of the platform does not become a supply chain vulnerability.

The plugin ecosystem also enables domain-specific workflow templates for vertical markets. Pre-built, community-contributed workflow templates for healthcare, finance, legal, and education can be file-based, version-controlled, and customizable while maintaining BYOK compatibility. Users can fork, modify, and share templates through a decentralized registry, reducing time-to-value for domain experts while maintaining the transparency and auditability that regulated industries require.

Complexity Management Through Isolation

Extensibility is not merely a feature; it is an architectural necessity. By allowing specific functionality to be offloaded into managed, isolated plugins, the complexity of the core codebase is controlled. This isolation provides several benefits:

- Stability: The core remains stable and well-tested, with fewer moving parts and dependencies.

- Maintainability: Developers can understand and modify the core without navigating a labyrinth of conditional logic and special cases.

- Scalability: As the platform grows and new use cases emerge, the plugin system enables growth without proportional increases in core complexity.

Technology Stack: JVM Backend, JavaScript/TypeScript Frontend

JVM-Based Backend

The backend is built on the Java Virtual Machine (via Kotlin or Java), a deliberate choice that prioritizes performance, stability, and concurrency:

- Robustness: The JVM has been battle-tested in production environments for decades, providing a stable foundation for complex logic and state management.

- Concurrency: The JVM’s threading model and ecosystem of concurrency libraries enable efficient handling of multiple concurrent workflows and LLM interactions.

- Performance: JVM-based languages compile to optimized bytecode, providing performance characteristics suitable for production workloads.

JavaScript/TypeScript Frontend

The client-facing layer uses modern web technologies—HTML, CSS, JavaScript, and TypeScript—for maximum portability and developer accessibility:

- Portability: Web-based frontends run on any device with a modern browser, eliminating platform-specific deployment challenges.

- Developer Accessibility: JavaScript and TypeScript are among the most widely known programming languages, with a vast ecosystem of libraries and tools.

- Rapid Development: The frontend development cycle is fast, enabling quick iteration and user feedback integration.

Frontend-Centric Development

A critical design principle: the vast majority of new feature development, customization, and user-facing innovation occurs strictly on the frontend. Users and developers working with the platform will primarily interact with HTML, Markdown rendering, and JavaScript/TypeScript logic. This approach:

- Lowers the Barrier to Entry: Developers do not need to understand JVM internals or backend architecture to build custom features.

- Accelerates Development: Frontend development cycles are faster than backend development, enabling quicker feature delivery.

- Minimizes Backend Complexity: By pushing logic to the frontend where possible, the backend remains focused on core concerns: state management, LLM orchestration, and file I/O.

This frontend-centric model has important implications for the plugin ecosystem: plugin developers work primarily in JavaScript and TypeScript, using the same tools and patterns as the core platform. This dramatically lowers the barrier to contribution and ensures that the plugin ecosystem can grow as fast as the community’s needs evolve.

Application Design Philosophy: Structured Logic, Not Chat

Rejection of Chat-Centric Design

The application deliberately rejects the general-purpose conversational paradigm that has dominated recent AI tooling. Chat interfaces are excellent for exploratory, open-ended interactions, but they are poorly suited for structured, reproducible workflows. Instead, this platform leverages LLMs as powerful engines for discrete logical operations within defined process flows. This is not a limitation—it is a deliberate design choice that reflects a clear understanding of where LLMs create durable value in organizational contexts. Conversational interfaces optimize for individual exploration; structured workflows optimize for organizational reliability. The former is valuable for discovery; the latter is essential for production. An organization that generates API documentation through a chat interface cannot audit that process, reproduce it in CI/CD, or verify that it meets compliance requirements. An organization that generates the same documentation through a structured, file-based workflow can do all three. Consider the analogy of financial ledger design. A double-entry bookkeeping system does not merely record transactions as a courtesy—its structure enforces accountability by making omissions architecturally visible. Chat-based LLM platforms, by contrast, resemble a verbal negotiation with no transcript: the conversation may have been consequential, but reconstruction is speculative at best. File-native workflows operate on an entirely different evidentiary logic, where every LLM interaction produces a discrete, addressable artifact that integrates naturally with version control, cryptographic hashing, and audit logging infrastructure.

Structured LLM Logic

LLMs are used not as conversational partners, but as components in a larger system:

- Data Transformation: LLMs transform unstructured or semi-structured data into structured formats suitable for downstream processing.

- Code Generation: LLMs generate code, documentation, and configuration files based on templates and input specifications.

- Logical Reasoning: LLMs perform multi-step reasoning tasks, breaking down complex problems into manageable steps.

- Content Analysis: LLMs analyze and extract information from documents, code, and other textual assets.

Graph-Based Orchestration

The underlying process orchestration resembles structured, multi-step execution graphs—similar to tools like LangGraph. In this model:

- Discrete Steps: Each step in the workflow is a discrete operation: an LLM call, a data transformation, a file I/O operation, or a conditional branch.

- Predictable Data Flow: The output of one step feeds predictably into the next, enabling deterministic execution and reproducible results.

- Visibility and Debugging: Because the workflow is structured and explicit, developers can easily understand, debug, and modify the logic.

Stateful, Use-Case-Specific Interface

The user interface is not a free-form chat window, but a stateful application built around specific, defined use cases:

- Documentation Generation: Guided workflows for generating API documentation, user guides, and release notes from source code and specifications.

- Code Auditing: Structured processes for analyzing code quality, security, and compliance against defined standards.

- Configuration Management: Workflows for generating and validating configuration files, infrastructure-as-code, and deployment specifications.

- Content Creation: Guided processes for creating blog posts, technical articles, and marketing content based on source materials.

Each use case is implemented as a distinct workflow, with a clear input specification, processing steps, and output format. Users navigate through the workflow, providing inputs and reviewing outputs at each stage.

Graduated Transparency and User Control

A key insight from operational experience is that different users and organizations need different levels of visibility and control. A developer building a new workflow needs to see every prompt, every intermediate output, and every configuration parameter. A compliance officer reviewing generated documentation needs a complete audit trail. A business analyst running a standard documentation workflow needs a clean, simplified interface that shows inputs and outputs without overwhelming detail. The platform addresses this through layered transparency: all state is always available in the underlying files, but the interface surfaces the level of detail appropriate to the current task and user. This is not about hiding information—it is about presenting information at the right level of abstraction for the task at hand. The underlying files remain fully accessible, fully auditable, and fully portable regardless of which interface layer the user is working in.

File-Based State Management: Transparency and Interoperability

Human-Readable, Easily Parseable Formats

All application state, configurations, and workflow definitions are stored in human-readable, easily parseable formats:

- JSON: For structured data and configuration files

- YAML: For human-friendly configuration and specification files

- Markdown: For documentation and narrative content

- Plain Text: For logs, notes, and other textual assets

This choice prioritizes transparency and interoperability over the performance or feature richness of proprietary database systems.

JavaScript-Writable State

The frontend can directly manipulate application state by writing file contents. This capability enables:

- Dynamic Configuration: Users can modify workflow definitions, templates, and configurations through the UI, with changes immediately persisted to files.

- Programmatic Automation: External scripts and tools can generate or modify application state by writing files, enabling integration with CI/CD pipelines and other automation systems.

- Transparency: Because state is stored in files, users can inspect and understand the application’s internal state simply by browsing the project directory.

Git Integration

Because the state is managed in files, it is inherently compatible with Git version control:

- Auditable History: All changes to application state, configurations, and generated outputs are tracked in Git, providing a complete audit trail.

- Branching and Merging: Users can create branches to experiment with different configurations or workflows, then merge successful changes back to the main branch.

- Collaborative Development: Multiple team members can work on the same project, with Git handling conflict resolution and change coordination.

- Reproducibility: By checking out a specific Git commit, users can reproduce the exact state of the application and its outputs at any point in time.

Git integration also provides a natural mechanism for workflow governance in team environments. Changes to workflow definitions, prompt templates, and configuration files go through the same review process as code changes—pull requests, code review, and merge controls. This means that the governance of AI-powered workflows is not a separate, specialized process but an extension of the engineering practices teams already use.

Zip Compatibility

Projects and workflows can be easily bundled and shared as standard zip archives:

- Portability: A complete project, including all configurations, templates, and generated outputs, can be packaged as a single zip file and shared via email, cloud storage, or other distribution mechanisms.

- Backup and Archival: Projects can be archived as zip files for long-term storage and compliance purposes.

- Distribution: Organizations can distribute standardized project templates and workflows as zip files, enabling rapid onboarding and consistency across teams.

Transparent and Understandable

The file-based nature of the application means that all data is visible and comprehensible simply by browsing the project directory. There are no “magic” internal databases, no opaque serialization formats, and no hidden state. This transparency provides several benefits:

- Debugging: When something goes wrong, developers can inspect the files directly to understand the application’s state and identify issues.

- Customization: Users can modify files directly, without needing to understand the application’s internal APIs or data structures.

- Integration: External tools and scripts can read and write application files, enabling seamless integration with existing development workflows.

Security Considerations for File-Based State

File-based transparency creates responsibilities as well as benefits. Because workflow definitions, prompt templates, and configuration files are human-readable and stored in the project directory, they can be accidentally committed to version control repositories, shared in zip archives, or exposed through misconfigured file permissions. The platform addresses this through several mechanisms: mandatory patterns for excluding sensitive configuration from version control, pre-commit hooks that scan for credentials and API keys, and clear documentation of which files contain sensitive information. The goal is to make the secure path the easy path—developers should not need to think carefully about what to exclude from commits, because the tooling handles it automatically.

DocOps File Focus: Transparent, Persistent, Reproducible

The application is laser-focused on documentation and operational files, prioritizing three key qualities:

Transparent

Documentation assets and the application logic that generates them must be fully visible and auditable. This means:

- Source Visibility: The prompts, templates, and configurations used to generate documentation are stored as readable files, not hidden in a proprietary database.

- Output Visibility: Generated documentation is stored as standard files (Markdown, HTML, etc.), not locked in a proprietary format.

- Logic Visibility: The workflows and processes that generate documentation are explicit and inspectable, enabling users to understand and modify them.

This transparency aligns with the file-based state management principle and enables users to audit the documentation generation process for accuracy and compliance.

Persistent

Generated outputs—documentation, configuration files, reports—are durable, long-term assets within the project repository. They are not ephemeral artifacts that disappear after a session, but persistent files that:

- Become Part of the Project: Generated documentation is committed to the project repository, becoming part of the official project assets.

- Enable Collaboration: Because documentation is persistent and version-controlled, team members can review, comment on, and improve it over time.

- Support Compliance: Persistent documentation provides evidence of project decisions, configurations, and processes, supporting compliance and audit requirements.

Reproducible

Given the same set of inputs—source code, configuration, prompts, and LLM provider—the application generates consistent, verifiable documentation outputs. This reproducibility ensures:

- Quality Control: Documentation can be regenerated and compared against previous versions to ensure consistency and quality.

- CI/CD Integration: Documentation generation can be integrated into continuous integration pipelines, with automated checks ensuring that documentation is up-to-date and accurate.

- Deterministic Builds: Projects can be built and deployed with confidence that documentation will be generated consistently across environments and time.

It is important to be precise about what reproducibility means in the context of LLM-powered workflows. LLM outputs are probabilistic, not deterministic—the same prompt sent to the same model may produce slightly different outputs on different runs. The platform addresses this through model version pinning (workflows specify the exact model version they use), temperature controls (workflows can set temperature to zero for maximum consistency), and output versioning (generated files are tagged with the model version and timestamp used to produce them). When a workflow is run in CI/CD, the system can detect if the model version has changed and flag the output for human review. This approach achieves practical reproducibility—the same workflow produces outputs that are semantically equivalent and structurally consistent—while being honest about the probabilistic nature of the underlying technology. It is worth distinguishing between deterministic process and deterministic output. The platform guarantees the former: given the same workflow definition, the same inputs, and the same model version, the same sequence of operations will execute in the same order. The latter—identical text output on every run—is constrained by the probabilistic nature of LLMs, even at temperature zero. For compliance-critical outputs, the platform supports human review and sign-off workflows rather than relying solely on reproducibility, acknowledging that practical reproducibility and absolute determinism are different guarantees with different appropriate use cases.

Enterprise Readiness and Compliance

Designed for Regulated Industries

The platform’s architecture was designed with regulated industries in mind from the outset. Healthcare organizations subject to HIPAA, financial institutions subject to SOX and PCI-DSS, and government contractors subject to FedRAMP requirements all share a common need: the ability to demonstrate, through auditable evidence, that their systems handle sensitive data appropriately. The BYOK model, file-based audit trails, and FOSS codebase together provide the foundation for this demonstration. Specifically:

- HIPAA: The BYOK architecture means the platform vendor is not a Business Associate under HIPAA—the organization’s LLM provider relationship is direct, and the platform never handles protected health information on the vendor’s behalf.

- GDPR: File-based state management enables organizations to implement data residency requirements, right-to-erasure workflows, and processing records that satisfy GDPR Article 5 transparency requirements.

- SOC 2: The combination of FOSS codebase (enabling independent verification), file-based audit logs (enabling complete audit trails), and Git integration (enabling change management evidence) supports SOC 2 Type II audit requirements.

In healthcare environments governed by HIPAA’s audit control requirements under 45 C.F.R. § 164.312(b), the distinction between file-centric and chat-based architectures is not procedural—it is existential. Covered entities must implement mechanisms to record and examine activity in systems containing protected health information. A file-centric platform satisfies that requirement structurally, while a chat-based system demands costly compensating controls that may still fail regulatory scrutiny. The regulatory trajectory across every major compliance regime is unambiguous: assertions are giving way to verifiable proof as the operative standard.

Operational Security

Enterprise deployments require more than architectural soundness—they require operational security practices that are documented, testable, and maintainable. The platform provides:

- Secrets Management Integration: Support for enterprise secrets management systems (HashiCorp Vault, AWS Secrets Manager, Azure Key Vault) as alternatives to environment variable-based key injection, enabling key rotation without application restart.

- Audit Logging: Structured, tamper-evident logs of all workflow executions, LLM API calls, and file modifications, suitable for ingestion into enterprise SIEM systems.

- Access Controls: File-system-level access controls that integrate with existing organizational identity and access management systems.

- Dependency Scanning: Automated scanning of the platform and its plugins for known vulnerabilities, with clear processes for security updates.

Observability and Monitoring

Production deployments require comprehensive observability. The platform supports structured logging in JSON format for all workflow execution, with separate log streams for API calls, state changes, and errors. Metrics cover workflow execution time, success and failure rates, LLM API latency and token usage, and file I/O performance. The platform adopts the OpenTelemetry standard for instrumentation, enabling pluggable exporters for various monitoring backends without requiring vendor-specific integration. Audit logging for compliance is maintained separately from operational logging, with tamper-evident integrity protection suitable for regulatory examination.

Strategic Positioning and Market Context

Who This Platform Serves

The platform’s value proposition is strongest for organizations where governance, auditability, and long-term institutional continuity outweigh short-term ease-of-use and rapid capability iteration. This includes:

- Regulated industries (healthcare, finance, government, defense) where compliance requirements mandate verifiable data handling and auditable AI workflows

- Security-conscious enterprises where data sovereignty and vendor independence are institutional requirements, not preferences

- Organizations with long institutional timescales (universities, government agencies, financial institutions) where technology decisions must remain viable across decades, not product cycles

- Teams with sufficient technical capacity to exercise meaningful control over their AI infrastructure

The platform is not positioned as a universal replacement for chat-based AI tools. For exploratory, open-ended interactions where conversational fluidity matters more than auditability, chat interfaces remain appropriate. The platform addresses a different need: production-grade, reproducible, auditable AI workflows that can be governed with the same rigor applied to source code and infrastructure.

The Honest Trade-offs

Intellectual honesty requires acknowledging what this platform asks of its users. The BYOK model requires organizations to manage their own API keys, including secure storage, rotation, and access control. File-based state management requires familiarity with Git workflows and file formats. Structured workflows require learning a different interaction paradigm than the chat-based tools that have become familiar. Self-hosted deployment requires infrastructure expertise. These are real costs. For organizations whose primary criteria are ease of adoption and rapid feature access, proprietary solutions may be appropriate. The argument advanced here is not that this platform is universally preferable, but that it is structurally superior for organizations operating under specific governance mandates. A more intuitive interface does not render an unverifiable audit trail defensible. When a compliance officer must demonstrate data lineage to a regulator, or when a board demands accountability for sensitive model interactions, the relevant standard is not user satisfaction—it is verifiability. The platform mitigates these costs through graduated transparency (simplified interfaces for routine tasks, full detail for governance and debugging), comprehensive documentation, pre-built workflow templates for common use cases, and a plugin ecosystem that enables domain experts to build accessible tools on top of the transparent foundation.

Conclusion: A New Standard for LLM-Powered Development

This product represents a fundamental shift in how organizations approach LLM-powered development tools. By prioritizing user control, transparency, and extensibility, it addresses the core concerns that have limited adoption of AI tooling in regulated industries and security-conscious organizations.

The combination of BYOK architecture, FOSS core, file-based state management, and structured workflow design creates a platform that is:

- Trustworthy: Users maintain complete control over their data and costs, with no vendor lock-in or hidden data access.

- Transparent: All application state and logic is visible and auditable, enabling users to understand and customize the system.

- Extensible: A robust plugin system enables specialization and monetization without compromising the FOSS core.

- Reproducible: Deterministic outputs and file-based state management enable integration into CI/CD pipelines and collaborative workflows.

- Accessible: Frontend-centric development and modern web technologies lower the barrier to entry for developers and users.

As organizations increasingly adopt LLMs for critical business processes, this platform provides a foundation that aligns with principles of transparency, user control, and long-term sustainability. It is not a chat interface, but a structured, extensible platform for building reproducible, auditable workflows that leverage the power of LLMs while maintaining the transparency and control that modern development practices demand.

The choice between this platform and proprietary alternatives is ultimately a question about organizational values and time horizons. For organizations that prioritize short-term convenience and are comfortable delegating control to vendors, proprietary platforms may serve well—at least until vendor incentives shift. For organizations that require long-term independence, auditable processes, and structural privacy guarantees, this platform provides something that no proprietary alternative can: a foundation whose trustworthiness can be verified, not merely promised.

Platform selection, properly understood, is a fiduciary act: it encodes institutional values and risk tolerance into infrastructure that will shape organizational capability for years. Consider the moment when an auditor asks your security officer to demonstrate—not assert—that your organization’s AI infrastructure handled sensitive data in full compliance with its obligations. The organization built on open, verifiable architecture opens the codebase and shows its work. The organization built on proprietary promises hands over a vendor’s PDF. That difference is not a technical detail. It is the difference between governance and faith.

Brainstorming Session Transcript

Input Files: content.md, ops/brainstorm_op.md

Problem Statement: Based on the core concepts of a transparent, extensible, FOSS-based LLM-powered development platform with BYOK architecture, file-centric state management, and structured workflow design, what are innovative extensions, applications, integrations, and use cases that could expand the platform’s value proposition and market reach?

Started: 2026-04-06 11:54:35

Generated Options

1. Domain-Specific Workflow Templates Library

Category: Domain-Specific Extensions

Create pre-built, community-contributed workflow templates for vertical markets (healthcare, finance, legal, education). Each template would be file-based, version-controlled, and customizable while maintaining BYOK compatibility. Users could fork, modify, and share templates through a decentralized registry, reducing time-to-value for domain experts.

2. Git-Native State Synchronization Engine

Category: Integration Opportunities

Develop a bidirectional sync layer that treats Git repositories as the source of truth for workflow state, enabling seamless integration with existing development pipelines. This allows teams to manage LLM-powered workflows alongside code, with full audit trails and collaborative branching capabilities.

3. Federated Model Marketplace with Revenue Sharing

Category: Business Model Innovations

Build a decentralized marketplace where users can publish optimized, fine-tuned LLM models compatible with the platform’s BYOK architecture. Implement transparent revenue-sharing mechanisms using smart contracts or blockchain-based attribution, enabling creators to monetize specialized models while maintaining FOSS principles.

4. Visual Workflow Composition Canvas

Category: User Experience Enhancements

Create an intuitive, drag-and-drop visual editor that generates structured workflow definitions as files, lowering the barrier to entry for non-technical users. The canvas would maintain full transparency by displaying the underlying file structure in real-time, bridging visual and code-based paradigms.

5. Community-Driven Plugin Ecosystem

Category: Ecosystem and Community

Establish a formal plugin architecture with standardized interfaces for extending platform capabilities, coupled with a curated registry and contribution guidelines. Plugins would be distributed as FOSS packages with clear dependency management, enabling the community to build domain-specific extensions without forking the core platform.

6. Enterprise Audit and Compliance Framework

Category: Enterprise and Compliance

Develop a comprehensive compliance module supporting SOC 2, HIPAA, GDPR, and other regulatory requirements through file-based audit logs, encryption policies, and access controls. The framework would maintain transparency while enabling enterprises to meet governance requirements without vendor lock-in.

7. Multi-Modal LLM Integration Layer

Category: Emerging Technology Integration

Extend the platform to support vision, audio, and document-processing LLMs alongside text models, with structured workflows for multi-modal tasks. File-based state management would handle diverse input/output types while maintaining the BYOK principle across different model providers.

8. Intelligent Workflow Optimization Engine

Category: Workflow Automation

Build an analytics and optimization system that analyzes workflow execution patterns to suggest improvements, cost reductions, and performance enhancements. The engine would provide actionable insights while respecting user privacy by operating entirely on local file-based data without external telemetry.

9. Cross-Platform Mobile Workflow Executor

Category: User Experience Enhancements

Develop native mobile applications (iOS/Android) that execute structured workflows defined on the desktop platform, with offline-first capabilities and file synchronization. This extends the platform’s reach to field workers and mobile-first teams while maintaining the file-centric architecture.

10. Collaborative Workflow Versioning System

Category: Workflow Automation

Implement a sophisticated version control system specifically designed for workflows, enabling teams to branch, merge, and review workflow changes with conflict resolution. This would leverage the file-based state management to provide Git-like collaboration for non-developers.

11. Industry-Specific Certification Program

Category: Ecosystem and Community

Create a formal certification and training program for platform expertise across different domains, with community-led curriculum development and recognized credentials. This builds a skilled practitioner ecosystem while generating sustainable revenue through optional premium training materials.

12. Real-Time Workflow Monitoring Dashboard

Category: Enterprise and Compliance

Build a lightweight, self-hosted monitoring solution that provides real-time visibility into workflow execution, resource usage, and LLM API costs across all BYOK providers. The dashboard would aggregate metrics from file-based logs without requiring external services, enabling cost optimization and performance tracking.

Option 1 Analysis: Domain-Specific Workflow Templates Library

✅ Pros

- Dramatically reduces time-to-value for domain experts by providing pre-configured, battle-tested workflows rather than starting from scratch, directly addressing vertical market adoption barriers

- Aligns perfectly with FOSS principles through community contribution model and decentralized registry, enabling organic ecosystem growth while maintaining user control and transparency

- File-based templates enable version control, auditability, and compliance documentation—critical for regulated industries (healthcare, finance, legal) where workflow provenance is legally required

- Creates network effects and lock-in through community investment; users who contribute templates become platform advocates, and template dependencies create ecosystem stickiness

- Enables monetization opportunities (premium templates, certification programs, support services) without compromising FOSS core, supporting sustainable platform development

❌ Cons

- Quality control and maintenance burden: community-contributed templates may contain errors, security vulnerabilities, or outdated practices, requiring governance infrastructure and moderation resources

- Domain expertise requirement creates high barrier to entry for template creation; healthcare/finance/legal workflows require specialized knowledge, limiting contributor pool and template diversity

- Risk of template fragmentation and incompatibility as contributors modify templates independently, potentially creating confusion and reducing discoverability in a crowded registry

- Regulatory compliance complexity: templates for regulated industries (healthcare HIPAA, finance SOX, legal privilege) may inadvertently create liability if they don’t meet evolving compliance requirements

- Dependency management challenges: templates may depend on specific LLM capabilities, API versions, or external services that change, causing templates to break without active maintenance

📊 Feasibility

High feasibility with moderate resource investment. The technical foundation is straightforward—file-based templates, version control, and registry infrastructure align with existing platform architecture. However, success requires sustained community management and domain expert engagement. Implementation is realistic within 2-3 quarters with a dedicated team of 2-3 people for registry/governance plus community outreach. The main challenge is bootstrapping initial high-quality templates and establishing contributor incentives, not technical complexity.

💥 Impact

Expected to significantly expand addressable market by making the platform accessible to non-technical domain experts in vertical markets. Anticipated outcomes: 30-50% reduction in onboarding time for domain-specific use cases, 2-3x increase in adoption within healthcare/finance/legal sectors, emergence of a self-sustaining template ecosystem reducing platform maintenance burden. Secondary effects include increased platform visibility through template discovery, creation of community-driven innovation feedback loops, and establishment of the platform as the standard for structured workflow automation in regulated industries.

⚠️ Risks

- Regulatory liability exposure: if a healthcare template fails to maintain HIPAA compliance or a legal template misses privilege requirements, the platform could face liability claims despite FOSS disclaimers, requiring legal review infrastructure

- Template quality degradation over time: as LLM models update or APIs change, unmaintained templates become broken, creating negative user experience and eroding trust in the registry ecosystem

- Vendor lock-in perception: if templates become tightly coupled to specific LLM providers or proprietary extensions, the BYOK philosophy is undermined, contradicting core platform values and alienating users

- Community fragmentation: competing template registries or forks could emerge if governance is perceived as unfair, splitting the ecosystem and reducing network effects that justify the investment

- Security supply chain risk: malicious or compromised templates could be contributed to the registry, potentially exposing users to prompt injection, data exfiltration, or workflow manipulation attacks

📋 Requirements

- Decentralized registry infrastructure: Git-based or blockchain-backed registry system supporting forking, versioning, and discovery; consider leveraging existing platforms (GitHub, GitLab) or building custom registry with search/filtering capabilities

- Template specification standard: formal schema defining template structure, metadata (domain, compliance level, LLM requirements), dependencies, and validation rules to ensure consistency and interoperability

- Community governance framework: clear contribution guidelines, code of conduct, review process, and maintainer roles; establish domain expert advisory boards for healthcare/finance/legal to validate compliance and quality

- Security and compliance review process: automated scanning for common vulnerabilities, manual review by domain experts for regulatory compliance, and clear liability disclaimers; consider third-party audit partnerships for regulated domains

- Developer documentation and tooling: template creation guides, CLI tools for template validation/testing, integration examples, and migration utilities; invest in onboarding content for domain experts unfamiliar with FOSS workflows

- Community incentive program: recognition systems (badges, leaderboards), potential revenue-sharing for premium templates, or certification programs to motivate high-quality contributions and sustained maintenance

Option 2 Analysis: Git-Native State Synchronization Engine

✅ Pros

- Eliminates workflow state fragmentation by leveraging Git as a familiar, universally-adopted source of truth that developers already use daily, reducing cognitive overhead and tool sprawl

- Enables full audit trails and version history for workflow decisions and LLM interactions through Git’s native commit history, providing compliance-ready documentation and accountability

- Facilitates seamless team collaboration through Git’s branching, merging, and conflict resolution mechanisms, allowing parallel workflow development and experimentation without blocking main pipelines

- Dramatically lowers adoption friction by integrating into existing CI/CD pipelines and development workflows rather than requiring teams to adopt yet another platform or state management system

- Maintains FOSS principles and user control by keeping all workflow state in user-owned repositories with transparent, inspectable file formats that can be version-controlled and audited

❌ Cons

- Git’s eventual consistency model and merge conflict resolution may not suit real-time collaborative workflow execution, potentially causing synchronization delays or state inconsistencies during concurrent operations

- Treating Git as workflow state storage conflates code artifacts with operational state, potentially cluttering repositories with transient workflow metadata and creating maintenance burden for teams

- Requires developers to understand both Git workflows and LLM-powered workflow semantics, creating a steeper learning curve than isolated workflow management tools

- Git’s text-based diff/merge paradigm may struggle with complex, structured workflow state (nested objects, conditional branches, dynamic parameters), leading to difficult merge conflicts

- Performance implications of frequent Git operations (commits, pushes, pulls) for high-frequency workflow state updates could introduce latency and scalability constraints

📊 Feasibility

High feasibility with moderate complexity. Git integration is technically straightforward using existing libraries (GitPython, libgit2, etc.), and the file-centric state management already aligns with Git’s model. However, designing robust bidirectional sync logic that handles edge cases (concurrent edits, network failures, merge conflicts) requires careful engineering. Resource requirements are moderate—primarily backend development for sync logic and frontend updates for Git-aware UI components. Organizational feasibility is strong given the FOSS community’s Git familiarity.

💥 Impact

Expected to significantly expand market reach by reducing adoption barriers for development teams already embedded in Git-based workflows. Would enable the platform to position itself as a native extension of existing development infrastructure rather than a separate tool. Could drive adoption in enterprise environments where Git governance and audit trails are mandatory. Would likely increase workflow reproducibility and team collaboration effectiveness. May establish the platform as a bridge between LLM capabilities and traditional DevOps practices, opening new market segments.

⚠️ Risks

- Merge conflicts in workflow state files could become a critical blocker if not handled gracefully, potentially frustrating users and creating support burden

- Tight coupling to Git could limit future flexibility if the platform needs to support non-Git version control systems or hybrid state management approaches

- Security risks if sensitive LLM prompts, API keys, or model configurations are accidentally committed to Git history, requiring robust .gitignore patterns and user education

- Repository bloat from frequent workflow state commits could degrade Git performance and increase storage costs, especially for high-volume workflow execution

- Dependency on Git availability and network connectivity could create operational fragility if Git services are unavailable or if users work in offline environments

📋 Requirements

- Backend development expertise in Git operations, bidirectional synchronization patterns, and conflict resolution algorithms

- Clear specification of workflow state serialization format that is both Git-friendly (text-based, diff-able) and semantically rich enough to capture complex workflow structures

- Frontend UI components that visualize Git-based workflow history, branching, and merge states in an intuitive way for non-Git-expert users

- Comprehensive testing framework covering edge cases: concurrent edits, network failures, large-scale state changes, and complex merge scenarios

- Documentation and user education materials explaining Git-workflow integration, best practices for repository organization, and conflict resolution strategies

- Integration with popular Git hosting platforms (GitHub, GitLab, Gitea) and CI/CD systems (GitHub Actions, GitLab CI, Jenkins) to maximize ecosystem compatibility

Option 3 Analysis: Federated Model Marketplace with Revenue Sharing

✅ Pros

- Creates economic incentives for community-driven model optimization and specialization, expanding the platform’s model ecosystem without direct development costs

- Enables domain-specific model variants (legal, medical, financial, etc.) to emerge organically, increasing platform applicability across industries

- Transparent revenue-sharing mechanisms build trust and demonstrate commitment to creator compensation, differentiating from proprietary platforms

- File-based model distribution aligns naturally with BYOK architecture, allowing users to audit, modify, and control model provenance

- Structured workflow integration enables reproducible model evaluation and benchmarking, supporting quality assurance and discovery

❌ Cons

- Blockchain/smart contract implementation introduces complexity and potential regulatory ambiguity that conflicts with FOSS simplicity principles

- Revenue-sharing mechanisms create legal and tax compliance challenges across jurisdictions, requiring significant administrative overhead

- Quality control becomes difficult at scale; marketplace could become flooded with low-quality or duplicative models, degrading user experience

- Monetization layer may fragment the FOSS community by creating incentives for proprietary model variants or closed-source optimizations

- Model licensing and attribution tracking through blockchain adds technical debt and potential performance overhead to the platform

📊 Feasibility

Moderate feasibility with significant caveats. The technical infrastructure for model distribution via file-based systems is straightforward, but the revenue-sharing and blockchain components introduce substantial complexity. Implementation would require 6-12 months for MVP, including legal framework development, smart contract auditing, and marketplace UI. Resource requirements are substantial (legal, blockchain engineers, marketplace infrastructure). Success depends on resolving FOSS-monetization tensions early.

💥 Impact

If successful, could establish the platform as a hub for specialized LLM variants, attracting both model creators and enterprises seeking domain-specific solutions. Expected outcomes: 20-40% increase in model ecosystem diversity within 18 months, emergence of 3-5 dominant model categories, and potential $100K-$500K annual creator earnings (depending on adoption). However, impact could be negative if quality control fails or if monetization mechanisms alienate core FOSS contributors.

⚠️ Risks

- Regulatory scrutiny on cryptocurrency/blockchain components could force redesign or geographic restrictions, limiting market reach

- Revenue-sharing disputes and attribution conflicts could generate legal liability and community backlash, damaging platform reputation

- Model poisoning attacks or malicious model uploads could compromise platform integrity if quality gates are insufficient

- Blockchain transaction costs and latency could make micropayments economically unviable, requiring minimum payment thresholds that exclude small creators

- Centralization risk: marketplace governance could become contentious, with disputes over model removal, revenue allocation, or platform policies

📋 Requirements

- Legal expertise in FOSS licensing, cryptocurrency regulation, and international tax/payment frameworks to establish compliant revenue-sharing

- Blockchain engineers experienced in smart contract development, auditing, and gas optimization for cost-effective transactions

- Model versioning and metadata standards (compatible with file-based state management) to enable transparent attribution and dependency tracking

- Marketplace infrastructure: discovery UI, model validation pipeline, automated benchmarking workflows, and payment processing integration

- Community governance framework defining model quality standards, dispute resolution, and revenue-sharing policies before launch

- Frontend development resources to build intuitive model browsing, installation, and version management interfaces aligned with platform design philosophy

Option 4 Analysis: Visual Workflow Composition Canvas

✅ Pros

- Significantly lowers barrier to entry for non-technical users and domain experts, expanding addressable market beyond developers

- Dual-mode interface (visual + code) reinforces transparency principle by allowing users to see exact file structure being generated, building trust and understanding

- Naturally aligns with file-centric state management—visual compositions directly serialize to workflow definition files that remain version-controllable and portable

- Enables rapid prototyping and iteration cycles, reducing time-to-value for workflow creation compared to manual file editing

- Creates natural documentation artifact—visual workflows serve as executable diagrams that are easier to understand and maintain than raw configuration files

❌ Cons

- Complexity of maintaining bidirectional synchronization between visual representation and underlying file structure; changes in either direction must stay consistent

- Risk of over-simplification in visual abstraction—advanced workflow features may not map cleanly to drag-and-drop paradigms, creating frustration for power users

- Requires substantial frontend development effort and UX design expertise; visual editors are notoriously difficult to build intuitively

- May create false sense of simplicity that masks underlying workflow complexity, potentially leading to poorly designed workflows by non-technical users

- Introduces additional learning curve for users who must understand both visual metaphors and underlying workflow concepts

📊 Feasibility

Moderately feasible with realistic implementation timeline of 3-6 months for MVP. Core technical challenges are well-understood (graph visualization, state synchronization, code generation), and existing libraries (React Flow, Blockly, etc.) can accelerate development. Primary constraints are UX design quality and testing rigor needed to ensure visual-to-file synchronization reliability. Frontend-centric approach aligns well with platform architecture. Resource requirements are moderate—estimate 2-3 full-time developers plus dedicated UX designer.

💥 Impact

Expected to substantially expand platform adoption among non-developer personas (business analysts, domain experts, citizen developers) while maintaining appeal to technical users. Could increase workflow creation velocity by 40-60% for common use cases. Likely to generate positive community sentiment around accessibility and democratization of workflow design. May establish platform as more approachable alternative to enterprise workflow tools. Secondary impact: visual workflows become powerful marketing/demonstration tool for showcasing platform capabilities.

⚠️ Risks

- Synchronization bugs between visual canvas and file representation could corrupt workflow definitions or create inconsistent state, damaging user trust in file-based approach

- Over-reliance on visual editor may discourage users from learning underlying workflow structure, creating lock-in to the visual tool and reducing platform portability benefits

- Performance degradation with complex workflows—large visual graphs can become unwieldy and slow, limiting scalability for enterprise use cases

- Maintenance burden increases significantly; changes to workflow schema require coordinated updates to visual editor, code generation, and documentation

- Visual abstraction may obscure important workflow semantics, leading to subtle bugs that are harder to diagnose than explicit file-based errors

📋 Requirements

- Frontend framework expertise (React/Vue) and graph visualization library proficiency (React Flow, D3.js, or similar)

- UX/UI designer with experience in visual programming tools and workflow systems to ensure intuitive interaction patterns

- Robust code generation engine that reliably converts visual compositions to valid workflow definition files with comprehensive test coverage

- Clear specification of visual-to-file mapping rules and bidirectional synchronization protocol to maintain consistency

- Comprehensive testing infrastructure including visual regression tests, synchronization validation tests, and user acceptance testing with target personas

- Documentation and tutorial content explaining both visual paradigm and underlying file structure to support learning curve

- Community feedback mechanism to iterate on visual design based on real-world usage patterns and pain points

Option 5 Analysis: Community-Driven Plugin Ecosystem

✅ Pros

- Dramatically expands platform capabilities without bloating core codebase, allowing specialized domains (data science, DevOps, legal tech, etc.) to build tailored extensions

- Reduces barrier to contribution for community members who can’t commit to core development, fostering broader ecosystem participation and accelerating feature velocity

- Creates network effects and lock-in through complementary plugins, increasing platform stickiness and competitive moat against monolithic alternatives

- Enables sustainable monetization pathways (premium plugins, support services) while maintaining FOSS core, supporting long-term project viability

- Maintains file-centric transparency by allowing plugins to operate on and expose file-based state, preserving auditability and user control principles

❌ Cons

- Quality fragmentation risk: community plugins of varying quality could damage platform reputation if discovery/curation mechanisms fail or are insufficient

- Increased complexity for end-users navigating plugin selection, compatibility, and dependency management, potentially creating decision paralysis

- Plugin maintenance burden falls on individual contributors, risking abandoned plugins that break with platform updates and creating technical debt

- Security surface area expands significantly; malicious or poorly-written plugins could compromise user data, especially with BYOK architecture where plugins access sensitive credentials

- Standardized interfaces may constrain innovation if too rigid, or create maintenance overhead if too flexible, requiring careful API design decisions

📊 Feasibility

High feasibility with moderate effort. Plugin architectures are well-established patterns (VS Code, Obsidian, Kubernetes operators). The main challenges are: (1) designing clean interfaces that respect file-centric state and BYOK constraints without over-engineering, (2) building robust dependency resolution and version management, (3) establishing governance structures for curation. Timeline: 4-6 months for MVP with basic registry and 5-10 reference plugins; 12+ months for mature ecosystem with security scanning and community governance.

💥 Impact

Expected to significantly expand addressable market by enabling vertical-specific solutions (ML ops workflows, infrastructure-as-code platforms, domain-specific DSLs) without core team scaling. Could increase adoption 2-3x by reducing time-to-value for specialized use cases. Creates community engagement feedback loops that improve core platform. May shift revenue model toward services/premium plugins. Establishes platform as extensibility leader in FOSS dev tools space.

⚠️ Risks

- Security vulnerabilities in third-party plugins could expose user credentials or project files; requires robust sandboxing, code review processes, and security scanning infrastructure

- Dependency hell: complex plugin interdependencies and version conflicts could create support burden and user frustration, especially if dependency resolution is poorly designed

- Ecosystem fragmentation: competing plugins solving same problem with different approaches could confuse users and dilute network effects if curation fails

- Governance challenges: determining which plugins get featured, handling disputes, managing unmaintained plugins, and enforcing contribution standards requires organizational maturity

- Core platform coupling: poorly designed plugin interfaces could create tight coupling that makes core refactoring difficult, or loose coupling that provides insufficient capabilities

📋 Requirements

- Plugin API specification and SDK: well-documented interfaces for file system access, workflow manipulation, LLM integration, and UI extension that respect BYOK and file-centric constraints

- Package registry infrastructure: self-hosted or federated registry with versioning, dependency resolution, security scanning (SBOM, vulnerability checks), and discoverability features

- Governance framework: contribution guidelines, code review process, security audit procedures, deprecation policies, and community council structure for decision-making

- Developer tooling: plugin scaffolding templates, local testing framework, documentation generator, and example plugins demonstrating best practices across 3-5 domains

- Security and sandboxing mechanisms: capability-based access control for plugins, audit logging of plugin actions, optional runtime sandboxing, and clear permission models for BYOK credential access

- Community management resources: dedicated maintainer(s) for ecosystem health, plugin curator role, support channels, and regular communication cadence with plugin developers

Option 6 Analysis: Enterprise Audit and Compliance Framework

✅ Pros

- Directly addresses a significant market pain point: enterprises struggle with compliance costs and vendor lock-in; a FOSS solution with transparent audit logs aligns perfectly with the platform’s core philosophy

- File-based audit logs naturally complement the platform’s file-centric state management, enabling immutable compliance records that users fully control without external dependencies

- BYOK architecture becomes a competitive differentiator in regulated industries where data sovereignty and encryption key control are critical compliance requirements

- Structured workflow design enables compliance-as-code patterns, allowing organizations to define and version control their governance policies alongside development workflows

- Opens enterprise market segment with higher willingness to pay, potentially justifying commercial support offerings while maintaining FOSS core

❌ Cons

- Compliance frameworks are highly jurisdiction-specific and frequently updated; maintaining accuracy across SOC 2, HIPAA, GDPR, and emerging standards requires continuous legal and regulatory expertise

- Enterprises typically require formal audit certifications and vendor attestations; a FOSS project may struggle to obtain SOC 2 Type II certification or equivalent third-party validation

- Compliance modules often require specialized knowledge; the platform risks becoming too complex for core developer audience if compliance features aren’t properly isolated and optional

- Regulatory liability concerns: if the framework fails to meet compliance requirements, users may face legal exposure, creating potential liability issues for the FOSS project maintainers

- Integration complexity: enterprises use diverse compliance tools (Vault, HashiCorp, specialized audit platforms); building comprehensive integrations requires significant ongoing maintenance

📊 Feasibility

Moderately feasible with caveats. The technical foundation (file-based logs, encryption, access controls) aligns well with existing architecture, making core implementation realistic within 2-3 development cycles. However, achieving production-grade compliance certification and maintaining regulatory accuracy across multiple jurisdictions is resource-intensive and may require hiring specialized compliance expertise or establishing partnerships. The FOSS model creates challenges for formal audit attestations that enterprises demand, potentially requiring a dual-track approach (FOSS core + commercial certification services).

💥 Impact

Expected to significantly expand addressable market into regulated industries (healthcare, finance, government) where compliance overhead currently drives vendor selection. Could establish the platform as a credible alternative to proprietary solutions, generating enterprise adoption and potentially enabling commercial support revenue streams. However, impact depends heavily on achieving recognized compliance certifications; without them, adoption may remain limited to organizations with internal compliance expertise. Success would position the platform as a governance-first development tool rather than purely a developer experience platform.

⚠️ Risks

- Regulatory drift: compliance requirements change frequently; failure to maintain accuracy could expose users to non-compliance and create reputational damage to the project

- Liability exposure: if organizations rely on the framework and subsequently fail audits, maintainers could face legal challenges despite FOSS disclaimers, potentially requiring liability insurance

- Scope creep and maintenance burden: compliance requirements are extensive; the module could become unmaintainable without dedicated resources, leading to stale or incorrect guidance

- Market misalignment: enterprises may still prefer vendor-backed solutions with formal SLAs and indemnification; FOSS alone may be insufficient to overcome procurement requirements

- Security implementation errors: compliance frameworks involve cryptography and access control; implementation flaws could create false sense of security and expose users to actual vulnerabilities

📋 Requirements

- Compliance expertise: hire or partner with regulatory consultants familiar with SOC 2, HIPAA, GDPR, and relevant industry standards to ensure accuracy and maintainability

- Legal review process: establish governance for compliance documentation updates, including legal review cycles to prevent liability exposure from inaccurate guidance

- Cryptographic and security engineering: implement robust encryption, key management, and access control mechanisms; may require security audits and third-party validation

- Audit and certification infrastructure: develop processes and documentation to support third-party audits; consider partnerships with audit firms to enable SOC 2 Type II or equivalent certifications

- Integration development: build connectors to common enterprise tools (identity providers, secret management systems, monitoring platforms) to reduce implementation friction

- Documentation and training: create comprehensive guides for compliance officers and developers; develop certification or training programs to build expertise in the community

- Governance model: establish clear policies for compliance module maintenance, versioning, and deprecation to manage regulatory changes without breaking user implementations

Option 7 Analysis: Multi-Modal LLM Integration Layer

✅ Pros

- Significantly expands addressable market by enabling use cases in document processing, code review with screenshots, accessibility features, and content analysis that text-only platforms cannot serve

- Maintains BYOK principle across modalities—users can bring their own vision/audio model credentials (Claude Vision, GPT-4V, Whisper, etc.), preserving user control and avoiding vendor lock-in

- File-centric state management naturally accommodates multi-modal workflows since images, audio files, and documents are already file-based, requiring minimal architectural deviation

- Structured workflow design (vs. chat) is well-suited for multi-modal tasks like batch document processing, automated screenshot analysis in CI/CD, or audio transcription pipelines

- Differentiates platform from chat-centric competitors by enabling deterministic, reproducible multi-modal workflows suitable for production automation and compliance-heavy industries

❌ Cons

- Significantly increases complexity in state management—handling large binary files (images, audio, video) requires careful consideration of storage, caching, versioning, and garbage collection

- Multi-modal model APIs have inconsistent interfaces, rate limits, and cost structures; abstracting these differences while maintaining transparency is non-trivial

- Frontend-centric approach becomes challenging for heavy processing tasks (audio transcription, large image analysis); may require backend support or edge processing strategies

- Introduces new security and privacy concerns—storing/processing sensitive visual and audio data requires robust encryption, audit trails, and compliance considerations beyond text workflows

- Fragmented ecosystem of multi-modal models means ongoing maintenance burden as new models emerge and existing APIs evolve; FOSS community may lack resources for comprehensive support

📊 Feasibility

Moderately feasible with phased implementation. Core infrastructure (file handling, workflow orchestration) already exists; initial phase could focus on vision models (most mature, highest demand) with structured file I/O. Audio and document processing can follow. Estimated 3-6 month MVP for vision support, 6-12 months for comprehensive multi-modal coverage. Resource requirements are moderate-to-high; requires backend engineers for API abstraction layers and frontend developers for multi-modal UI components. Community contribution potential is good for model-specific adapters.

💥 Impact